Imagine you are someone very senior in communications for the higher education subset of the Department for Education. You’ve got a range of things to say about the Teaching Excellence (and Student Outcomes) Framework, none of which are particularly digestible to your average generalist education correspondent but all of which are important.

You plan a midweek launch, considering using specialist press (like Wonkhe) to ensure the detail is captured. This idea noted, you take off for a well-deserved relaxing weekend with your family and friends.

Spin it to win it

But, at 8am on Sunday morning, the phone rings. It’s Number 10 – the prime minister is taking a media pasting via selective leaking of backbench and ministerial hostility. It looks like yet another week of blue-on-blue headlines, with speculation about a leadership challenge. And Boris will probably say something. Bloody Boris.

So here’s the plan: to a backdrop of stories that show a government totally getting on with governing, issues that matter to hard-working families and so forth, the PM will call for unity and highlight the ridiculousness of the way her critics are behaving. One of the Number 10 wonks has drafted a great “personal” opinion piece and The Sun is interested. What have you got for the backdrop?

Your TEF announcements come to mind – you can show action addressing one of the five big HE stories that always get something on page 4 of The Times. Mentally, you tick them off – free speech and snowflakes (no mention in the documents), tuition fees (parked till Augar reports, which won’t happen till the ONS report…), unconditional offers (nothing in the documents), mental health (nothing in the documents), grade inflation…

Grade inflation. There’s definitely something about grade inflation in the consultation response. It’s a metric in TEF, isn’t it? By 8.30am you are on the phone to a tired and grumpy Sam Gyimah, running some basic boilerplate text past him for his quote. Brilliant. “New measure to tackle grade inflation at university” – strong stuff. Bang it out. Subject TEF will review evidence of grade inflation – consequences for universities. Everyone is happy. Bang it out. Time for breakfast.

Never mind that the consultation response agrees that measures for grade inflation were unhelpful at subject level, that the sector is opposed to their inclusion, and thus they will – as planned – only feature in the provider-level TEF.

Reality used to be a friend of mine

Kind of ruins the story, doesn’t it? It’s not often that you get a press release that is diametrically opposed to what the documentation you are releasing says. But these are strange times in government. We covered the use of the grade inflation measure in provider TEF earlier this year, and from what we are led to believe it was not widely used by assessors or panel members during that exercise.

But there are plenty of other interesting pegs that a release could have been hung on. Year two of the Subject TEF (STEF) pilot seems to be akin to that bit of wallpaper behind the sofa that you test paints and cleaning products on – all kinds of modifications and processes are being trialled here and might eventually make their way to the rest of the room.

Hello DLHE?

The addition of the Longitudinal Educational Outcomes (LEO) data to the main TEF metrics has long been expected, albeit with some trepidation. For the STEF pilot, at least, it will replace the more general of the two Destination of Leavers from Higher Education (DLHE) metrics – the DLHE “highly skilled” metric will remain alongside the interloper.

This move is undermined by the decision not to include regional benchmarking. Institutions in less well-off areas pumping highly skilled graduates into the local economy would not expect said graduates to be well remunerated if they stay local. London residents would be expected to be paid better whatever their educational attainment. It would be astonishingly easy to control for region – this is administrative data, after all, and is split by region of residence.
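To make the point concrete, here is a minimal sketch of what controlling for region could look like. Everything in it is an assumption for illustration: the provider names, regions and salary figures are invented, and dividing each graduate's earnings by the median for their region of residence is just one simple way to benchmark, not the method DfE or OfS uses or has proposed.

```python
from collections import defaultdict
from statistics import median

# Hypothetical records: (provider, region of residence, annual earnings in GBP).
# These figures are invented for illustration; they are not LEO data.
records = [
    ("Northern Uni", "North East", 21000),
    ("Northern Uni", "North East", 23000),
    ("Northern Uni", "London", 30000),
    ("London Uni", "London", 29000),
    ("London Uni", "London", 31000),
    ("London Uni", "North East", 20000),
]


def raw_medians(rows):
    """Median earnings per provider, with no regional adjustment."""
    by_provider = defaultdict(list)
    for provider, _, earnings in rows:
        by_provider[provider].append(earnings)
    return {provider: median(values) for provider, values in by_provider.items()}


def regional_medians(rows):
    """Median earnings per region of residence, across all providers."""
    by_region = defaultdict(list)
    for _, region, earnings in rows:
        by_region[region].append(earnings)
    return {region: median(values) for region, values in by_region.items()}


def benchmarked_scores(rows):
    """Median ratio of each graduate's earnings to their regional median."""
    medians = regional_medians(rows)
    ratios = defaultdict(list)
    for provider, region, earnings in rows:
        ratios[provider].append(earnings / medians[region])
    return {provider: median(values) for provider, values in ratios.items()}


raw = raw_medians(records)
scores = benchmarked_scores(records)
print(raw)
print(scores)
```

On this toy data the raw medians favour the London provider, while the region-adjusted scores put the northern provider level with its local labour market and the London provider slightly below its own. That reversal is the kind of effect an unbenchmarked salary metric would miss.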

We could also note that DfE appears not to have realised, or compensated for, the fact that DLHE will very soon be replaced by Graduate Outcomes, with the first of the new series starting data collection this December. HESA has been very clear that there will be no continuity or comparability between DLHE and GO (as we are not allowed to call it). So quite what data would be used for future TEF is unclear.

Intensity fails to intensify

Grade inflation has been a fairly contentious metric – the proposed teaching intensity measure has caused actual fights. It was overwhelmingly disdained by the sector in every proposed configuration, and with 76% of respondents not liking it, the idea will take on a life outside of TEF.

But we do get two new metrics entering STEF – student responses at consultation events have led to the inclusion of measures on “student voice” and “learning resources”, both drawn from the National Student Survey (NSS). All NSS metrics will retain their half-weighting, as currently. We don’t yet know which NSS questions will make up the new metrics – these details may follow in the STEF year 2 pilot documentation, but smart money is on Q26 (Student Unions – an oft-misunderstood question) not playing a part in any “student voice” measure.

It may be that these two new measures are the two into which the current “student engagement” criteria will split. The response isn’t clear on this, but the split is happening again, thanks to student responses.

Another addition is a trial metric on differential attainment. This will look at student awards (first, upper second, and so on) against their background. If you’re wondering how this ties into the grade inflation metric (which looks at one end of this) and the absence of regional benchmarking, we’re equally puzzled. It feels a little bit like the “value added” box that Learning Gain was supposed to tick.

I hear you like making TEF submissions…

If you loved writing your institutional TEF submission, you’ll be overwhelmed with joy at the thought of writing one for each of 35 CaH level two subject areas. Backed by a lot of language concerning DfE choosing “usefulness for students” over “reducing burden”, this still represents a hell of a lot of submissions, however you cut it.

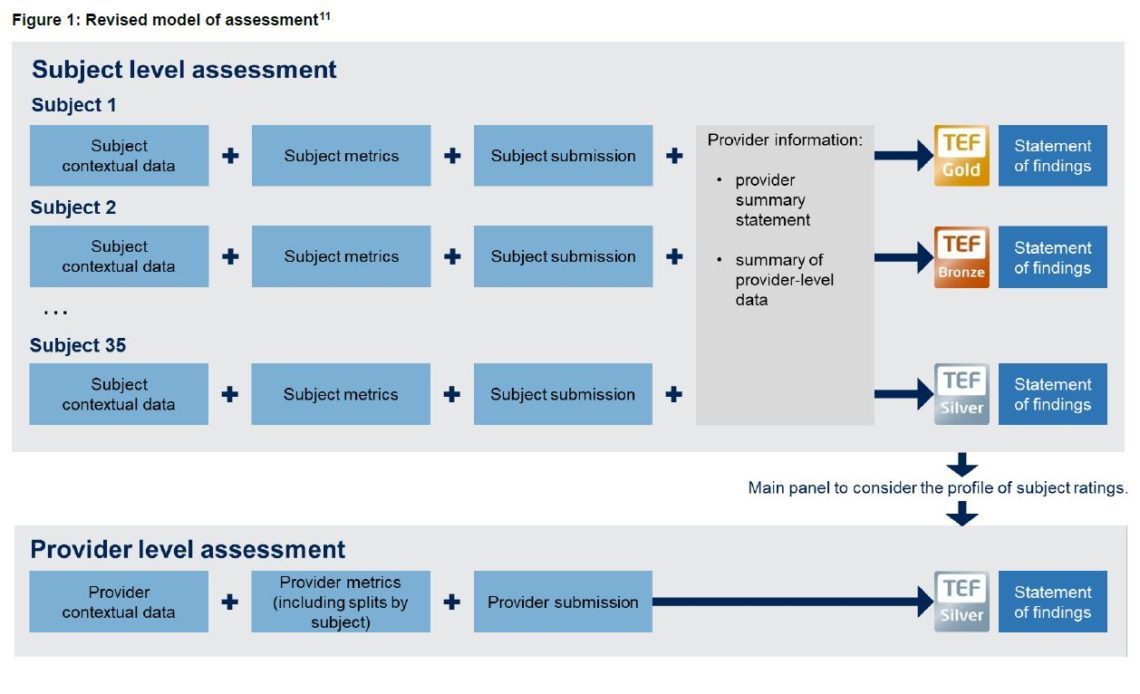

This of course, is on top of the existing provider submissions. Provider TEF is currently seen as running parallel to, but informed by STEF, as this useful diagram from the response demonstrates:

The decision between “top down” (STEF by exception where metrics indicate a difference from the provider rating) and “bottom up” (provider TEF constructed from STEF building blocks) has come down firmly on the side of “a bit of both” – a hitherto unexplored option that appears to mean running two similar exercises using similar data in near isolation from each other.

There’s a little bit of respite – STEF will run only once every two years, and awards will last for at least four. The final decision on award length has been pushed back to the end of the year two pilot. STEF is only available for subject areas within institutions that have more than 20 students registered to them (OfS initially suggested 40, but this was felt to exclude too many students, so coverage has won out at the expense of statistical integrity – as a superbly nerdy table in Annex C demonstrates). And if you like it when DfE gets into scraps around the use of statistics, the decision to make use of data with 90% confidence levels should start some interesting hares running.
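For a rough feel of what is being traded away, the sketch below compares the margin of error on a metric for a cohort of 20 against a cohort of 40, at 90% and 95% confidence. It assumes a simple normal approximation for a proportion and an invented 80% positive rate. The response does not set out its statistical method in these terms, so treat the function and the numbers as illustrative only.

```python
from math import sqrt
from statistics import NormalDist


def half_width(proportion, cohort_size, confidence):
    """Half-width of a normal-approximation confidence interval for a proportion.

    This is a textbook approximation chosen for illustration; it is an
    assumption, not the calculation used in TEF.
    """
    # Two-sided interval: put half of the leftover probability in each tail.
    z = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    return z * sqrt(proportion * (1 - proportion) / cohort_size)


# Hypothetical metric value: 80% of a cohort responding positively.
for cohort_size in (20, 40):
    for confidence in (0.90, 0.95):
        margin = half_width(0.8, cohort_size, confidence)
        print(f"n={cohort_size}, {confidence:.0%} confidence: +/- {margin:.1%}")
```

Under these assumptions a cohort of 20 carries a margin of roughly 15 percentage points at 90% confidence, against about 10 points for a cohort of 40. Dropping from 95% to 90% confidence narrows the interval, which makes it easier for a small cohort to register as different from its benchmark.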

Under review

Of course, all of this is just a pilot exercise. Your institution can join in as one of 50 test sites, should you feel inspired by what you have read above. And the results of the pilot will inform the design of the final STEF, should it – or indeed, TEF as a whole – survive the statutory review that will run alongside the pilot year.

Everything is up for grabs in the latter – including a chance to be rid of the precious metal level demarcations. The reviewer will be appointed by the end of this year and will report by the end of the next.