Some wonks may remember an addictive computer game called The Incredible Machine, in which the player has to construct increasingly convoluted machines to achieve specified goals. The idea of nudging a basketball into a rubbish bin using a set of bellows may stand as a metaphor for contemporary policy design.

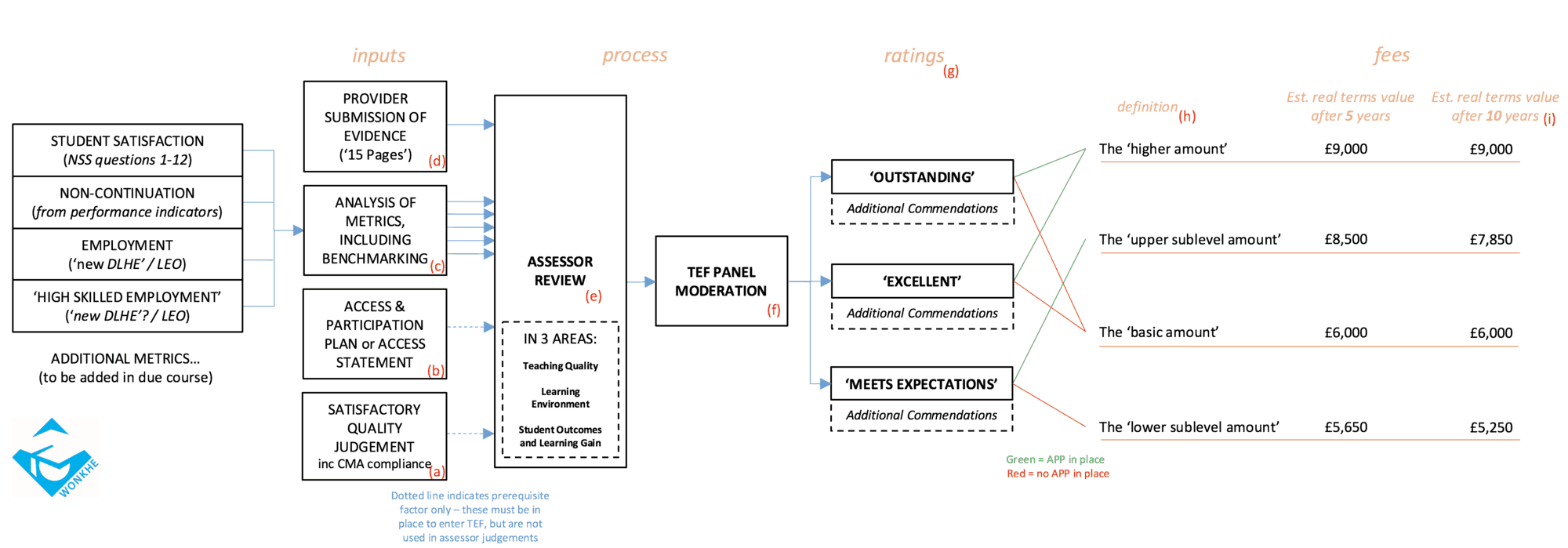

Since the TEF was unveiled last year, we’ve been working to understand the various inputs and outputs of the new framework. Our first attempt to explain the TEF visually, following the Green Paper, was here. Now that the White Paper has been published and further details of the TEF are known, we’ve decided to update our Incredible Machine.

This is an attempt to represent the TEF after five years of operation, so we do not show the incremental differences over the introductory period. For those, see our rollout timeline here.


Notes

a. It has been confirmed that achieving one of a range of positive quality judgements will be a prerequisite of TEF entry, although by 2020 it is anyone’s guess what the quality judgements will look like, as the review methods involved will inevitably change. In particular, the White Paper indicates that compliance with Competition and Markets Authority guidance will be ‘built in’ to future quality assurance review.

b. It has also been confirmed that there will be two possible ways for a provider to demonstrate access and participation credentials in the TEF – either by holding a formal ‘Access and Participation Plan’ agreed with the OfS, or by submitting an ‘Access Statement’ on its work in this area.

c. Metrics in relation to student satisfaction, non-continuation and employment outcomes will feature, as expected. The employment outcomes metric will be complemented by a second metric for ‘high skilled’ employment, and it is likely that both will be underpinned by a revised DLHE survey and by a new ‘Longitudinal Employment Outcomes’ dataset derived from student loan and tax records. Further metrics – e.g. on contact hours – may be included by 2020, but this is not confirmed.

d. As expected, providers will be able to submit an additional portfolio of evidence, in a prescribed format (possibly a very prescribed format!).

e. All of these elements will be considered in the first instance by a team of Assessors, first individually and then in groups. Presumably not every Assessor will be asked to look at every entrant, so it will be interesting to see how entrants are allocated. Assessors will review each entrant in three areas: Teaching Quality; Learning Environment; and Student Outcomes and Learning Gain.

f. Assessor reports will then be sent to a single main TEF Panel, where they will be moderated and then revised or confirmed. The Panel will be drawn from the pool of Assessors and will have an independent chair appointed by the OfS. The Panel will not be able to decline to give a TEF rating if the quality threshold has been formally met.

g. There will be three possible TEF ratings: ‘Meets Expectations’, ‘Excellent’, or ‘Outstanding’. In addition, commendations may be awarded for distinction in particular areas, regardless of the overall rating given. It is important to note that to achieve ‘Excellent’ overall, a provider must be deemed ‘Excellent’ in all three areas of review – so this may be quite a high threshold. The same logic follows for ‘Outstanding’.

h. Different combinations of TEF rating and access document will determine the level at which a provider’s undergraduate fees may be capped. We have used the descriptions of the different fee caps from Schedule 2 of the draft legislation. We have not shown every detail here: for example, a provider with no TEF rating at all may be permitted to charge only a ‘floor amount’, but this will be unusual given the near-default status of a ‘Meets Expectations’ rating.

i. If the policy set out in the White Paper is adopted, our estimated cap levels would be as shown (assuming RPI-X averages 3% per annum over the period, discounting at the same rate, and rounding to the nearest £50). It should be noted, however, that in the legislation as drafted nothing compels ministers to use any kind of index in setting the sub-level caps relative to the others (the other caps can be varied only by indexation or by Parliament). In any case, significant variation in fee income may arise over time, and providers must maintain an ‘Outstanding’ rating continuously to preserve the real-terms value of their fee income. See a breakdown of the value of these rewards in our analysis here.
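The arithmetic behind note i can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not our actual model: the £9,000 starting cap, the four-year horizon and the uplift shares (the fraction of inflation a cap is allowed to rise by) are hypothetical placeholders, and the real mapping from rating to uplift is set by the White Paper policy and Schedule 2 of the draft legislation. What it does show is why a cap indexed by less than full inflation loses real-terms value year on year.

```python
import math

INFLATION = 0.03  # assumed average RPI-X per annum, as in note i
ROUND_TO = 50     # caps rounded to the nearest £50


def round_to_nearest(value: float, step: int = ROUND_TO) -> int:
    """Round half-up to the nearest multiple of step (avoids Python's banker's rounding)."""
    return int(math.floor(value / step + 0.5) * step)


def project_cap(base: float, uplift_share: float, years: int,
                inflation: float = INFLATION) -> tuple[int, int]:
    """Project a fee cap forward by `years`.

    uplift_share is a HYPOTHETICAL fraction of inflation the cap may rise by:
    1.0 means full indexation, 0.5 half, 0.0 a frozen cap. Returns
    (nominal, real), where real is the nominal cap discounted back at the
    same inflation rate. Compounding uses unrounded values; only the
    reported figures are rounded.
    """
    nominal = base * (1 + uplift_share * inflation) ** years
    real = nominal / (1 + inflation) ** years
    return round_to_nearest(nominal), round_to_nearest(real)


if __name__ == "__main__":
    # Hypothetical uplift shares: full, half and no indexation.
    for share in (1.0, 0.5, 0.0):
        nominal, real = project_cap(9000, share, years=4)
        print(f"share {share:.1f}: nominal £{nominal:,}, real £{real:,}")
```

Under these assumptions, full indexation keeps the real value at £9,000 after four years, while half indexation or a frozen cap leaves it worth roughly £8,500 or £8,000 respectively in today’s money.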

This article was amended at 2.40pm on 26th May 2016. We originally wrote that it could be technically possible for a panel to decline a TEF award, but this will not be possible if a provider has met the formal satisfactory quality judgement.
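The aggregation rule in note g, under which a provider must be ‘Excellent’ in all three areas to be rated ‘Excellent’ overall, behaves like taking the minimum rating across the areas. The Python sketch below assumes that an ‘Outstanding’ area rating also clears the ‘Excellent’ bar, and it ignores commendations and the Panel’s moderation step; it is our reading of the rule, not an official specification.

```python
from enum import IntEnum


class Rating(IntEnum):
    """TEF rating levels, ordered so that a higher value is a better rating."""
    MEETS_EXPECTATIONS = 1
    EXCELLENT = 2
    OUTSTANDING = 3


# The three areas of review described in note e.
AREAS = (
    "Teaching Quality",
    "Learning Environment",
    "Student Outcomes and Learning Gain",
)


def overall_rating(area_ratings: dict[str, Rating]) -> Rating:
    """Return the overall rating as the lowest rating across all three areas.

    Assumes a provider counts as 'Excellent' in an area if it is rated
    'Excellent' or better there, so the overall rating is the minimum.
    """
    missing = [area for area in AREAS if area not in area_ratings]
    if missing:
        raise ValueError(f"missing area ratings: {missing}")
    return min(area_ratings[area] for area in AREAS)


if __name__ == "__main__":
    ratings = {
        "Teaching Quality": Rating.OUTSTANDING,
        "Learning Environment": Rating.OUTSTANDING,
        "Student Outcomes and Learning Gain": Rating.EXCELLENT,
    }
    print(overall_rating(ratings).name)  # EXCELLENT
```

On this reading, two ‘Outstanding’ areas cannot lift a single ‘Excellent’ area, which is why the overall threshold may prove quite high.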

In the mid-1970s the A level General Studies papers of the now long defunct Northern Universities’ Joint Matriculation Board (aka “JMB”) had a diagram of a Victorian-style ‘incredible’ mechanical machine, full of levers, wheels and cogs connected as a system. Candidates were invited to answer questions such as “what happens to Wheel E if you move Lever B through 90 degrees anticlockwise?”, etc. These papers must be archived somewhere for posterity – it would be interesting to see what they look like again.

We could set another paper for the modern day with the “TEF Incredible Machine” – “What outcome is possible if Metric X is above benchmark but Metric Y is below and the university is not in the Russell Group?” ….

Chances are that the JMB examiner-designed system was better worked out and more deterministic …