This page is now out of date following the publication of the Higher Education White Paper. Find our updated version here.

Some wonks may remember an addictive computer game called The Incredible Machine, in which the player has to construct increasingly convoluted machines to achieve specified goals. The idea of nudging a basketball into a rubbish bin using a set of bellows may stand as a metaphor for contemporary policy design.

Since the Green Paper was released under embargo yesterday, we at Wonkhe have found that one has to read it over and over again, with a set of multi-coloured post-it notes on hand, to really get to grips with the Teaching Excellence Framework proposals it contains. This is because the many different bits and pieces of TEF architecture it describes are scattered throughout the document without any logic, and means are jumbled with ends.

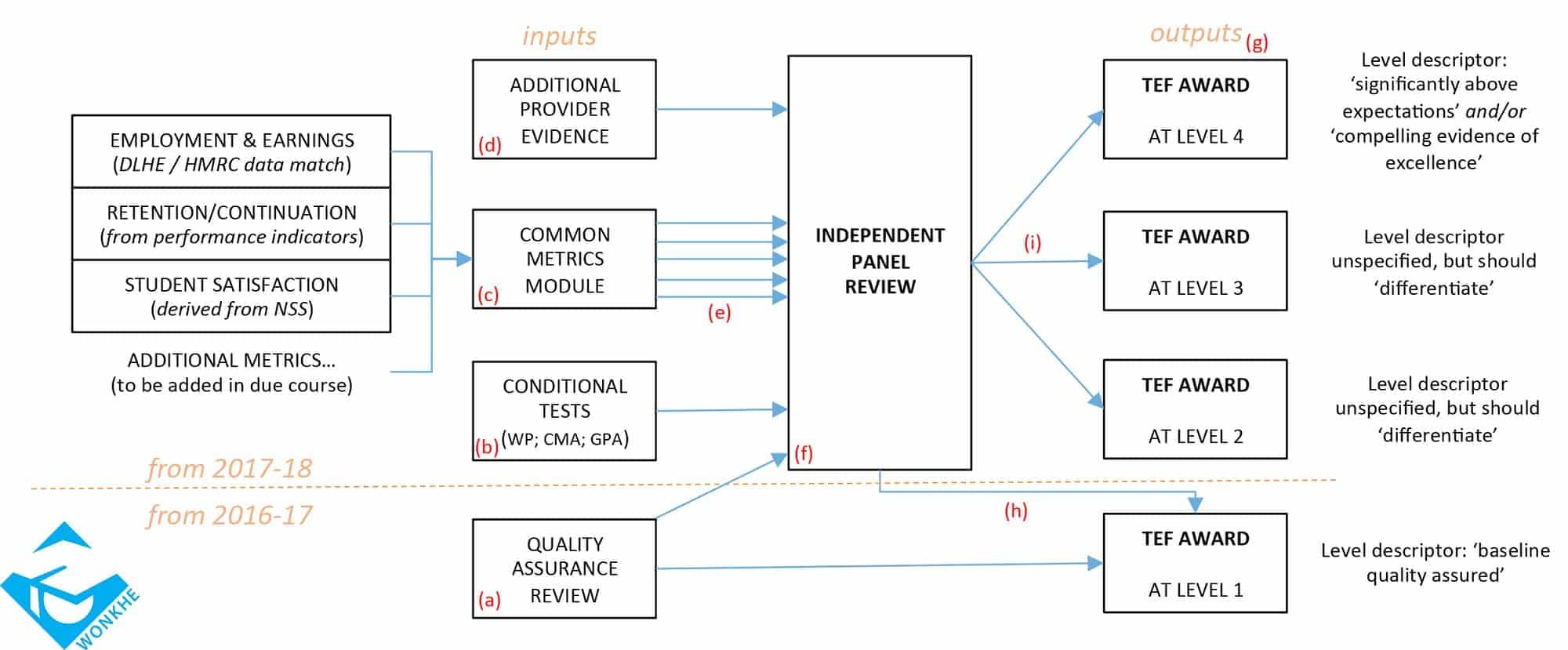

As the government hasn’t thought it beneficial to provide any visual plan of the overall design, we will make the first attempt. Our interpretation sees the TEF as a modular machine, with inputs, processing, and outputs – and each set of components is associated with different rules and conditions. The exercise has helped us to understand what is being proposed, and hopefully it will help others, but we are also happy for this to be ‘version one’ and eagerly invite crowdsourcing of improved diagrams, either based on ours, or completely different approaches. The process has also raised new questions about the TEF that haven’t emerged in the commentary thus far, and these can be fed into the consultation.

The main lesson is perhaps that the TEF may prove to be an ‘incredible’ machine in both senses of the word.


KEY (with references to the Green Paper in parentheses)

The inputs:

a. In 2016/17, a satisfactory quality assurance review from the QAA (or from the ISI for course designation, or equivalent), in place by February 2016, will lead directly to a TEF Level 1 award (Chapter 1, Paras. 26, 27). As far as we can tell, candidacy for these awards will not go to the Independent Panel. Those who reach TEF Level 1 in 2016/17 will be allowed to raise their fees in line with inflation in 2017/18.

b. Applications for higher TEF awards will be subject to three ‘pre-conditions’ that will be assessed by the Independent Panel:

- The provider will need an Access Agreement or similar device (C1, P19)

- The provider will need to show it is compliant with ‘market practice’ guidelines set out by the Competition and Markets Authority (C2, P3)

- The provider will need to state whether or not it uses a Grade Point Average assessment system (C1, P40); but note that the requirement is only to state its position, and actual use of such a system is not to be a ‘prerequisite’ for higher TEF awards

c. Applications for higher TEF awards will be informed by ‘common metrics’, initially drawn from a set of three (C3, P12): employment and earnings (starting with DLHE but going on to use data from the HMRC data match), retention and continuation (from HESA’s performance indicators), and student satisfaction (derived from the NSS). These metrics will change over time; in particular, note that the NSS is itself under review, and several suggestions for other metrics have been put forward (C3, P14). As the metrics will presumably change every year, and providers will be on different assessment cycles (there is no ‘gathered field’ as in the REF; C2, P6), providers will routinely be judged against differently constructed metrics depending on when they are assessed or re-assessed. At the point of use, therefore, these are not really ‘common’ metrics at all.

d. Providers will be able to supplement these metrics with additional evidence, both quantitative and qualitative (C3, Ps. 13, 17), of various types.

e. Reporting of metrics will be disaggregated by student background (C3, P4) to show performance in the context of differences in student profile.

The processor:

f. Applications for higher TEF levels will be assessed by an Independent Panel, composed of ‘academic experts in learning and teaching, student representatives, and employer/professional representatives’ (C2, P9). Note that there is no proposal to include provider representatives on this panel. The assessment framework will cover:

- teaching quality;

- learning environment;

- student outcomes and learning gain;

…and various sub-factors are also sketched (C3, Ps. 5, 7, 8, 9). Re-assessments are envisaged on a three-to-five-year cycle, with trigger events for earlier re-assessment (C2, P5).

The outputs:

g. TEF Level 1 might best be described as ‘baseline quality assured’, as it depends only on the QA review input module. The Independent Panel will make higher TEF awards at either two or three additional levels. Levels 2 and 3 are not further defined, but are indicated to be ‘differentiation levels’ (C2, P15). Level 4 is further defined as ‘requiring performance significantly above expectations’ and/or ‘compelling evidence of excellence’ (C2, P15). This implies that providers can win the ultimate TEF prize by being “better than they really ought to be – if you know what we mean”, and that any provider who doesn’t reach Level 4 may be deemed “excellent alright, but not quite compellingly so – if you know what we mean”. The problem is that we don’t know what they mean.

h. Presumably, it will also be possible to fail a panel assessment and get pushed back out with a Level 1 award, though this isn’t explicitly stated.

i. At some point it is envisaged that these award levels may be given differentially for different subject areas within all providers and that these would then be aggregated to form an award for the provider as a whole (C1, P23); multiple independent panels would then be formed, presumably feeding into a ‘lead panel’ of some kind – needless to say, we haven’t even tried to put any of this in the diagram.

NHS-commissioned courses in HE, e.g. nursing and allied health, have had a metric-based quality assurance system (in effect a ‘TEF’) for years. It has been used as the basis for provider exit and competitive tendering, and has driven an employer-led market in healthcare education. Maybe there is some learning here for the sector, from both the better bits and the mistakes.

It’s still a mystery to me how teaching relates to graduate earnings. I have not seen one study that relates teaching quality (or student experience, for that matter) to graduate earnings.

The even more ridiculous and illogical assumption with schemes like this (and we’re getting something similar in Australia – or at least suggestions of it) is that graduate earnings are predictive of good teaching. This is really the reason for these setups, right? To give the customers (we used to call them students) some sort of “informed choice” in their consumer decisions?

I can save them time. Universities that enrol the richest, best-educated students with the most cultural capital tend to have the richest, best-educated graduates with the greatest capacity to leverage “success” in an unequal world.

That’s it.

The real trick is to choose the right parents in the first place.

This is a sound summary of the document. What is interesting is how much of the Green Paper is not about the TEF and does not really address current HEIs. Much of it concerns speedier access to university status for new providers, perhaps without a campus and maybe with fewer than 50 students. I feel these proposals should have been put in a separate document, because too often when reading the Green Paper it is as if the authors have forgotten about the TEF and gone on about new providers, only to think, ‘sorry, yes, this is supposed to be about the TEF, so here is a little more on that, but about new providers…’.

Given the request for input, the risk is that if you comment only on the TEF, it appears as if you are tacitly supporting the new provider proposals, and vice versa. That may be used to suggest support for one or the other, or even both, when no such support genuinely exists. What is also interesting is that the report effectively shrugs whenever the question of what good-quality teaching looks like arises. It suggests, in part, that this will come from student satisfaction. However, we already know that students tend to favour the easiest path to the highest grades, and that path is often contrary to the best teaching, and especially the best learning, whether for further academic study or for employment. Despite the statement that the authors do not want to suppress innovative teaching, that may well be the effect, because innovative teaching tends to be challenging for students and thus unpopular with them.

Current surveys are about satisfaction and popularity rather than quality. However, in our current society these three are seen to be identical or at least guaranteed to inform each other.

Good observations, Keir. I agree: much of the Green Paper is concerned with marketisation of HE to new providers and reads like a how-to guide for QA. It also blurs important areas like widening participation, equality and diversity, and doesn’t reference research-led teaching at any point. I think it’s important to respond to the Green Paper, though, and voice concerns. It’s time we all pulled together.