Why doesn’t OfS care about community?

Jim is an Associate Editor (SUs) at Wonkhe

It’s not so much the removal of the “summative” question – it’s been clear that that was going (at least in England) since the government issued its weird “downward pressure on standards” edict in 2020.

It’s not that OfS has dropped previous commitments to publish a national analysis of the free text comments, to pilot an all-years survey, or to develop measures to regularly review the impact of the survey on institutional behaviours and the student experience.

It’s not the clustershambles that is the question on students’ unions – which uses HEFCE-era jargon to ask how well students think their union represents their “academic interests”. How has OfS managed to make the question with the highest “neither agree nor disagree” score in the survey even more confusing?

It’s not the repeated announcements that students will be “asked about mental wellbeing services” when in fact they will be asked only about the communication of those services. That a collective response of “we knew all about them, and they were crap” would generate a high score may be a new low in survey design for a regulator that used to be obsessed with outcomes, but on mental health can’t even seem to design a question about outputs.

It’s not the inclusion of a question on freedom of expression. I guarantee it will generate results showing that students who are usually subject to discrimination are less likely to feel free to share opinions and views, making a case for EDI work to enable them to speak that OfS’ eventual guidance on free speech will almost certainly ignore.

Nor is it the complete absence of mention of postgraduates, despite two pilots that followed OfS’ Conor Ryan taking to this site over four years ago to proclaim that the voice of PGT students is “not well represented when it comes to strategic thinking in HE or in wider policy development”.

It’s the complete removal of the learning community questions.

Back in the 2014 review of the survey, community emerged as a key theme from consultation, and only 13 per cent of students disagreed that questions on learning community should be included – and so following some positive cognitive testing, since 2017 students have been asked whether they “feel part of a community of staff and students”, and whether they have had the “right opportunities to work with other students”.

We know there’s a strong correlation between responses and student mental health, and our belonging research reminds us of how important course level community is to outcomes.

But the two questions are to be removed. OfS didn’t mention the prospect in the Phase One review in March 2021, we were given no rationale for the omission of the questions from the 2021 new question set pilots, and the removal of the questions wasn’t explicitly proposed or consulted on in the July 2022 exercise.

Nevertheless, the report of that consultation says:

The question was not designed to measure aspects of freedom of expression or sense of belonging, as some of the responses to the consultation suggested.

That will come as a surprise to those that carried out the cognitive testing back in 2015, which found that students understood “community” to mean feeling part of an active and engaged group of students, feeling supported by staff and other students and “feeling a sense of belonging to their course and/or institution”.

If it wasn’t supposed to measure belonging, what was it supposed to do? OfS continues:

We did however find that some respondents were also interpreting the current NSS question on ‘belonging to a learning community’ to be about their wider sense of community. Feedback from students suggested this was particularly true of students from some ethnic groups. Distance learners also poorly understood this question. As such, we have decided to remove this question from the questionnaire.

Is it even possible or wise to disaggregate feelings of community in relation to learning from something “wider”? Shouldn’t we be able to interrogate the “ethnic groups” finding that apparently emerged from a consultation that didn’t actually consult on the issue? Isn’t community now widely believed to be vital to learning success? And how did the issue even come up if you weren’t consulting on it?

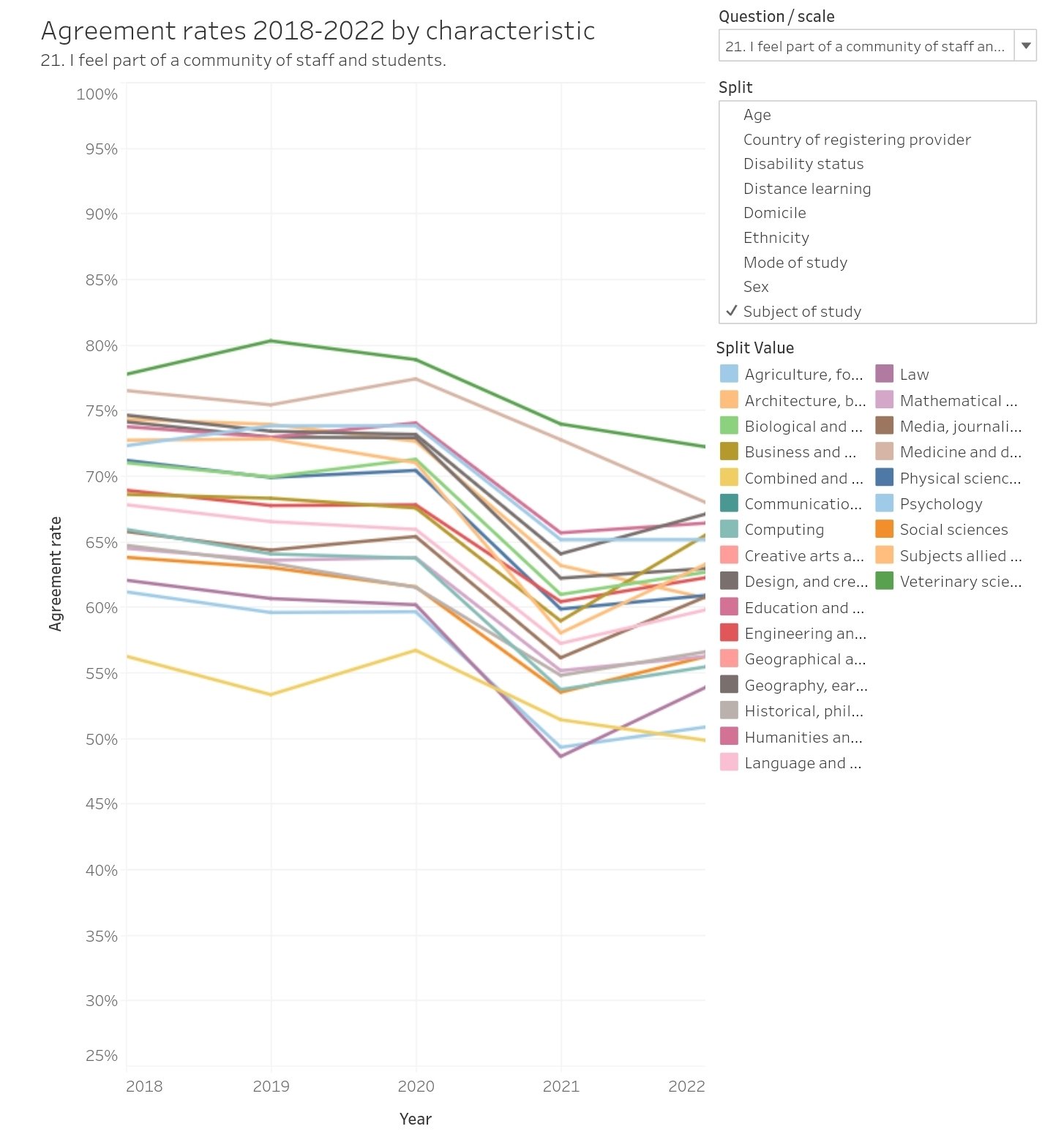

If, nationally, this has been the impact from Covid – wouldn’t we want to be obsessed with turning those lines back upward?

And locally, if you found out that a subject area or student characteristic scored comparatively low on the community question, isn’t that a directly actionable and impactful finding in a sea of otherwise relative headscratchers?

It’s not so much the feeling that the consultation has been a sham. It’s the sense that OfS doesn’t really understand how students learn at all.

The “distance learning” quip is especially vexing. Again, no underpinning evidence is supplied. But OfS was sufficiently worried about part-time students’ sense of community to highlight it as a concern in its 2020 NSS press release. All the research I read suggests that educators should be thinking about ways to generate a sense of community amongst part-time and distance learners, not just ignoring it as a “preference”.

In OfS’ own blended learning review, the expert panel identified significant evidence that students experienced isolation when delivery was online, and spoke of a longer-term impact on their sense of academic community because they had fewer opportunities to meet other students during periods of national lockdown.

It concluded that:

- A course that is predominantly taught through large-scale lectures without providing opportunities for small group teaching would be likely to raise compliance concerns.

- For PGR students, failing to provide opportunities for structured engagement with other researchers would also be likely to cause compliance concerns.

- A course delivered using blended approaches that does not foster collaborative learning among students would be likely to raise compliance concerns in relation to whether it is effectively delivered.

And in a case study where a provider talked about MS Teams but students said they were not engaged with each other, OfS said the approach would raise compliance concerns, as the lack of engagement between students on a course and the link to non-continuation suggested that the course may not be “effectively delivered”.

Yet less than two weeks later it’s removing the two questions that would have highlighted those compliance issues!