Only a select few ever enjoy talking about the Transparent Approach to Costing (TRAC). Even for wonks it is often judged too niche to focus on. But maybe now, with Subject-FACTs playing a central role in the Augar report, is the right time to get up to speed.

The narrative that providers are drowning in money following the 2012 reforms is a common one; it has bled into the recommendations offered by Augar and the demands of ministers. But analysis of TRAC(T), and the parallel KPMG study, demonstrates otherwise: providers do get a lot of money, but they also do a lot of work. And although the data showing there’s no profit from home students is not perfect, there is no data at all suggesting a £1,500 excess.

Where does the idea of an excess come from? Government full economic costing rules mean that institutions are required to allocate a portion of income to a Margin for Sustainability and Investment (MSI) in TRAC. This margin covers parts of the “real” cost of provision, including things as diverse as maintenance of physical assets, additional student support, and a surplus to account for risk and development.

MSI is calculated in a standardised manner from three years of audited accounts and three years of forecasts signed off by the governing body. Through providers’ consistent application of these rules in TRAC, it currently averages 9.8% across the whole sector. The Financial Sustainability Strategy Group reviewed this process last year and found that it is working as expected and is not producing results out of line with previous methods.

What the panel appears to have done is to assume that the value of MSI is arbitrary and thus ripe for reduction. This is nothing other than wishful thinking and a cavalier disregard of the need to plan for future expenditure, as all businesses do. There is no data suggesting that we can teach most students in any subject for £7,500 without a considerable loss of teaching quality or institutional viability.

But we’ve got a lot of data to get through to back that statement up – so I suggest we start with last week’s TRAC data from the Office for Students.

The OfS report

The recently released 2017-18 OfS annual sector summary and analysis offers an overview of the financial stability of English and Northern Irish HE providers. Rather than looking at profit and loss overall, TRAC uses data on the amount institutions spend on various activities and compares it with the income related to those activities.

Though one side of this equation is based on self-reported figures, these figures are judged representative enough to feed into the calculation of the price band threshold that determines which subjects receive extra funding from OfS on top of student fees.

For those happy to go along with the Augar panel’s interpretation of institutional spending – not good news. Providers lose money (on aggregate) on all (UG and PG) publicly funded teaching. And it has got worse over the last year.

Publicly funded teaching incurred a small deficit on a full economic cost basis – meaning that costs exceeded income, with 98.3 per cent of the full economic costs recovered. This is a deterioration from 2016-17, when 99.7 per cent of the full economic costs were recovered.
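The recovery figures above are simple ratios of income to full economic cost (fEC). A minimal sketch of that arithmetic follows, assuming an fEC indexed to 100 so that income equals the published recovery percentage. The function names are illustrative, not part of any TRAC tooling, and no real £ values are implied.

```python
# Illustrative only: fEC is indexed to 100 (an assumption), so income equals
# the published recovery percentage. No real £ values are implied.

FEC_INDEX = 100.0


def recovery_rate(income: float, full_economic_cost: float) -> float:
    """Percentage of full economic cost recovered by income."""
    return 100.0 * income / full_economic_cost


def shortfall(income: float, full_economic_cost: float) -> float:
    """Shortfall as a percentage of fEC (a negative value would mean a surplus)."""
    return 100.0 - recovery_rate(income, full_economic_cost)


for year, income in [("2016-17", 99.7), ("2017-18", 98.3)]:
    rate = recovery_rate(income, FEC_INDEX)
    gap = shortfall(income, FEC_INDEX)
    print(f"{year}: {rate:.1f}% recovered, shortfall {gap:.1f}% of fEC")
```

On these figures the shortfall grows from 0.3% to 1.7% of fEC: more than five-fold, though from a small base.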

Research also continues to show a substantial deficit, whereas non-publicly funded teaching (international and professional students, mostly) and other activity (conferencing, commercial activity) produce a surplus – in the former case a substantial surplus – but the sector still remains, overall, in deficit.

We don’t (ever) get details of TRAC by individual institution. You can be sure these figures exist, and that they have played a part in the non-panel component of the post-18 review – work has been undertaken by KPMG (an organisation with a long history of involvement in TRAC – as we detailed in our guide to workload modelling) to inform this.

One important way of looking at differences in this data uses TRAC peer groups. This approach splits the sector into six groups based on 2012-13 data, as follows:

- Peer group A: Institutions with a medical school and research income of 20% or more of total income

- Peer group B: All other institutions with research income of 15% or more of total income

- Peer group C: Institutions with a research income of between 5% and 15% of total income

- Peer group D: Institutions with a research income less than 5% of total income and total income greater than £150M

- Peer group E: Institutions with a research income less than 5% of total income and total income less than or equal to £150M

- Peer group F: Specialist music/arts teaching institutions
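The thresholds above can be read as an ordered set of rules. The sketch below encodes them as a hypothetical Python function. The precedence (checking the specialist arts group first), the treatment of boundary values (exactly 5% falls in C, exactly 15% in B, following the wording of the list), and the `Institution` fields are all assumptions for illustration, not the official assignment procedure.

```python
from dataclasses import dataclass


@dataclass
class Institution:
    """Hypothetical record; field names are illustrative."""
    name: str
    research_share: float    # research income as a fraction of total income (0-1)
    total_income_m: float    # total income in £ millions
    has_medical_school: bool
    specialist_arts: bool    # specialist music/arts teaching institution


def trac_peer_group(inst: Institution) -> str:
    """Assign a TRAC peer group letter using the thresholds listed above."""
    if inst.specialist_arts:          # assumed to take precedence over the other rules
        return "F"
    if inst.has_medical_school and inst.research_share >= 0.20:
        return "A"
    if inst.research_share >= 0.15:   # all other institutions with 15% or more
        return "B"
    if inst.research_share >= 0.05:   # 5% up to (but not including) 15%
        return "C"
    # Research income below 5%: split on total income
    return "D" if inst.total_income_m > 150 else "E"


examples = [
    Institution("Hypothetical Medical U", 0.25, 600, True, False),
    Institution("Hypothetical Teaching U", 0.03, 150, False, False),
    Institution("Hypothetical Conservatoire", 0.02, 30, False, True),
]
for inst in examples:
    print(inst.name, trac_peer_group(inst))
```

Note that an institution with a medical school but research income between 15% and 20% lands in group B under these rules, since group B covers "all other" institutions above the 15% line.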

(The actual institutions in each group are shown via the link above.)

Table 2 in the OfS report shows that for group B (research income greater than 15% of total, no medical school) the average institution makes a very small profit from publicly funded teaching. In all other groups where data is available, the average institution makes a small loss, ranging from 0.4% (Group C) to 5.3% (Group E).

It is notable that the median institution in groups A, B, and C either breaks even or makes a small profit, but in groups D and E the median institution makes a loss. This feels counterintuitive at first: surely “research-informed teaching” is a more expensive business?

But if you consider that less research-intensive institutions tend to take more students from non-traditional backgrounds, it makes sense: students who need more support clearly cost more to teach. To be clear, these aren’t massive differences of the kind we find in research cost recovery. It seems everyone is subsidising research, but some more than others, and less research-intensive institutions are subsidising it the most.

You’re probably now wondering how reliable this data is. After all, it is self-returned, though there is a model governance process at institutional level. Some like to craft TRAC conspiracies, but how likely is it that every provider has conspired for more than a decade to go against TRAC guidelines and pretend that teaching is more expensive than it actually is?

Augar commissioned two new reports on this topic: an ad hoc briefing on TRAC(T) from the DfE, and a detailed extension of the TRAC methodology from KPMG, based on additional data provided by a small sample of 40 providers. Let’s take a look at each.

The ad-hoc briefing

Starting with a neat definition of TRAC, “historic expenditure information plus a sustainability cost adjustment”, the ad hoc DfE report ranks HESA subject cost centres by the percentage change in Subject-FACTs (the Full Average subject-related Cost of Teaching an OfS fundable FTE student in a HESA academic cost centre) for all fundable students. You’ll find the table on page 74 of the full report.

Subject-FACTs are more usually used in calculating average subject premiums, to inform the allocation of OfS funding linked to high-cost subjects. To say that this is not an exact science is perhaps understating the indicative nature of these statistics. DfE really don’t help things by presenting the percentage change between 2011 and 2017 without taking into account the change in academic cost centres in 2012, the overall percentage change in spending per student across all subjects, or changes in student volumes between subjects. The report notes the first issue, but not the others.
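One way to supply the missing baseline is to express each subject’s change relative to the sector-wide change in spending per student. The sketch below does this by dividing growth ratios; it is a simple adjustment chosen for illustration, not DfE’s method, and the figures in the usage lines are invented. It deals with neither the 2012 cost centre changes nor shifts in student volumes.

```python
def pct_change(old: float, new: float) -> float:
    """Simple percentage change, as used in a raw ranking."""
    return 100.0 * (new - old) / old


def pct_change_relative_to_sector(subject_old: float, subject_new: float,
                                  sector_old: float, sector_new: float) -> float:
    """Subject's percentage change after removing the sector-wide change.

    Divides the subject's growth ratio by the sector's growth ratio, so a
    subject that grew exactly in line with the sector scores 0.
    """
    subject_ratio = subject_new / subject_old
    sector_ratio = sector_new / sector_old
    return 100.0 * (subject_ratio / sector_ratio - 1.0)


# Invented figures (£ per FTE) purely to show the calculation.
print(f"{pct_change(8_000, 10_000):.1f}%")
print(f"{pct_change_relative_to_sector(8_000, 10_000, 7_500, 9_000):.1f}%")
```

Here a headline rise of 25% shrinks to about 4.2% once a sector-wide rise of 20% is stripped out, which is the kind of context a raw ranking omits.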

There has been a lot of money coming into the sector post-2012. If this money has been spent on improving the provision and support for students then it is difficult to complain. What else would we expect providers to do with student-related funding? Over time there has been speculation that it would be spent on anything from swanky en suite accommodation, to architecturally stunning buildings, to golden chairs for vice chancellors. This data suggests, in the main, it hasn’t.

Indeed, the average per-student spend in all but two subject areas is greater than the current Home/EU fee (taking the average, including waivers, of £9,112). Though it’s not there in black and white, the major cross-subsidy still comes from international students.

The canonically expensive courses fare less well under the current model: clinical, hard science, and engineering courses generally run at a loss even with the OfS top-up. To make this calculation, the report adds back in the non-subject-specific activity relating to widening access and participation, with both OfS allocations and spending on bursaries or hardship funds added back in. Even this likely understates the gap between funding and the cost of provision, as the average fee income per FTE used as an approximation here is greater than the average fee income actually received per FTE once waivers and mid-year dropouts are taken into account.

The KPMG costing study

TRAC(T) clearly leaves us with a lot of unanswered questions – so a parallel KPMG report uses a linked but distinct methodology to get even deeper under the bonnet of the cost of undergraduate provision. Here, 40 English HE providers agreed to participate (actually 41, but one didn’t provide good enough data) in an experimental data collection exercise for 2016-17 that took TRAC as a starting point. The data covers 38 per cent of all possible student FTEs. KPMG has been careful not to name individual HEIs, so in many cases the data presented is not everything that was collected.

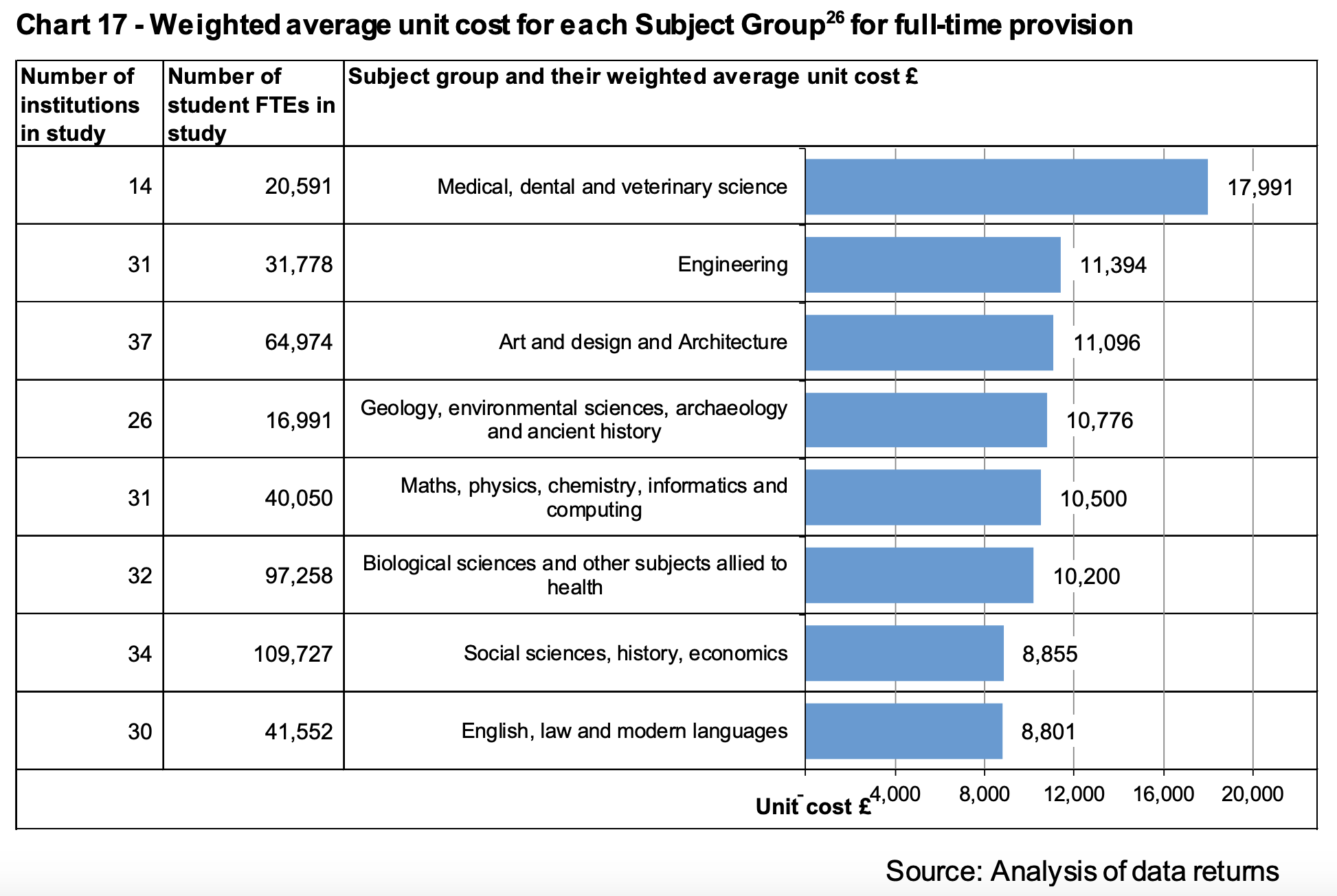

The weighted average costs here differ slightly from the raw TRAC(T) numbers presented by OfS – social studies, languages, English, and Law now slightly undershoot the average fee income per FTE. Splitting these averages down by cost category does not tell us much – fee income, as expected, covers course delivery staff costs, student related central services (admissions, or libraries for instance), departmental running costs (managing things like examinations and assessment processes) and estates costs (the equipment and spaces that allow teaching to happen) as the main constituent parts.

Chart 17 (p80) highlights the difference by subject area for full-time undergraduate provision, and Chart 18 (p88) breaks it down in more detail. You’ll struggle to find any subject with an average delivery cost of less than £8,000 – economics comes closest at £8,021. This suggests a few providers, at least, may be able to get the costs down to Augar’s magic air-plucked number – but it is hardly a resounding condemnation of current teaching costs.
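The gap between these delivery costs and the proposed £7,500 fee is straightforward arithmetic. The sketch below uses only figures quoted in this piece (£7,500, economics at £8,021, and the £9,112 average fee after waivers); the helper names are illustrative.

```python
PROPOSED_FEE = 7_500      # Augar's proposed fee level, £ per FTE
AVERAGE_FEE = 9_112       # average Home/EU fee income after waivers, £ per FTE
ECONOMICS_COST = 8_021    # lowest subject average delivery cost in the KPMG study, £ per FTE


def funding_gap(cost_per_fte: int, fee_per_fte: int) -> int:
    """Cost minus fee: positive means a shortfall, negative means headroom."""
    return cost_per_fte - fee_per_fte


def gap_as_share_of_cost(cost_per_fte: int, fee_per_fte: int) -> float:
    """Shortfall expressed as a percentage of the delivery cost."""
    return 100.0 * funding_gap(cost_per_fte, fee_per_fte) / cost_per_fte


print(funding_gap(ECONOMICS_COST, PROPOSED_FEE))                      # 521
print(f"{gap_as_share_of_cost(ECONOMICS_COST, PROPOSED_FEE):.1f}%")   # 6.5%
print(funding_gap(ECONOMICS_COST, AVERAGE_FEE))                       # -1091
```

Even the cheapest subject in the study would need to find about £521 per FTE, roughly 6.5% of its average cost, to break even at £7,500.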

What is interesting is the way these proportions vary according to how a provider is organised. As with TRAC(T), we look at “peer groups”, and find that less research-intensive institutions have larger direct staff costs and smaller departmental costs. The report hints that less research activity, and therefore less research income, means that staff in these providers spend more time teaching (and, I suspect, administrating).

So the differences look to be about accounting decisions and allocations across research and teaching rather than any fundamental structural differences. Very small providers tended to do more at a departmental level and less at a central level, but this is the only finding in the other direction. Among mainstream providers, smaller institutions (and, from my experience, former polytechnics) tend to control spending more centrally.

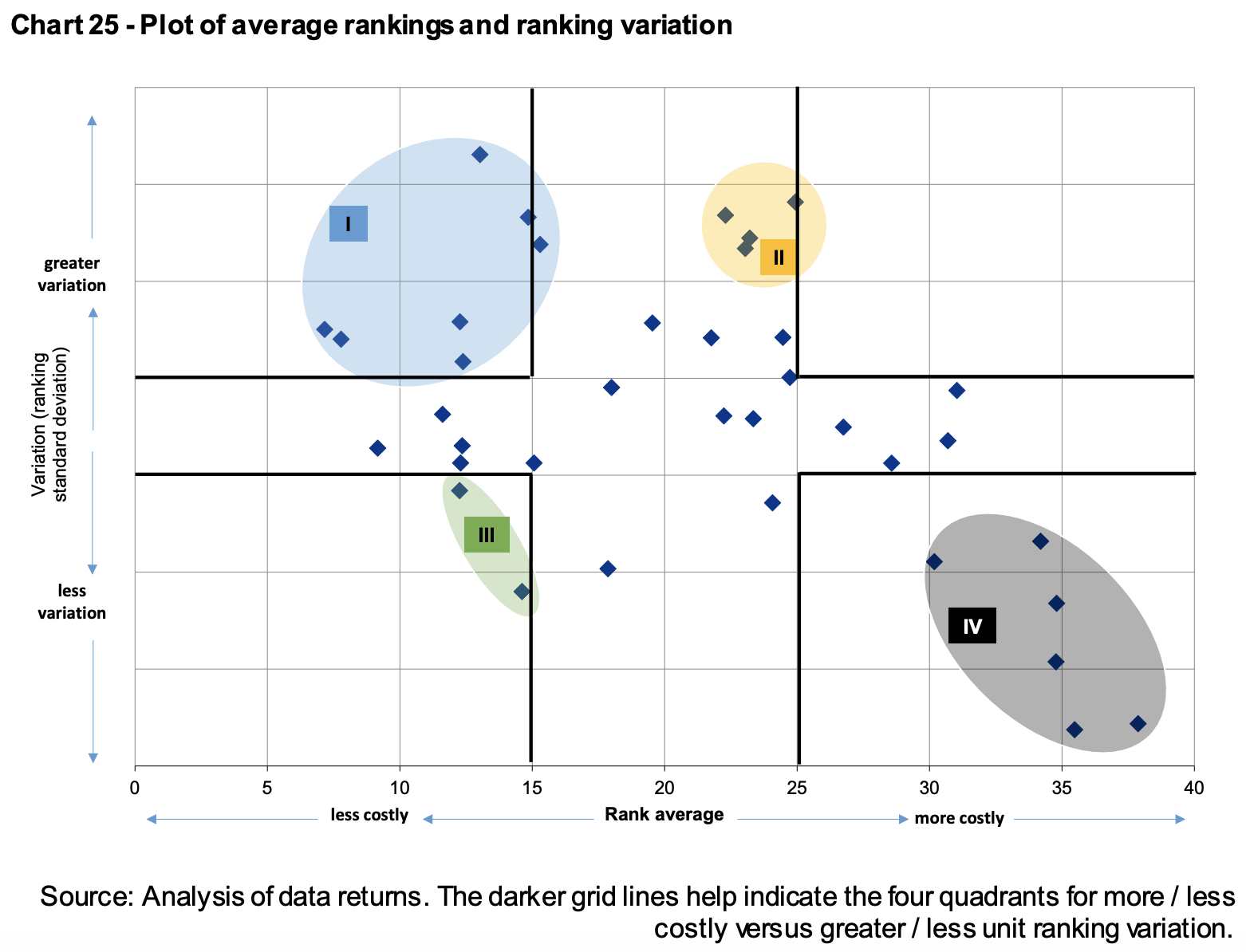

Chart 25 is one to give finance managers palpitations. Some groups of institutions cost more than others, and some show greater variation between subjects than others. Those with greater costs overall and less variation between subjects tended to be in London and less specialised. Those with greater costs (though by some way not the most costly) and more variation between subjects were four institutions with very little in common other than a staff-student ratio between 15:1 and 30:1 and a location in the south.

Overall, smaller (correlation +0.98) and more specialist (+0.86) institutions, those with a low staff-student ratio, and those in London tended to have a higher unit cost. There’s no conclusive evidence of any link to non-continuation rates or to the size of a provider’s estate. Institutions cite inflation as the primary factor contributing to increasing costs since 2016-17.

The KPMG summary puts the situation rather well: “we found that institutions are diverse and complex which leads to a variation of costs of teaching within subject groups. Institutions reported increasing challenges to maintaining financial stability due to volatile student demand, balanced against a desire to invest in staff to lower the student figure in the staff to student ratio, IT, and infrastructure, which are seen as enabling increased quality and attractiveness of the institution to students.”

There’s not a smoking gun here on systemic overspending, and even the limitations of the study (time pressures meant responses were not audited or verified, and limited contextual information was gathered) do not point to systemic gaming. Though this is far from perfect data, it is suitable for the kind of broad-brush, indicative conclusions reached here.

The panel responds

The independent panel base many of the conclusions they draw from this report on the average margin for sustainability and investment (MSI) of 10 per cent. This component of full economic costing covers investment in the maintenance of assets, additional support for students, and accounting for risk, development, and finance costs. The principle of this calculation was accepted as a core component of TRAC when it was introduced, and the current approach is based on Ministry of Defence guidance for full economic costing. The panel contest this for no other reason than their own gut feeling that it looks a bit high.

The other strand of the argument draws on that TRAC(T) ranking, which shows spending rising to absorb the available money, with some subject cost centres rising at substantially higher rates than others. The panel accept that

HEIs are indeed spending their additional income for these subjects in order to demonstrate value for the higher fee level charged, or the allocation of costs to different subjects in the TRAC data is relatively loose

but don’t see either explanation as satisfactory.

So if TRAC(T) doesn’t show a loose allocation of costs to subject areas (it does), and institutions are not spending this extra teaching income on better teaching (as it appears they are), how do they explain where the money is going? I emphasise that two government standards-based reports suggest providers are running at a loss both on undergraduates and on all students.

The panel argues that because there is a wide variation within subject areas, and central costs, between institutions (there is, for the structural and regional issues described above) there must be scope for “best practice” efficiency savings. Maybe they are suggesting that the Royal College of Music should teach more chemistry courses, or that King’s College London should base itself in Hartlepool? There’s an expectation that “this assessment will be contested within the sector”.

This analysis, although based on great research, is thin stuff on which to base a fee cut. By the time we get to the “case for change”, the concerns about efficiency and the MSI have transmuted into the “fact” that some subjects are overfunded. This is coupled with the observation that low-paid careers (say nursing, teaching, social work, the arts) mean that students in some subjects will be unlikely to repay their loans in full. Out of this swirl of data comes the assertion that the country is subsidising some subjects of study to the detriment of others.

In passing, I note that the subsidy is to employers who are employing graduates on low wages, not to students who would be delighted to earn a fair wage for the job they love, or to institutions who – post-2012 – follow student demand. The fact that many of these employers are in the public sector I leave to one side.

I’ve long argued for more strategic use of HE funding – right back during the Browne review I was calling for the OfS to make public good investments into subjects of interest for economic, social, or capacity needs. Augar calls on the OfS to review funding rates in more detail than the two overviews we currently have, while also taking account of economic and social value. This kind of feels like the sort of thing that should be done before rather than after decisions are made about funding levels.

The shift to £7,500 does give the government more space to make strategic choices about subjects, with an envelope of roughly £3,000m. But the idea that some subjects should receive no more than the £7,500 fee income suggests a certain amount of naivety about precisely where £1,500 (on average) of external income is going to come from. There are probably some efficiency savings available through the adoption of best practice (though government reports always overestimate these), and the idea that institutions should ignore MSI and assume that the OfS will bail them out of financial trouble is unwise, to say the very least.

For a report praised for its focus on detail, the central plank of the reforms feels like post-hoc justification for a political decision. There is no data suggesting that most courses can be delivered at the current levels of quality on £7,500; unfortunately, it is now the job of the OfS to go and find some.

Meanwhile, HEIs continue with no inflationary income increases to combat cost inflation, leading to more “efficiencies” (which many would say have dried up), and with no end in sight to what could become a decade of gradual austerity.

Is it not really rather simple?

In the R-led Us the vast profit on International Student UGs subs R where R fails miserably to recover its overhead costs on R grants/contracts – hence the growth of such student numbers. That subsidy is added to if the U can also turn a profit on its trading operations. With respect to UK/EU T income, for political reasons we have long shown it as all spent on T – but in practice there is a hidden indirect subsidy to R by way of academic staff time funded from T that is ‘stolen’ and transferred to R. While R is the key factor in the metrics that drive the PIs and hence brand-value for a U (which in turn dictates the ability to recruit Intl St fee-payers) and also while R is the sole element that drives career success for an academic, this state of affairs is not going to change – even if a few R Us with a Bronze in the TEF are being shamed into addressing to some degree their neglect of T while chasing the kudos of R. And this distortion is added to where a R-Uni has borrowed heavily to spend on more buildings into which to shove yet more under-funded R activity, leaving the T income to cover a bigger gap on FEC and also now the interest charges on the bond debt – while probably the buildings are over-cost!

As for the R-lite Us, they at least in the main have not indulged in a debt binge; nor are they subbing massive R activity – not least since they generally can’t recruit as many Intl St fee-payers. Whether their UGs get more T because their academics do less R is unknown, but perhaps they do? – and need it, deserve it?

All in all, it is a hell of an indictment of the HE industry that we have not really got a clue about what it costs to deliver effective UG T as our core activity – and still less of a clue as to how to achieve any productivity gains (last seen in HE when Gutenberg invented printing 500 years or so ago) via an increased understanding of how the brain learns and of how to begin to measure learning-gain, apply AI teaching, etc. Time for EasyU to enter the marketplace? One hopes that other areas on which the taxpayer funds huge amounts of GDP such as schools and hospitals go about their business more efficiently…

I comment in a purely personal capacity and am not in any way representing OfS views as a Board Member.

Can Wonkhe invite David to write this comment up as a full article?

It is always possible to analyse the costs of T and R, but it may not always be meaningful, since T and R are a classic example of joint outputs achieved from a shared set of resources. Those with long memories may recall arguments about the ‘unit of resource’ in the 1980s. Christopher Ball and John Bevan at the National Advisory Body for Local Authority/Public Sector HE ran an effective campaign for more money, which embarrassed the universities and the UGC under Peter Swinnerton-Dyer. The UGC of course argued that their unit of resource was much higher because they also did research, which led more or less directly to the (catastrophically misguided but politically convenient, then and now) splitting of funding for T and R. Politics dictated that the universities’ unit of resource for T should turn out to be just a bit lower than the polytechnics’ unit of resource, and by default this fixed the size of the pot for R (= total UGC funding minus T). Although TRAC has the merit of consistency and completeness, it was ultimately erected on these shaky foundations, and like all cost analyses it involves many debatable choices about how to apportion things, even if some of the choices are buried deep and long forgotten. Contrary to David P’s Gutenberg quip, there were significant ‘efficiency gains’ in the 1980s as the polytechnics responded enthusiastically to rising demand by expanding rapidly at much less than average cost, while the universities for a long time refused to expand at the same rate. Bevan and Ball managed to disguise the equivalent embarrassment of large differences in the unit of resource between polytechnics and colleges by inventing a ‘sub-quantum approach’ allowing much higher units of resource for the former than the latter.

The question is always: who wants to know the cost, and why? David K suggests Augar wanted to know the cost of teaching because he wanted to reduce the sticker price, as the Americans would say. It’s hard to disagree.