“Consumer crackdown on ‘Mickey Mouse’ courses by showing future prospects”

Excited Daily Mail story on ‘Mickey Mouse’ courses:

Degree courses will be rated for teaching quality, salary prospects, tuition time and value for money under plans to unleash ‘consumer power’ on universities.

Poor quality ‘Mickey Mouse’ courses will be exposed on a website – similar to those used to select car insurance or electricity – allowing potential students to compare them.

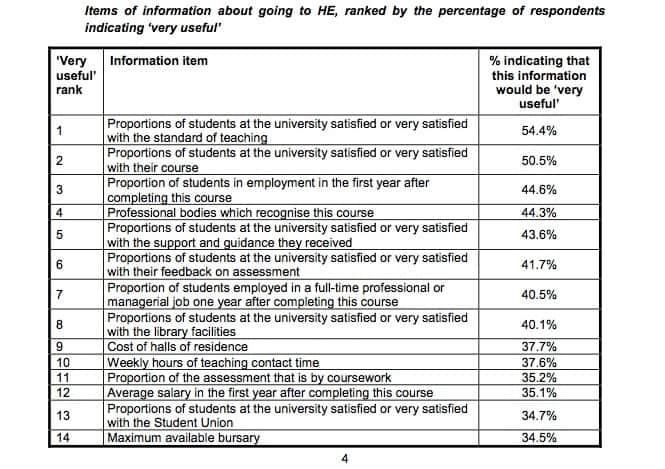

The 16 statistics students most want to know about courses before making their applications were revealed in a report published yesterday by England’s higher education funding quango.

They include the proportion of graduates employed in professional or managerial jobs, their average salary, the quality of teaching on the course, weekly hours of teaching time and the quality of library and IT facilities.

All measures should be published ‘as a minimum’ for each degree course in the country in a web-based format that will allow comparisons, the report said.

A range of very different courses is helpfully compared:

Presumably the Mail expects that some of these courses would disappear if potential students were aware of this data.

The report in question, 'Understanding the information needs of users of public information about higher education' (a report to HEFCE by Oakleigh Consulting and Staffordshire University), is available from HEFCE and is somewhat more sober than the Mail article would suggest.

It lists the top items of information potential students would wish to know about a university or course:

(The final two not listed above are the ‘Proportions of students at the university satisfied or very satisfied with the IT facilities’ and the ‘Maximum household income for eligibility for a bursary’.)

Essentially, it is argued that this data needs to be published on a consistent basis for every institution and course, and that this will help inform decision-making. But all of the information is available at present, in one way or another, albeit not always in the most accessible form. And it seems, according to the HEFCE report, that prospective students, whilst they would like to have the data, simply aren’t prepared to look for it:

Less than half the sample had tried to look for 11 out of the 16 most highly ranked items. This is partly explained by participants’ estimate of the usefulness of the information. Those who rated the information ‘very useful’ were much more likely to look for it. However, a surprisingly large proportion (between a quarter and a half) of participants who rated items ‘very useful’ reported that they had not tried to find the information. A maximum of two-thirds of these reported that they had tried to look for information on student satisfaction and employability data. One possible explanation is that prospective students were unaware that these data might be accessible.

Another possible explanation is that the demand for information, and the need for a ‘consumer crackdown’, are somewhat overstated.

There’s an interesting article (also about “Mickey Mouse degrees”) here:

http://www.telegraph.co.uk/education/universityeducation/7981792/Quango-opposes-crackdown-on-Mickey-Mouse-degrees.html