One of the most pressing challenges facing the UK higher education sector is how it responds to the increasing prevalence of mental health conditions amongst students.

Both official statistics and student surveys show often dramatic increases in the incidence of mental health conditions. Anxieties have been raised further by incidents of student suicide. Whilst these remain low and relatively stable compared to previous decades (Office for National Statistics, 2018), each incident is one too many.

A range of organizations have conducted research and produced practical guidance (Student Minds, UUK, Institute for Public Policy Research). However, significant challenges remain, particularly around resourcing student support at the start of a crisis, or at other times where it can be most effective. Finding the balance between supporting and ‘infantilizing’ students is also a real consideration.

Setting aside arguments around the appropriateness of proactively seeking to support students, this piece explores the question of whether or not existing learning analytics resources (perhaps with some modification) could be used to identify students most in need of mental health support at a time that is most likely to lead to successful outcomes.

Learning Analytics

Nottingham Trent University has embedded learning analytics into institutional practice to help students manage their own success, to help staff support them, to improve student/staff working relationships and to improve institutional insights into the student experience.

The Dashboard generates daily engagement ratings based only on a student’s learning interactions with university resources, such as the library or online tools, avoiding more contentious areas such as socio-economic background. In 2016/17 (the year used for this analysis) the engagement ratings were ‘Low’, ‘Partial’, ‘Good’, and ‘High’.

Engagement data is generated and displayed to both students and relevant university staff, alongside other contextual information, using Solutionpath’s StREAM tool. If a student has no engagement for 14 days the Dashboard sends tutors an email asking them to make contact with the student. As might be expected, there is a strong correlation between overall patterns of engagement and student success. Students with high average engagement are far more likely to progress and achieve higher grades than more lowly engaged peers, and the ‘no engagement’ alerts are a strong early indicator that a student is at risk of non-favourable outcomes.
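The 14-day 'no engagement' rule described above can be sketched in a few lines of Python. This is only an illustration, not the Dashboard's or StREAM's actual implementation: the function names, the `last_seen` mapping and the choice to treat "no engagement for 14 days" as "14 or more days since the last recorded interaction" are all assumptions made for the example.

```python
from datetime import date

# Threshold taken from the article; everything else here is hypothetical.
ALERT_AFTER_DAYS = 14


def needs_no_engagement_alert(last_engagement: date, today: date,
                              threshold_days: int = ALERT_AFTER_DAYS) -> bool:
    """Return True if the gap since the last recorded interaction
    is at least `threshold_days` (assumed interpretation of the rule)."""
    return (today - last_engagement).days >= threshold_days


def students_to_flag(last_seen: dict[str, date], today: date) -> list[str]:
    """Return the sorted IDs of students whose tutors would be emailed."""
    return sorted(
        student_id
        for student_id, last_engagement in last_seen.items()
        if needs_no_engagement_alert(last_engagement, today)
    )
```

In a sketch like this, a student last seen on 1 March would be flagged on 15 March, while one last seen on 10 March would not. How the real tool counts days, or handles vacations and reading weeks, is not described in the article.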

Daily data can act as an early warning based on either low engagement or unexpected changes in engagement behaviour. Further to supporting the needs of the whole student population, we posit there is particular potential to provide support to students with mental health conditions at the point when a mental health incident may be starting.

Can ‘engagement’ act as a proxy?

At NTU, the majority of students with mental health conditions progress from the first to second year. For example, in 2016/17, 82% of NTU first years with mental health conditions progressed compared with 84% of first year students with no reported disabilities. However, there are differences in engagement patterns between the two groups from the very start of the year. For example,

- In the first term, a lower proportion of students with reported mental health conditions had ‘Good’ or ‘High’ engagement than their peers with no reported disability (63% and 74% of students respectively)

- A higher proportion of first year students with reported mental health conditions generated 14-day no-engagement alerts than their peers with no reported disability (7% and 4% of students respectively)

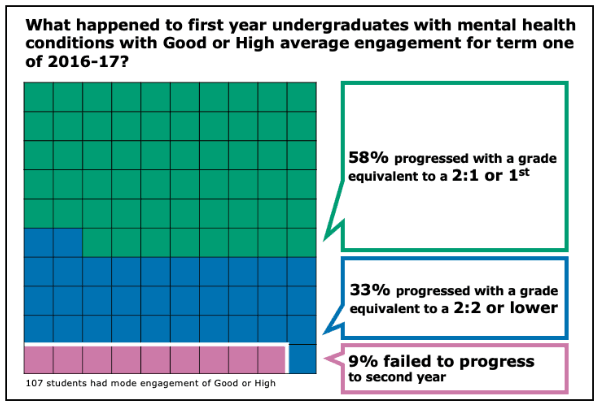

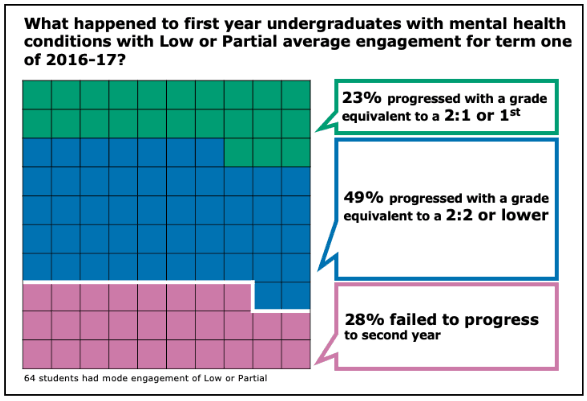

The data demonstrated that students with mental health conditions are less likely to be highly engaged with their studies. This is important because engagement is such a strong predictor of the likelihood of success:

- First years with mental health conditions and ‘Good’/‘High’ average engagement = 91% progression

- First years with mental health conditions and ‘Low’/‘Partial’ average engagement = 72% progression

This 19 percentage point difference dwarfs the 2 percentage point progression gap between students with mental health conditions and their peers with no disabilities. Importantly, engagement data has the potential to target support to students who are most in need during a particular period of time, and to do so more effectively than using solely group characteristics.
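The two gaps compared above are differences between percentages, so they are best read as percentage points. A minimal sketch of the arithmetic, using only the progression rates quoted in this piece (the dictionary keys and the function name are illustrative labels, not terms from the Dashboard):

```python
# Progression rates (%) for 2016/17 first years, as quoted in the article.
progression = {
    "mh_good_or_high_engagement": 91,
    "mh_low_or_partial_engagement": 72,
    "mh_all": 82,
    "no_reported_disability_all": 84,
}


def percentage_point_gap(higher: int, lower: int) -> int:
    """Difference between two percentages, expressed in percentage points."""
    return higher - lower


engagement_gap = percentage_point_gap(
    progression["mh_good_or_high_engagement"],
    progression["mh_low_or_partial_engagement"],
)
group_gap = percentage_point_gap(
    progression["no_reported_disability_all"],
    progression["mh_all"],
)
```

Here `engagement_gap` is the gap between engagement bands within the group of students with mental health conditions, and `group_gap` is the gap between that group as a whole and students with no reported disability.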

Insights to act on

Institutions need to develop strategies for supporting students with mental health conditions at the point where they need it most. A range of tools already exists based on real world interaction, including tutor relationships with students and campaigns encouraging students to look out for their friends. Nonetheless, learning analytics gives a different set of insights to be used alongside existing options to spot students with mental health conditions at a point where they most need help.

Average engagement is perhaps most useful to provide contextual information to support tutors’ observations or improve the quality of a tutorial conversation. The ‘no engagement’ alerts appear to work as an effective early warning system at the point when a student with mental health conditions may be in most need of support. Clearly, challenges remain about the most effective way of supporting those students, but we feel there is potential to be explored.

However, not all students with good engagement and attainment achieve this without affecting their mental health. More still needs to be done to identify over-engaged students who are working all hours just to scrape a pass, and those who are achieving but pushing too hard. Unfortunately, maladjusted perfectionism is correlated with student suicides, so we must do more to identify this group early on as well.

What is the comparison data for students without a declared mental health condition? Is there a difference within each average engagement band between those with and without declared mental health conditions?

Yes, that is true – it is some years ago now, but when I finally admitted I was struggling to catch up after a lecturer had been off sick and the timetable was disrupted, my personal tutor’s response was, “Well, you’re getting good marks in the coursework, aren’t you?” Fortunately, the lesson I learned from that was to stop trying too hard so the stress decreased and I was OK – my marks dropped but I still did ‘well enough’. I can imagine I wouldn’t have been the only one in that sort of situation…

Thanks for the article. What triggers the flag being raised on whether or not a student has a reported mental health issue (e.g. discussion with University counselling service?), and does the flag stay switched on until the student reports that they are feeling better?

I’d also be interested in the answer to Huw’s question – is the trigger formal engagement with student disability services (for example) or are more informal reports such as conversations with a tutor or student support officer captured?

How are students informed of the learning analytics data being gathered, and are you able to interrogate the system to explain why someone has been characterised as having good / high / partial engagement? This seems necessary to allow the student to really understand what is leading to their ‘rating’ and what actions, for them as an individual, may be required. There will presumably be cases of ‘partial’ attendance that don’t actually need action – due to a student’s particular circumstances / studying habits.

Thanks for the reply

I agree that there are risks of unnecessarily stressing students through our learning, teaching and assessment strategies and communications. However, there are students with mental health conditions with all types of engagement, not just those working unnecessarily hard. Our work started from the perspective of the group with the greatest risk of dropping out, and that group is students with the lowest engagement. Furthermore, low engaged students are also the group who appear least likely to access our services and seek help from student support services and other professionals.

This was a relatively short blog piece about whether or not LA could play a role by providing timely information for staff. I did try to stress in the conclusion that this is only one of a range of tools and strategies for institutions to consider. I hope I was clear, this is intended to inform staff/ student interactions, not act as an independent alternative.

At present, the tool cannot distinguish between a student who is highly engaged and stressed and one who is highly engaged and not stressed. Part of the purpose of the tool is to provide tutors with contextual information for personal tutorial discussions. There is, of course, more work to be done in this field, but we encourage staff to discuss engagement with students in the context of coursework and feedback. I’d argue that a student with very high engagement and lots of failed coursework would benefit from a different tutorial conversation to one with low engagement and lots of fails. The Dashboard provides tutors with that context.

We’ll continue working in this area, debating approaches and hopefully coming up with solutions that students and staff will find useful.

Best wishes

Ah – sorry – I didn’t realise this would appear at the end of the queue.

So thanks Sam

I’ll start on the other replies next and will obviously reply by name

Ed

Hello Mick

The outcomes based on engagement bands are very similar for those with and without reported mental health conditions. The comparison data for the infographics are as follows:

Students with no reported disability and good or high average engagement in term one:

– 11% failed to progress

– 36% progressed with a grade equivalent to a 2:2 or lower

– 53% progressed with a grade equivalent to a 2:1 or first

Students with no reported disability and low or partial average engagement in term one:

– 27% failed to progress

– 48% progressed with a grade equivalent to a 2:2 or lower

– 25% progressed with a grade equivalent to a 2:1 or first

The biggest difference is therefore in the proportion of students with good or high engagement who progress with a 2:1 or first; 58% of students with reported mental health conditions compared with 53% of students with no reported mental health conditions. I don’t feel that I can confidently explain this difference: it may be just that the sample sizes are very different, or due to factors such as the excellent support from Student Support Services (okay, we’ll go with the excellent support …).

Hello Sasha

Thanks for the insight. Learning analytics predictions by definition work well when we are looking at big data sets. The real challenge though is how we empower tutors to support individual students using such high level data. I think that this will remain a significant challenge due to pressures of time, approaches to communication etc., but we’ll keep plugging away at it

Best wishes

Ed

Hello Huw & Kate

For this analysis, the flag was based on whether or not a student had reported having a mental health condition in their self-declared disability data upon entry. This is stored in the University’s student records system. It is therefore an absolute benchmark: we won’t have picked up students who expressed different levels of mental ill health to their tutors. It’s also likely that there will be a small number of first year students in the ‘no disabilities’ group who will have been encountering some form of mental ill health.

We inform students about the Dashboard in our prospectus, in pre-entry material, during induction and online; for the first time this year, our student mentors will also formally play a role. Probably the most comprehensive student-facing page is https://www.ntu.ac.uk/c/censce/opportunities-for-students/dashboard

The data used to calculate engagement is clearly presented to students within the tool; each individual resource usage is plotted graphically and presented in a table, so students know what data sources we use and can clearly see the association between activity and engagement. The algorithm itself is not detailed to students, for a number of reasons:

· Students learn best in different ways; students should focus on how they learn and engage best in real life. We do not want to be overly prescriptive about how students should engage with their studies (for example, suggesting that students ought to read 4 more books).

· We feel that students are more likely to ‘game’ the system to appear more engaged if detailed information about the algorithm is provided. The goal is for students to be more engaged rather than appear more engaged, and we don’t want to encourage students to spend time on such pursuits.

Staff and students are encouraged to take a considered approach to the Dashboard and to appreciate its limitations. For example, the following is an extract from the Dashboard FAQ section:

“Engagement has been proven to be a good indicator of success. The higher a student’s average engagement rating the more likely they are to progress to the next year, or graduate with a higher degree classification. That said, the association between engagement and success will never be perfect, it is a useful guide only. There are factors that the Dashboard cannot measure such as buying text books and studying at home. It should not be used to predict an individual student’s success, however it can still provide a good indication of how a student is doing and can be used as a platform for conversation.”

Both staff and student users are also encouraged to use context-specific knowledge: for example, if there has been a reading week, engagement is likely to be lower.

As you say, there will be lots of students with partial engagement who don’t need action taking. I think that what we were trying to show in the post is that average engagement is a useful snapshot about the student, but that the ‘no engagement’ alert is probably a better trigger for immediate action. Unless a tutor knows the specific reason that a student has had no engagement for the last 14 days, we’d always advise them to make contact with the student, irrespective of what their normal engagement looks like.

Best wishes

Thanks Ed, that’s really interesting!