Many of us enjoy developing novel data-derived perspectives on universities (for instance in popular podcast segments), but I would never have counted Julia Buckingham among our number.

The Chair of Universities UK suggested a number of “alternative” ways to measure the value of a university degree beyond the current governmental favourite of LEO salary data. The idea of other benefit mechanisms is usually relegated to the bottom of DfE press releases, where the minister of the moment offers a quote along the lines of HE bringing happiness, health, love, and/or laughter.

All good stuff, but not the kind of measurable benefits that drive policy and league table perspectives. There have been some attempts (notably the UK Progressive University Ranking – UKPUR – which focuses on leadership and diversity) to reliably rank universities using alternative priorities – but I’ve not seen one focused on alternative outcomes-based measures.

Unmeasurable metrics

Buckingham’s speech offers a few suggested metrics. Unfortunately, some of these are not suitable provider-level discriminators:

- What proportion of graduates from a course work in essential public services such as the NHS and in teaching – and how many do we need going forward?

- How likely are they to work at the cutting edge of technology and innovation?

- How likely are graduates to enrich the culture of the UK through their occupations?

- How many will take action on environmental and societal challenges and make a difference?

I’d argue all of these are subject mix measures, not outcomes measures. Graduates in social work, nursing, or medicine are more likely to enter the public sector. IT and engineering graduates are more likely to have careers focused on technology and innovation. Environmental scientists are more likely to take action with a positive impact on the environment. And for the non-subject exceptions, there is little reliable data on an institutional (much less an institution and subject) level – DLHE and Graduate Outcomes are survey instruments and the historic reluctance to release provider data on employment sector questions suggests a lack of confidence in data quality.

These aren’t exclusive domains, of course, but the other problem with likelihood measures is – how would you tell? A person’s career and achievements only really make sense to analyse as they get older. Imagine a law graduate who spends 15 years in commercial law and then leaves to found a charity. Or a classics graduate who eventually (imagine!) becomes Energy Minister.

There’s no reliable measure of eventual graduate potential for impact – and unless you get undergrads to write pathways to impact statements about their career choices there never will be. And this predictive fallacy is one of many reasons why salary measures aren’t a great choice either.

Data intensifies

But on to the suggestions we can work with. There are essentially four metrics here. Because of the variability in what and who this data measures, I’m not going to attempt to match cohorts, and I’m going to take single-year (latest relevant) samples.

First up:

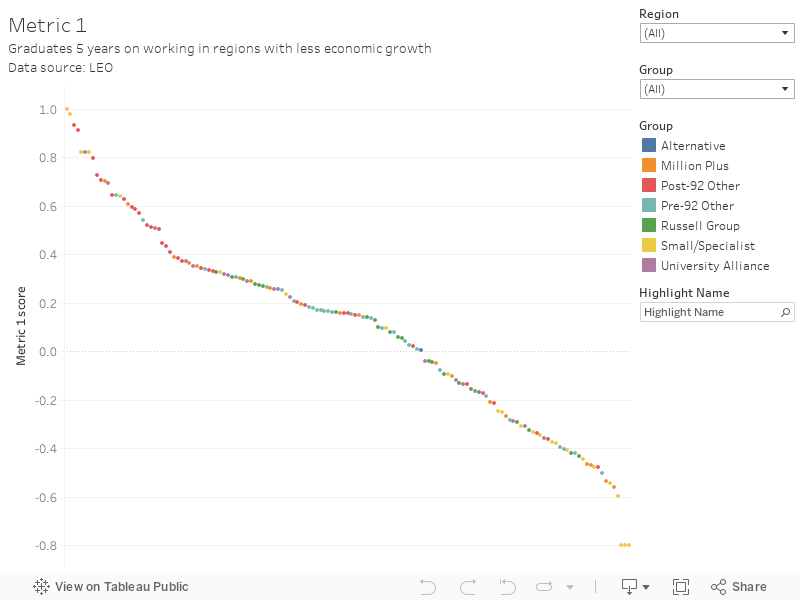

Metric 1: How many graduates alleviate skills shortages by working in regions with relatively lower growth?

I’m going to take the source data from the most recent LEO release, which examines provider and region only. As I said at the time, there are problems with this data (not least the absence of a split by sex), but because I’m looking at rounded graduate numbers rather than salary levels this becomes less important. The other data source is the 2017 ONS Gross Value Added (GVA) per head data for each region. I’m giving no credit for graduates working in the provider’s own region, and I’m excluding those not working in the UK.

What this gives me is a measure of the likelihood of graduates to move to and work in a region with a lower than average rate of economic growth.

Methodology in detail: I’ve taken the inverted percentage point distance from the 2017 UK average gross value added for each region (NUTS1 geographic regions – I’m not trying to say Wales is a region, Emma!) and for each institution multiplied the percentage of graduates in each region in 2017 five years after graduation (LEO) by this distance. The exceptions are the region of the provider – which I’ve counted as simply the national average – and graduates abroad, or with missing data, who are excluded from the calculation. This leaves me with a single figure ranking. (You could argue that I should be using time-relevant GVA figures, and that I should allocate at least something for students in the provider’s own region; for reasons of speed and fairness I have chosen not to.)
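For anyone wanting to try something similar, the calculation described above could be sketched in Python roughly as follows. This is an illustration under stated assumptions, not the code behind the chart: the GVA index values are made up (UK average = 100), the function and variable names are hypothetical, the per-region products are assumed to be summed into the single figure, and graduate percentages are assumed not to be re-based after exclusions.

```python
from typing import Dict

# Hypothetical GVA-per-head index values (UK average = 100). NOT real ONS figures.
GVA_INDEX: Dict[str, float] = {
    "London": 170.0,
    "South East": 108.0,
    "North East": 75.0,
    "Wales": 73.0,
}

# Destinations left out of the calculation entirely (labels are assumptions).
EXCLUDED = {"Abroad", "Unknown"}


def region_weight(region: str, home_region: str, gva_index: Dict[str, float]) -> float:
    """Inverted percentage point distance from the UK average.

    Regions below the UK average get a positive weight. The provider's own
    region is treated as the national average, so it contributes nothing.
    """
    if region == home_region:
        return 0.0
    return 100.0 - gva_index[region]


def metric_one(grad_share: Dict[str, float], home_region: str,
               gva_index: Dict[str, float]) -> float:
    """Sum of (percentage of graduates in region) x (region weight).

    grad_share maps each destination to the percentage of the provider's
    graduates working there five years after graduation.
    """
    return sum(
        share * region_weight(region, home_region, gva_index)
        for region, share in grad_share.items()
        if region not in EXCLUDED
    )


# A made-up London provider: half of its graduates stay in London.
example_shares = {
    "London": 50.0,
    "North East": 10.0,
    "Wales": 5.0,
    "South East": 25.0,
    "Abroad": 10.0,
}
example_score = metric_one(example_shares, "London", GVA_INDEX)
```

A provider scores well here by sending graduates to regions below the UK average, and loses points for graduates who move to regions above it. Whether the own-region share should count for something is exactly the judgement call flagged in the methodology.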

Of the four metrics, this is the most satisfying one as I think it comes closest to accurately measuring what is proposed – and it’s a look at the sector as we’ve not seen it before.

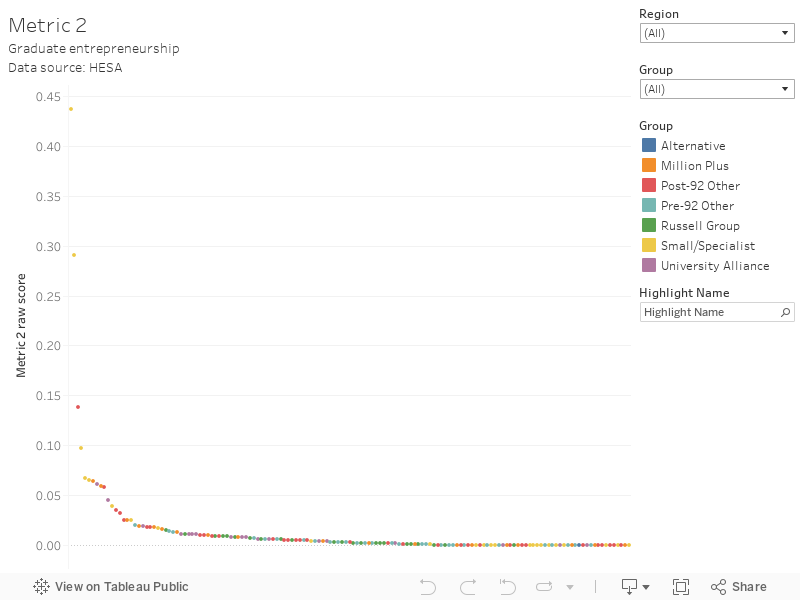

Metric 2: And how likely are they to be entrepreneurial and be business owners?

This is very much a proxy, but I’ve taken HESA HE-BCI data on graduate start-ups from the last available year of data (2017-18) divided by the number of first degree graduates from the same year. The thing to note is that this measure only includes start-ups where there has been some formal support from the provider. This gives me a reasonable measure of likelihood (if more graduates are starting companies with support then probably more are without) but the data is very rough.
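The arithmetic is just a ratio, sketched below in Python. The function name is hypothetical, and expressing the result per 1,000 graduates is a presentational assumption rather than anything taken from the HE-BCI data.

```python
from typing import Optional


def startup_rate(graduate_startups: int, first_degree_graduates: int) -> Optional[float]:
    """Provider-supported graduate start-ups per 1,000 first degree graduates.

    Returns None when there are no graduates to divide by, rather than
    inventing a score for the provider.
    """
    if first_degree_graduates <= 0:
        return None
    return 1000 * graduate_startups / first_degree_graduates
```

Because the numerator only counts start-ups with formal provider support, a low figure may reflect how a provider records support as much as how entrepreneurial its graduates are.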

As an answer to the question, this is far from satisfying. It is possible that future iterations of the Graduate Outcomes survey may give us a version of this information – but Graduate Outcomes is a survey instrument, not population data.

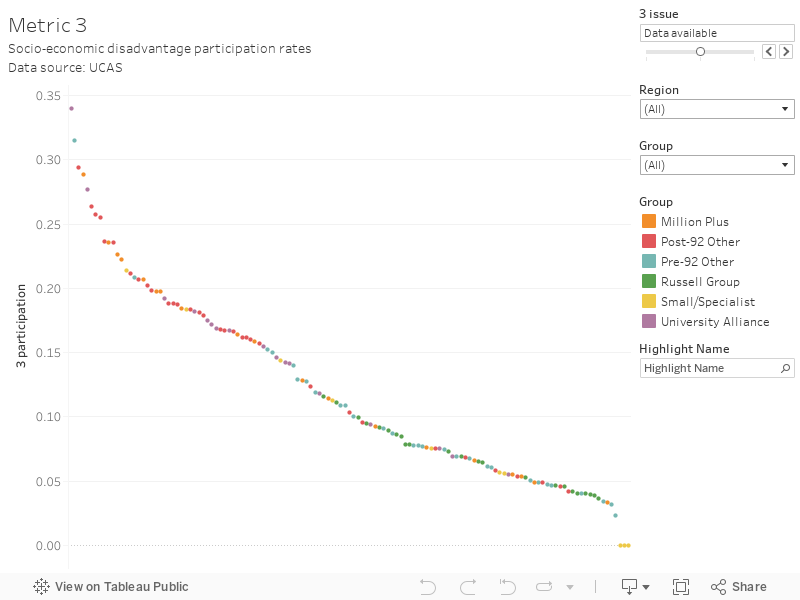

Metric 3: How many are from deprived socio-economic backgrounds?

You’d think this would be an easy question to answer, but if you want to look UK-wide you’d be wrong. For all the noise that UCAS make about their Multiple Equality Measure (MEM), they don’t actually publish intake by MEM quintile by provider. The various xIMDs are not directly comparable, and you’d struggle to find them by provider (they are in TEF for England, but not other nations). So I’m reduced to using POLAR4 data for the most recent year (2019) of UCAS intake. For Scotland in particular this is rubbish, due to the well-known failure to include admissions via Scottish FE colleges. And we don’t get data for many smaller providers across the whole UK.

Seriously – data like this should be easier to come by on a provider level for the UK. If senior sector leaders take nothing else away from this article, they should be clear about the need for UK-wide data on students from deprived socio-economic backgrounds. Many agencies act as if they hold this data – they do not.

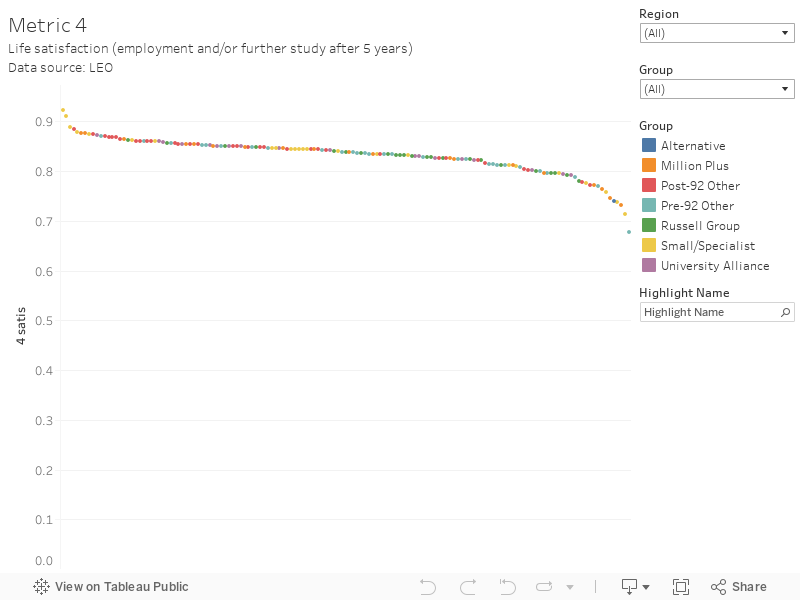

Metric 4: And what wider benefits are there to individuals’ life satisfaction, their contribution to their community and their personal health?

What a question! I’m going to – against my better judgement – use the proportion of those in employment or further study 5 years out from graduation. I can already hear the chorus of disapproval, so let me explain my reasons.

Broadly people are more satisfied when they are doing stuff. This could be studying, or a job – but it could also be a number of other personal projects (anything from volunteering, to full-time parenthood, to choosing early retirement to learn early baroque harpsichord music). Of course, many jobs don’t give this level of healthy satisfaction – writing press releases about the war on free speech on campus springs, unbidden, to mind as a good example – but I am going to assume that the percentage of people thoroughly miserable at work and the percentage that have achieved non-work, non-study, delight cancel out. I know this isn’t great, but it is the best data we have. I’ve used the same 5 years out, 2010/2011 academic year data as for metric 1.

It is difficult to imagine anything other than a proxy measure here. Satisfaction can only realistically be measured by a survey, with all the data quality issues that brings.

Bringing it all together

I’ve calculated deciles for each metric (KEF-style) and summed the four – giving a maximum combined score of 40 and allowing me to do a single measure ranking. And here it is.
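As a rough illustration of the decile-and-sum step, here is a minimal Python sketch. It assumes that a higher raw value is better on every metric, that deciles are assigned by simple rank position (ties broken by sort order), and that only providers with data for all four metrics are scored. The function names are hypothetical, and this is not necessarily how the KEF or the ranking here assigns deciles.

```python
from typing import Dict, List


def decile_scores(values: Dict[str, float]) -> Dict[str, int]:
    """Map each provider to a decile score: 1 for the lowest values, 10 for the highest."""
    ordered = sorted(values, key=values.get)  # provider names, lowest value first
    n = len(ordered)
    return {name: (position * 10) // n + 1 for position, name in enumerate(ordered)}


def combined_score(metrics: List[Dict[str, float]]) -> Dict[str, int]:
    """Sum decile scores across metrics for providers present in every metric."""
    common = set.intersection(*(set(metric) for metric in metrics))
    per_metric = [
        decile_scores({provider: metric[provider] for provider in common})
        for metric in metrics
    ]
    return {provider: sum(scores[provider] for scores in per_metric) for provider in common}
```

With four metrics the combined score runs from 4 to 40. Computing deciles only over providers with complete data is one choice among several; calculating them over everyone and then filtering would give slightly different scores.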

I’m not making any claims as to the usefulness of this new metric – it’s probably worse than any existing alternative. Certainly, for smaller providers not included in the UCAS data it is actively harmful (these are omitted from the final ranking but you can add them in via the 3 issue filter). I think metric 1 is interesting in terms of graduates working in regions with less strong growth, but there is room for refinement – most notably to factor in the overarching economic state of each region.

Ranking, or measurement more generally, is not for the faint-hearted. There will be valid criticisms of UUK’s proposed metrics, and of the way I’ve implemented them using existing data. If we want a new value proposition for higher education outcomes we need to start with rethinking the sector’s data architecture. My purpose in walking you through this process is to show you how many fudges and assumptions get baked into even the noblest attempt at a ranking. If Universities UK and the sector are serious about coming up with an alternate output measure the first step would have to be to commission some new data collection.
