We all know the cycle of student feedback.

Representatives deliver it to staff, staff take actions from those points they deem most important, and the feedback itself isn’t looked at again until the next SSLC, a whole semester later. Why does everything take so long? What about all those comments containing feedback that isn’t within the department’s power to resolve? And how can we ensure that student feedback is not lost in the cycle of committee meetings – but actively sought after? Our student rep team at ARU Students’ Union set about fixing some of these issues.

Our new approach to feedback collection and student data evaluation allows us to work in closer partnership with the university. It helps us to evaluate student feedback systematically, and provide a tailored approach to student representation within our faculties. It strengthens the impact of the student voice, and provides faster, more visible outcomes to students.

We can better empower our elected officers to spearhead student-led change, as they will have a vast amount of student feedback data to reinforce their campaigns. And we can help to solve the problem of student feedback being misconstrued upon its delivery by our student representatives, by evaluating it in a way that works for everyone involved.

Looking at the process

When we looked at the situation, it turned out that academic staff, by focusing solely on the actions in SSLCs, were missing out on a lot of really valuable information. Taking what we had, the rep coordinators decided we had to find a way to make it easier for the faculties to see the wood for the trees. So qualitative analysis themes and deliverable papers were born, giving us a new approach to analysing data from SSLCs.

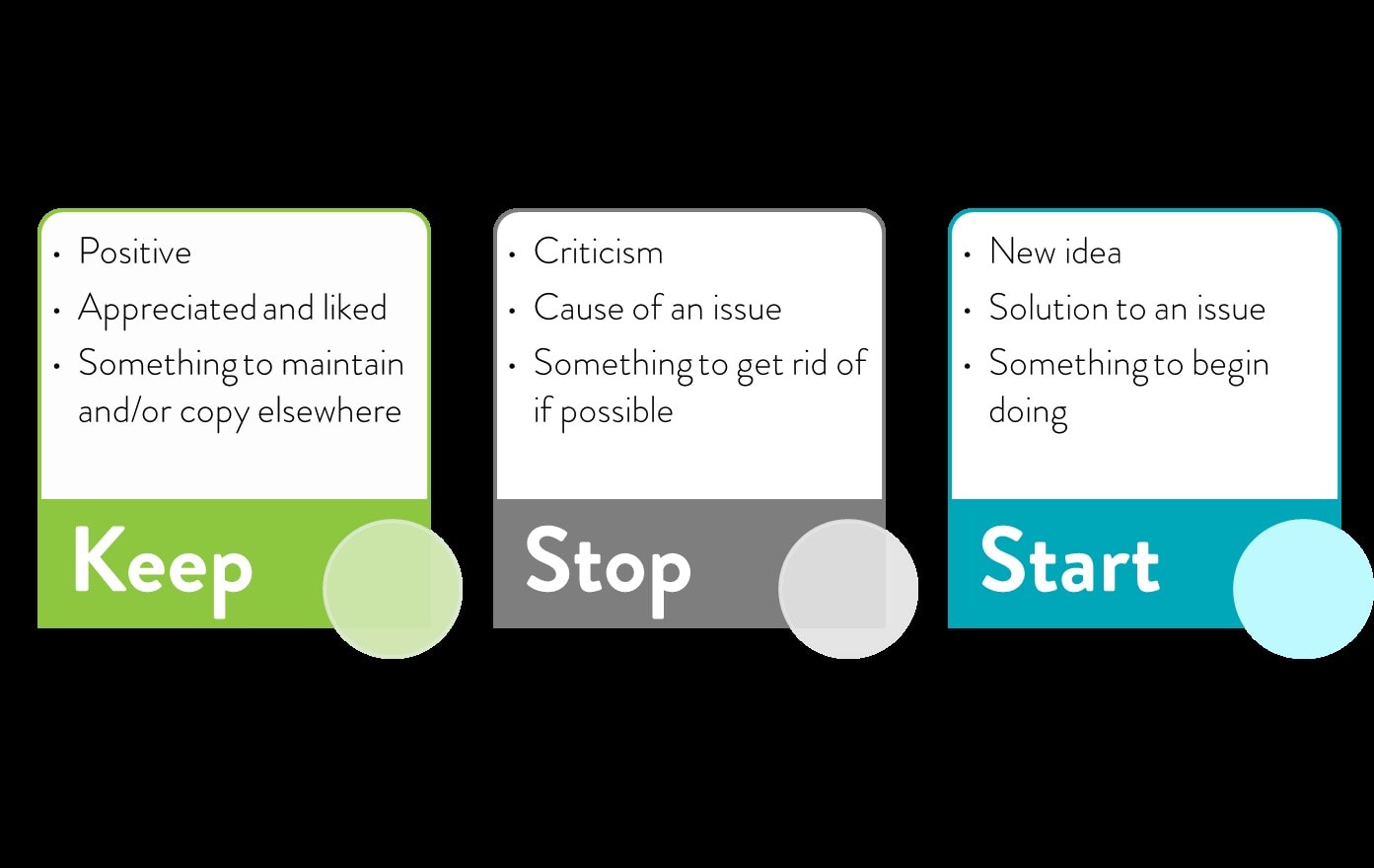

We have always encouraged our reps to arrange their comments into the categories of Keep, Stop, and Start, based on what the feedback had to say about the student experience – was it something students liked? Was it something they wanted to get rid of? Was it a new idea entirely?

Once the student reps had delivered their feedback under these basic themes, the rep coordinators were able to distil it into more specific subjects and sub-themes, making it easier to create our deliverable papers for the faculty to use.

These papers are based on over 1,500 comments made by student representatives in their SSLC meetings – not just the comments that could be actioned and dealt with immediately, but even the smaller and more mundane issues which, despite their nature, can make a big difference to the student experience when they recur or apply across the wider student body. Even administrative problems, like the lack of invites to SSLCs or the timing of the meetings themselves, were analysed, in order to point out how these could be improved to provide a better quality of student engagement.

The papers are then submitted to relevant faculty and university-wide committees, and our officers are fully briefed to present the key themes and recommendations. The lists of recommendations, reinforced by qualitative student feedback, were a really powerful tool and resulted in a number of impressive changes to the university, including – but not limited to – the installation of a kitchenette on a satellite campus, and longer opening hours for a facility used by over 600 students every week.

Value and confidence

These successes, among many others, proved that our work was valuable. It instilled a new sense of confidence between the SU and the faculties, aided by the close relationships built across our teams. This confidence led to a sort of virtuous cycle, in which the new analysis initiative led to student-led change, which led to improved partnership with the university in creating these changes, and back around to gathering more positive feedback.

The SU has been able to lead on and create a multitude of student consultation and engagement exercises with the support of the university. This has provided the SU with a myriad of ways to collect student feedback. This way, we can provide the officers, faculties, and other services with the evidentiary support and mandate they need to move forward with our partnerships.

At their session at Membership Services Conference (on August 14th at 14:00), ARU’s rep coordinators will be discussing the ins and outs of their methods for data collection, analysis, and recommendation creation. They will also go into more detail on successes, and where they plan to go from here.