When the Department for Education’s Curriculum and Assessment Review landed last autumn, it didn’t pull any punches.

GCSE and A level exams are here to stay.

The review calls for “evolution, not revolution,” keeping externally set and marked exams as the fairest, most reliable way to assess learning, while trimming their volume by around ten per cent.

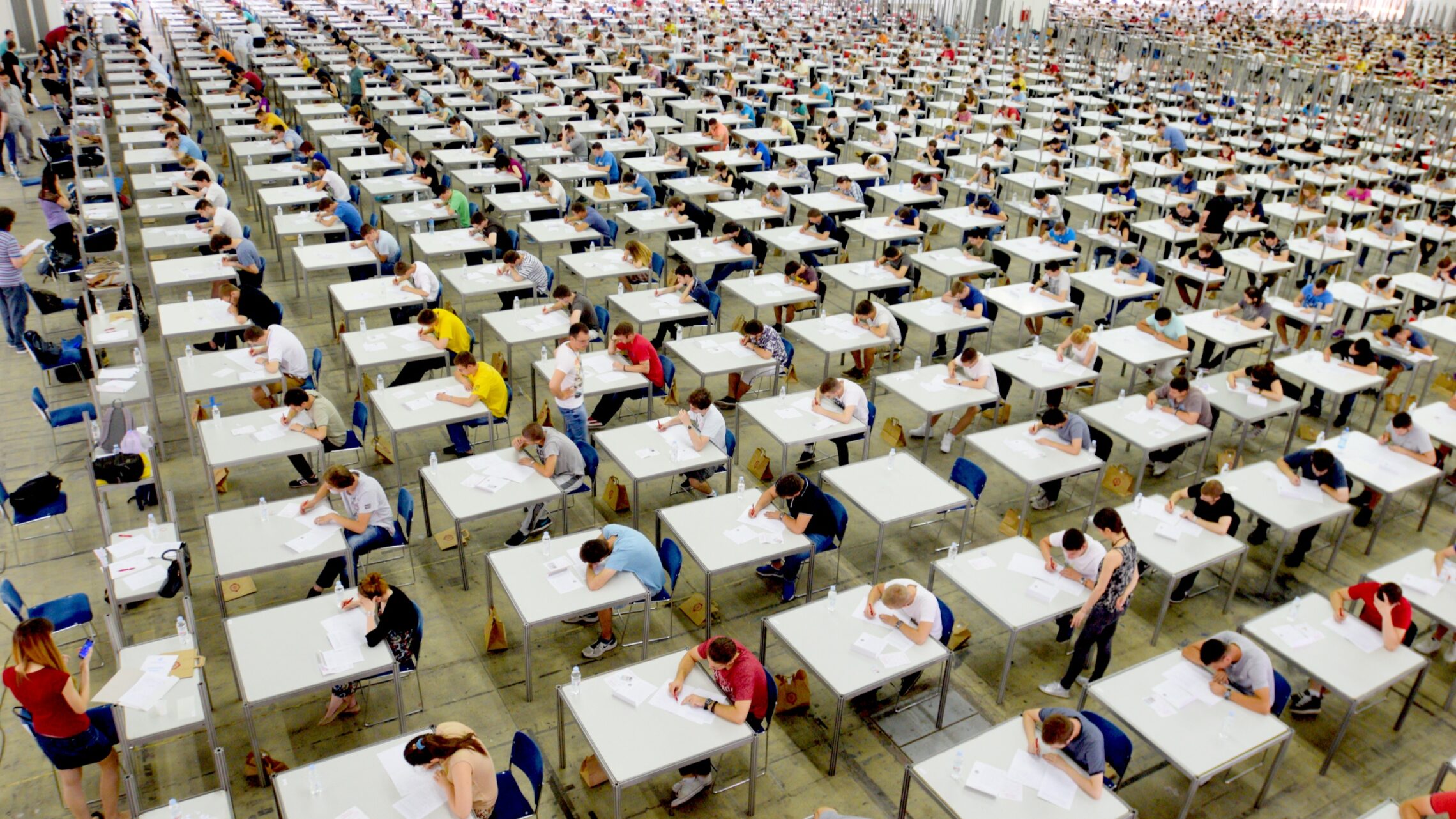

It’s a carefully argued conclusion. Exams ensure consistency, reduce bias, and command public trust. In an age of AI and online assistance, they can also offer a rare guarantee of authenticity.

Yet in higher education, the direction of travel is almost entirely the reverse.

Authenticity

Across universities, assessment reform has been moving rapidly towards “authentic” and applied approaches that mirror the complexity of real-world tasks and test students’ ability to apply knowledge in context. From live client projects and group simulations to reflective portfolios and multimedia submissions, universities are designing assessments that reward creativity, judgement, and collaboration. This isn’t about lowering standards; it’s about broadening what we value as evidence of learning.

Authentic assessment has become a cornerstone of inclusive and engaging practice, supporting academic integrity, wellbeing, and employability. The challenge is that the school system seems to be doubling down on traditional exams, just as universities are diversifying. The result is a widening philosophical gap between the two sectors.

A growing tension

It is useful to reflect on how the Department for Education’s emphasis on standardisation sits uneasily alongside the Office for Students’ expectations of innovation, inclusivity, and skills development. The two agendas pull in different directions, leaving universities to bridge the gap at the point of transition.

For universities, that means investing more in transition support, formative assessment, and staff development to help students unlearn habits formed in an exam-dominated culture. In HE, we’ve spent years trying to make assessment joyful again: something students are excited to engage in, and that celebrates the applied nature of learning. We don’t want to lose that momentum.

Some might argue that the pendulum has swung too far, and that too much variety risks confusion or grade inflation. But most would recognise the move towards greater authenticity as a necessary response to how students learn and how they are expected to work.

The human impact

Each autumn, new undergraduates arrive having spent years mastering the art of recall, timing, and precision under pressure. Within weeks, they’re asked to demonstrate synthesis, critical judgement, and originality. For some, this change is liberating. For others, it’s deeply unsettling.

The very skills that secured high grades at school no longer seem to apply. Students, particularly those from less advantaged backgrounds, can find the shift disorienting: not because of ability, but because the “rules of success” have changed. Assessment literacy becomes a hidden curriculum, and without deliberate scaffolding, confidence dips quickly.

One of the most promising bridges is formative assessment. It gives students space to practise, take risks, and receive feedback without the pressure of grading. It helps them rebuild confidence while maintaining the curiosity and enjoyment that authentic assessment aims to foster. It connects the clarity of school assessment with the creativity and reflection valued in higher education.

The bigger picture

This disconnect matters beyond first-year transition. It risks undermining the government’s ambitions for the Lifelong Learning Entitlement and the creation of a truly modular system of study. If learners are to move fluidly in and out of education across their lives, assessment philosophy must evolve alongside curriculum design.

A lifelong learning system built on short courses and micro-credentials assessed through traditional exams would fail to deliver flexibility or inclusion. The principles that underpin fairness at sixteen will not necessarily serve a forty-year-old returning to study. Without alignment, we risk creating a “lifelong learning” system that still assesses as if learning only happens once.

Time for coherence

Bridging the gap between school and university assessment isn’t about choosing sides. It’s about coherence across a learner journey that spans education, work, and reskilling.

That could mean deploying exams where they are genuinely the best tool to measure attainment, and authentic and applied tasks where they better capture intended outcomes. It could also mean designing a learner journey that shifts gradually from reliability to authenticity, rather than a cliff-edge between sectors. And we should teach learners at school and university to understand the “why” behind each form of assessment.

This needs to start early: schools, colleges, universities, and policymakers should build a common language of purpose that connects reliability with relevance.

The Curriculum and Assessment Review was right to call for “evolution, not revolution.” But perhaps that evolution needs to happen in both sectors. If schools continue to emphasise reliability and universities continue to prize authenticity, the disconnection will grow, and students will continue to feel that the rules of learning change every time they cross an institutional boundary.

Assessment is a mirror of what we value. A genuinely world-class education system would ensure that reflection stays consistent, from the first exam paper to the final degree project, and throughout a lifetime of learning.

I’ve often wondered about the idea of “authentic” in “authentic assessment”: it seems to be highly indexical. Here, for instance, we see “authentic” as applied to examinations, to signal that the students visibly discharged assessment themselves. Elsewhere in these pages this very day (“Shame and fear won’t fix students’ AI use”) we see the idea of authenticity as denoting completed assessment not supported by AI.

I understand authenticity as a transitive idea, i.e., something has to be authentic to something else. In higher education assessment, what this tends to mean is that assessment should reflect some aspect of the graduate workplace. For some reason, it rarely seems to mean authenticity to the here-and-now academic work that goes on inside disciplines.

While there is some value in the question being put here, I find it hard to countenance that the problem is caused solely or mostly by final examinations in pre-university qualifications. Formative work and assessment also exist at that level, and in volume.

I’d agree that in higher education we often default to workplace authenticity, sometimes at the expense of disciplinary authenticity. There’s probably more to unpack there – what gets measured, gets done – and how that shapes the forms of “authenticity” we prioritise.

On the exams point, I wasn’t trying to suggest this is driven solely by final assessment in schools. As you say, there is a lot of formative work. I think the tension I’m getting at is more about the dominant signals of success that students experience over time, and how those shape expectations when they arrive in higher education.

The phrase ‘authentic assessment’ is one of my bugbears. As used in university contexts, it normally seems to be the opposite of authenticity.

On formative assessment, I see its value. I run a course with a defined, marked formative assessment that prepares students for the summative one, but I also think this approach is coming up against a real resource constraint in institutions. I can do it because mine is one of the smallest modules on the course in terms of student numbers. This year, however, I have double the number I had last year (due to normal fluctuations in cohort interest), and suddenly marking this formative assessment to a standard that is useful to students has become a huge task. I can’t see it working as an approach in some of the larger courses.

An excellent article! In Wales, we have a new ‘learner-centred’ curriculum whose efficacy is ultimately assessed through high-stakes testing. Given the heavy content load of many of these qualifications, teachers have little choice but to teach to the test.

Recently, an attempt was made to introduce a 40% non-examination assessment (NEA) element into some of the new ‘Made for Wales’ GCSEs. Depressingly, it was later decided that reliability could only be ensured (and workload mitigated) if the NEA was undertaken under pseudo-examination conditions!

As far as I understand, the UK is an international aberration. The most recent OECD report (The Theory and Practice of Upper Secondary Certification – 2026) charts a global movement towards the sweet spot of multimodal assessment: a balanced combination of formative, viva and recall testing.

When contrasting the performance of Level 3 A level and vocational learners, many of the first-year lecturers I talk to draw a stark distinction. Whilst the former tend to have some deep knowledge, they frequently lack intellectual curiosity and the habit of independent enquiry. By contrast, although vocational students sometimes lack a knowledge element, their capacity to learn enables them to quickly overtake their ‘academic’ colleagues.

With the junior end of the labour market stalling across much of the UK, we need to challenge what is ostensibly a ‘middle-class’ examination fetish, and balance the assessment of knowledge with the competencies, capabilities and habits of mind needed to thrive in near-future industries.

Is there any quantitative evidence of the extent to which ‘assessment reform has been moving rapidly towards “authentic” and applied approaches’? Such approaches are certainly being trialled and advocated by leadership and educational specialists in many places. But given that assessment structure is often devolved (probably rightly, given the needs or natures of different disciplines) to departments or even individual lecturers, that’s not quite the same as saying that they are being adopted on a massive scale on the ground.

This is far from my experience in the last couple of years. Most of the authentic assessments listed do not provide great assurance of learning when it comes to AI and while many educators would prefer not to, a swing back to invigilated exams in HE seems widespread.

The opening statement is correct: yes, the Becky Francis Review of Curriculum and Assessment does indeed assert that “externally set and marked exams [are] the fairest, most reliable way” to assess learning, citing as evidence the consistency achieved by examinations sat by large numbers of students simultaneously under controlled conditions, and by anonymous marking (https://assets.publishing.service.gov.uk/media/690b96bbc22e4ed8b051854d/Curriculum_and_Assessment_Review_final_report_-_Building_a_world-class_curriculum_for_all.pdf, pp. 133, 136).

This evidence, however, is limited to the examination process – controlled conditions and anonymous marking certainly contribute to fairness.

Exams, however, are not solely about process. They’re about outcomes too. And the outcomes – the grades on students’ GCSE, AS and A level certificates – are not fair at all.

Ofqual are most “economical with the truth” in this regard, but they have made two specific statements that are relevant. The first is their acknowledgement that “more than one grade could well be a legitimate reflection of a student’s performance” (https://www.gov.uk/government/news/response-to-sunday-times-story-about-a-level-grades); the second is the evidence, given by Ofqual’s then Chief Regulator, Dame Glenys Stacey, before the Education Select Committee on 2 September 2020, that grades “are reliable to one grade either way” (Q1059, https://committees.parliament.uk/oralevidence/790/pdf/).

Both of these statements are oblique, but both imply that any grade, in any subject, at any level, as printed on a candidate’s certificate is not necessarily the grade the student truly deserves.

This is not fair in any sense.

And, given the ±1 grade uncertainty, the claim that current GCSE and A level exams are “the most reliable way” is highly suspect.

This is an interesting article, as there is on the whole a considerable mismatch between how universities assess students and how the compulsory education sector does. Developing undergraduates’ assessment literacy in the first year is pretty much essential for their future success. A not inconsiderable amount of time is spent in the first trimester ‘re-educating’ students out of the practices they have learned of revising, cramming, and surface-memorising information for examination-based testing. There are many ways we can develop students’ assessment literacy; see, for example, my paper (DOI 10.22159/ijoe.2024v12i5.52282).

I’m all in favour of appropriate authentic assessment, but can I make a plea for the much older concept of validity? It’s validity that is crucial, and we should note that it is quite possible to have inauthentic assessment that is, nevertheless, valid. “Does the task validly assess the learning outcome(s)?” should always be the fundamental question.