The general public would be forgiven if they thought a student’s degree mark and classification was based on an average of all their marks in the courses they took while doing their degree, and that the procedure for calculating this average was similar across all UK universities.

They might also assume that two students who were both awarded first class honours might have achieved similar marks (albeit in different subjects).

However, these reasonable assumptions would be incorrect.

The way UK universities calculate a student’s degree mark varies from university to university, where differences arise in:

- The number of years used in the calculation

- The weightings given to those years (or differential weighting)

- Whether lower marks are ignored (or discounted)

This diversity means that:

- The same set of marks would receive a different classification depending on which university the student attended (or even which degree programme they were on within a given university).

- Students with inconsistent marks are advantaged while those with consistent marks are not.

- We cannot make any meaningful comparisons between universities based on their students’ achievement – which has implications for our understanding of attainment gaps.

Whether it matters that different algorithms produce different degree marks depends on the full extent of this diversity in degree algorithms.

Measuring the variation in UK degree algorithms

In an effort to gauge the range of algorithms used across the sector, in October 2017 Universities UK and GuildHE published survey data from 113 UK universities. However, the picture from the survey results was perhaps a bit piecemeal, in that it identified a range of different weightings applied to years 1, 2 and 3 but did not include any discounting. The extent of discounting was discussed separately (on page 37 of the report), which suggested that around 36 UK universities used discounting in their algorithms. Again, it was not clear whether these 36 universities also used differential weighting.

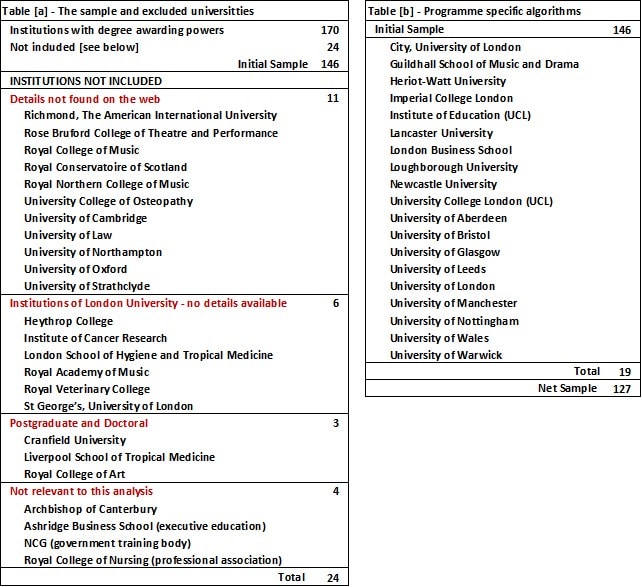

In January 2020, I reviewed the academic regulations for all institutions with degree awarding powers. The method employed was simple (if tedious) and involved looking at all the academic regulations posted on the institutions’ websites. Their algorithms were then classified according to the number of years used and whether discounting and differential weighting were applied. The review revealed a surprising diversity in UK degree algorithms:

- Of the 170 institutions with degree awarding powers, 24 were excluded because the details were not found on the web, they did not offer undergraduate courses, or they were not relevant to undergraduate provision.

- A further 19 institutions were found to be using degree-specific algorithms; that is to say, there is no university-wide algorithm. These universities have general regulations that apply to all programmes, e.g. they might state that the final degree is based on years 2 and 3 (levels 5 and 6 FHEQ) and list a range of prescribed weightings. For example, at Newcastle the degree is calculated using all year 2 and 3 marks (i.e. 240 credits), but programmes have a choice of weightings: 50:50, 33:67 or 25:75 for years 2 and 3 respectively.

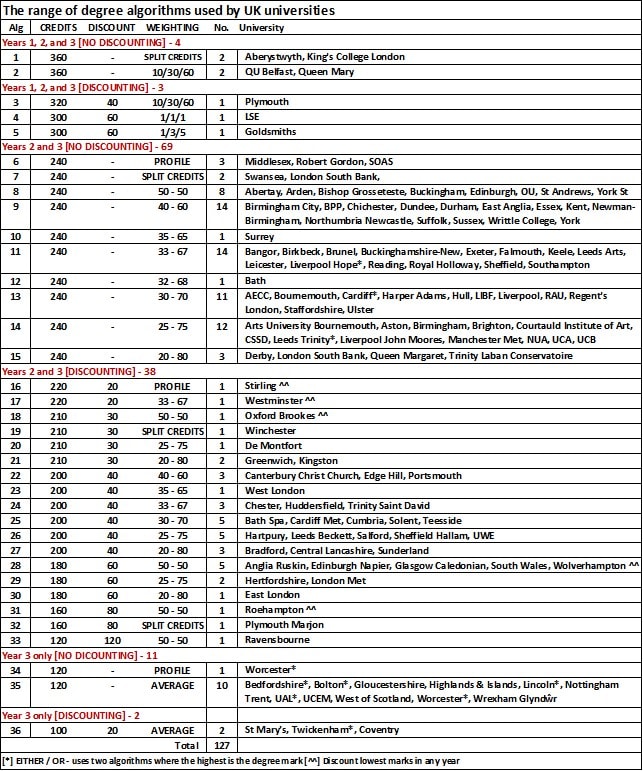

- Of the remaining 127 universities, only 7 used all years of study (years 1, 2 and 3), 107 based the degree mark on years 2 and 3, and 13 used only the final year (year 3).

- Six universities use split-credits; here the marks within a year are batched and weighted differently. For example, at Swansea, the best 80 credits at year 3 are given a weighting of 3, the remaining 40 credits at year 3 and the best marks in 40 credits in year 2 are given a weighting of 2, and the remaining year 2 marks a weighting of 1.

- Five universities use profiling where the preponderance principle is applied to determine the student’s classification (as opposed to degree mark). The process begins by ranking the student’s marks and then looks at the proportion of marks at or above a given mark. For example, a student might be awarded a 1st where they have attained 90 credits at 70% or higher and 30 credits at 60% or higher – this might apply to all year 2 and 3 marks, or part of year 2 and all of year 3.

- Nine universities use two different algorithms (Either / Or), where the final classification is determined by the algorithm with the higher average mark. Generally, the first algorithm uses a broader range of marks (e.g. all credits from years 2 and 3), whereas the second algorithm is narrower and has a higher proportion of year 3 marks or, in the case of 6 institutions, uses only year 3 marks (which is more forgiving to those students with better marks in year 3).

- Of the remaining 116 universities, 76 use differential weighting and 40 use discounting combined with differential weighting.
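The families of algorithm listed above can be sketched in a few lines of Python. This is an illustration only, not any university's actual regulations: the function names and the marks are invented, the weightings are the three Newcastle options quoted above, and the split-credit function follows the Swansea description while assuming six 20-credit modules in each year.

```python
from typing import Dict, List


def weighted_degree_mark(year_averages: Dict[int, float],
                         weights: Dict[int, float]) -> float:
    """Differential weighting: combine yearly averages using per-year weights."""
    total_weight = sum(weights.values())
    return sum(year_averages[year] * w for year, w in weights.items()) / total_weight


def either_or_mark(year_averages: Dict[int, float],
                   broad: Dict[int, float],
                   narrow: Dict[int, float]) -> float:
    """'Either / Or': run two algorithms and keep the higher mark."""
    return max(weighted_degree_mark(year_averages, broad),
               weighted_degree_mark(year_averages, narrow))


def split_credit_mark(year2: List[float], year3: List[float]) -> float:
    """Split credits as described for Swansea (assumes six 20-credit modules a year)."""
    y2 = sorted(year2, reverse=True)
    y3 = sorted(year3, reverse=True)
    weighted = (
        [(m, 3) for m in y3[:4]]     # best 80 credits of year 3, weighting 3
        + [(m, 2) for m in y3[4:]]   # remaining 40 credits of year 3, weighting 2
        + [(m, 2) for m in y2[:2]]   # best 40 credits of year 2, weighting 2
        + [(m, 1) for m in y2[2:]]   # remaining year 2 credits, weighting 1
    )
    return sum(m * w for m, w in weighted) / sum(w for _, w in weighted)


# Hypothetical marks, invented for illustration only.
year2_marks = [50, 54, 58, 60, 62, 64]   # year 2 average: 58
year3_marks = [60, 62, 66, 68, 70, 70]   # year 3 average: 66
averages = {2: sum(year2_marks) / 6, 3: sum(year3_marks) / 6}

for w2, w3 in [(50, 50), (33, 67), (25, 75)]:
    mark = weighted_degree_mark(averages, {2: w2, 3: w3})
    print(f"{w2}:{w3} weighting -> {mark:.2f}")

either_or = either_or_mark(averages, {2: 50, 3: 50}, {3: 100})
print(f"either/or (50:50 vs year 3 only) -> {either_or:.2f}")
print(f"split credits -> {split_credit_mark(year2_marks, year3_marks):.2f}")
```

With these invented marks the three weightings alone give 62.00, 63.36 and 64.00, so the degree mark moves by two percentage points before any discounting is applied.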

Table 1

The full range of algorithms is shown in Table 2. Significantly, no university takes the straight average of all years. The closest to this overall average is the LSE algorithm (algorithm 4), which nonetheless ignores the 60 credits with the lowest marks in year 1.

Table 2 also shows that there is further variety within a given algorithm. For example, some universities discount the lowest marks in any year (see marker ^^ in table 2) – which makes the algorithm almost unique to the student. Likewise, in algorithm 26 two of these universities (UWE and Hartpury) allow the unused credit from year 3 to be “counted towards the second 100 credit set of best marks” (i.e. year 2). That is to say, depending on the student’s marks, up to 40 credits in year 2 could be discounted.
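Discounting itself is easy to express in code. The sketch below is a simplified assumption rather than any institution's rule: it drops whole modules, lowest mark first, until a credit allowance is used up, and the helper names and marks are hypothetical.

```python
from typing import List, Tuple

Module = Tuple[float, int]  # (mark in per cent, credits)


def discount_lowest(modules: List[Module], credits_to_drop: int) -> List[Module]:
    """Drop whole modules, lowest mark first, until the credit allowance is used."""
    kept = sorted(modules, key=lambda module: module[0])
    dropped = 0
    while kept and dropped + kept[0][1] <= credits_to_drop:
        dropped += kept[0][1]
        kept.pop(0)
    return kept


def credit_weighted_average(modules: List[Module]) -> float:
    """Average mark, weighting each module by its credits."""
    total_credits = sum(credits for _, credits in modules)
    return sum(mark * credits for mark, credits in modules) / total_credits


# Hypothetical year 2 marks on 20-credit modules.
year2 = [(48, 20), (55, 20), (60, 20), (62, 20), (65, 20), (70, 20)]

before = credit_weighted_average(year2)
after = credit_weighted_average(discount_lowest(year2, 20))
print(f"year 2 average: {before:.1f} before discounting, {after:.1f} after")
```

Because the module that gets dropped depends on each student's own marks, two students on the same programme can have different sets of counting credits, which is the sense in which the algorithm becomes almost unique to the student.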

This review found yet more variation in terms of the degree classification boundary marks. When it comes to a 1st, the boundary ranges from 68% (Bradford University) to 70%, with 69.5% being very common. Similarly, the zone that attracts borderline consideration can extend from 1.5 to 2.5 percentage points below a given boundary mark.
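The effect of moving the boundary can be sketched as follows. The first-class boundaries of 68, 69.5 and 70 come from the review above; the lower boundaries of 60, 50 and 40 are the conventional ones and are an assumption here, as are the function name and the 2.0 point borderline width.

```python
from typing import Optional, Tuple


def classify(mark: float,
             first_boundary: float = 70.0,
             borderline_width: float = 2.0) -> Tuple[str, bool]:
    """Return (classification, borderline flag) for a final degree mark."""
    # Lower boundaries are the conventional 60/50/40 (an assumption).
    boundaries = [("1st", first_boundary), ("2:1", 60.0), ("2:2", 50.0), ("3rd", 40.0)]
    label = "Fail"
    boundary_above: Optional[float] = None  # nearest boundary the mark falls short of
    for name, threshold in boundaries:
        if mark >= threshold:
            label = name
            break
        boundary_above = threshold
    borderline = (boundary_above is not None
                  and boundary_above - mark <= borderline_width)
    return label, borderline


# The same hypothetical degree mark under three first-class boundaries.
for boundary in (68.0, 69.5, 70.0):
    print(boundary, classify(68.5, first_boundary=boundary))
```

A mark of 68.5 is an outright 1st where the boundary is 68, but only a borderline 2:1 where it is 69.5 or 70.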

Table 2

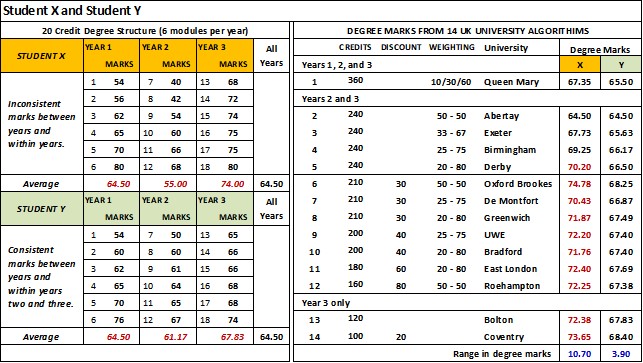

Why it matters – student attainment comparison

We can illustrate the implications that this diversity in degree algorithms has for students’ attainment using a worked example. Table 3 lists the module marks for two students, X and Y, on a degree programme using 20 credit modules. Student X’s marks could be described as inconsistent – with big differences between the yearly averages, whereas Student Y’s yearly marks are more consistent. It is notable however that in this example, both students have the same year 1 average (64.5 per cent), the same average for years 2 and 3 combined (64.5 per cent – see the mark for Abertay) and the same average across all years, again 64.5 per cent.

The table shows how 14 different algorithms use these module marks to derive the students’ degree marks and demonstrates that, when combined, differential weighting, discounting and different counting years can have a significant effect on the student’s final degree mark and classification. To reiterate, these differences mean that:

(a) The same set of marks would receive a different classification depending on which university the student attended (see Student X).

(b) Students with inconsistent marks are advantaged while those with consistent marks are not (compare Student Y to Student X).

For Student X the range in their degree marks is 10.7 percentage points: they would receive a 1st (76.65 per cent) had they gone to Coventry, but a 2:1 (64.50 per cent) had they studied at Abertay. We might also wonder whether Student X’s marks reflect what those outside the sector (e.g. employers, parents, and media) might commonly think of as the likely marks that make up a 1st.

Conversely, the range in degree marks for Student Y is only 3.9 percentage points and they would have received a 2:1 no matter what university they attended (see appendix – table D). However, had Student Y’s average marks for years 2 and 3 been higher e.g. 68 per cent, the spread of degree marks (3.9 percentage points) might have resulted in some algorithms returning a degree mark above 70 per cent.
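The mechanism behind this difference in spread can be reproduced with a small sketch. The yearly averages below are hypothetical and are not the Table 3 marks; they are chosen only so that both students share the same year 1 average, the same years 2 and 3 average and the same overall average of 64.5. The four weighting schemes are likewise illustrative and include no discounting.

```python
from typing import Dict


def degree_mark(year_averages: Dict[int, float], weights: Dict[int, float]) -> float:
    """Weighted average of the yearly averages that the algorithm counts."""
    total = sum(weights.values())
    return sum(year_averages[year] * w for year, w in weights.items()) / total


# Hypothetical yearly averages (not the Table 3 data).
students = {
    "X": {1: 64.5, 2: 55.0, 3: 74.0},   # inconsistent across years
    "Y": {1: 64.5, 2: 63.0, 3: 66.0},   # consistent across years
}

# Illustrative weighting schemes only.
algorithms = {
    "all years, equal weight": {1: 1, 2: 1, 3: 1},
    "years 2 and 3, 50:50": {2: 50, 3: 50},
    "years 2 and 3, 25:75": {2: 25, 3: 75},
    "final year only": {3: 1},
}

spread = {}
for name, averages in students.items():
    marks = {label: degree_mark(averages, w) for label, w in algorithms.items()}
    spread[name] = max(marks.values()) - min(marks.values())
    for label, mark in marks.items():
        print(f"Student {name}, {label}: {mark:.2f}")
    print(f"Student {name} spread: {spread[name]:.2f} percentage points")
```

Even without discounting, the inconsistent student's mark moves across a much wider range than the consistent student's, and only the inconsistent student can cross the 70 per cent boundary.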

Table 3

The unfairness of the current arrangements speaks for itself. This inequity probably drives QAA recommendations that institutions reduce the variation within the institution (although the QAA is less vocal about the variation between institutions). Similarly, the complexity of some of these algorithms makes it challenging for students to set target marks or to gauge how they are doing in their studies.

There is however one major issue that has not been considered. Namely, the impact this variety in algorithms (or indeed any widespread changes in them) has on any comparative analysis involving degree outcomes, principally, attainment gaps based on the proportion of good honours (issue (c) above).

Why it matters – comparisons between universities

Under the current system, the traditional classifications are not standard measures of attainment. If asked “when is a First a First?” we can only say … “Well it depends on which university the student went to.” Furthermore, as it currently stands, where some students’ achievements can only be described as ‘algorithm assisted’, we are not comparing like with like. We can demonstrate the problem using two media-based league tables.

In the Complete University Guide 2020 league table, Coventry’s proportion of good honours is 76.1 per cent, which compares to 75.7 per cent for Brunel. Yet the two algorithms are very different (algorithms 36 and 11 respectively; see table 1), such that Brunel’s achievement says something different about its staff and students – but which, in the absence of any unadulterated averages, is near impossible to quantify.

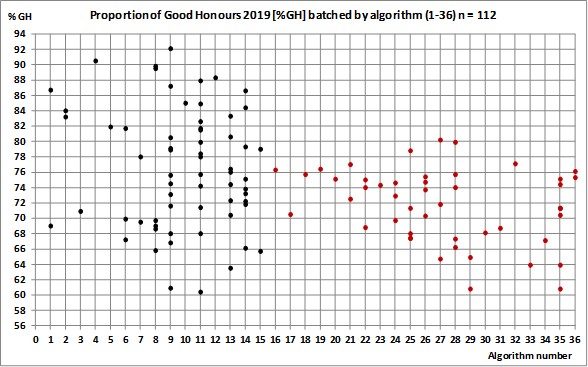

As figure 1 shows, it is only with some understanding of the range in degree algorithms that we could start to identify meaningful comparisons but only within a particular algorithm.

More generally, figure 1 suggests that discounting (algorithms 16 onwards) is perhaps concealing the true extent of student achievement (or lack thereof) in a large number of universities. However, this conclusion rests on the assumption that generally, the discounted marks are lower than the counting credits, and that year two marks are also lower than the final year marks.

Figure 1

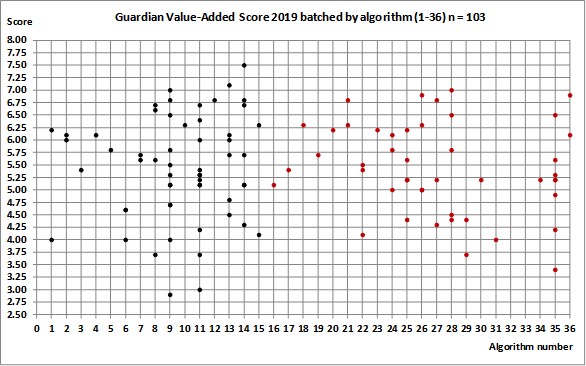

The same problem affects the Guardian league table and its value added score, which “… compares students’ degree results with their entry qualifications, to show how effectively they are taught. It is given as a rating out of 10.”

In the 2019 table, Sheffield (algorithm 11) and Nottingham Trent (algorithm 35) both have a value added score of 5.1, yet the algorithms are very different, such that we cannot reasonably make a comparison between the two.

Figure 2

At a national/policy level this variety in algorithms also makes it difficult to formulate informed policy. A case in point would be accurate measurement of attainment gaps based on the proportion of good honours across different groups of students. Currently, we cannot know if these gaps are being reduced or increased because of differences (or changes) in university degree algorithms.

A proposal

The problem defines the solution: we need a standard measure of attainment. If higher education policy is to be better informed there has to be a “levelling up” in the measurement of student attainment.

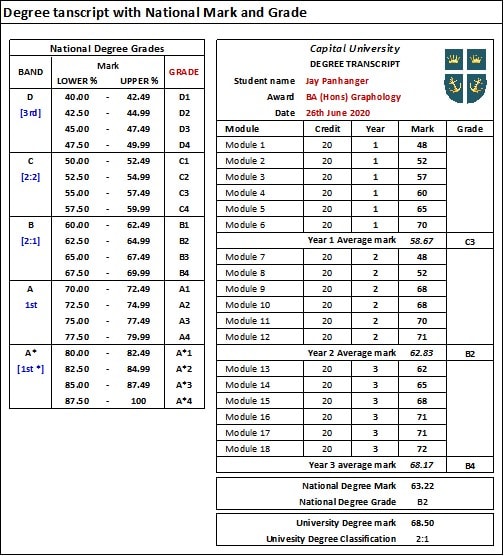

To this end, I would propose a national degree mark and grade based on the students’ average marks across all years. This mark and the yearly averages would be recorded in the student’s transcript alongside the university’s mark and classification (see table 4). These marks would also be supplied to HESA and used in its annual report on student attainment.
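As a rough sketch of what would be recorded, the calculation is just a credit-weighted average with no discounting and no differential weighting. The function name, the transcript layout and the marks below are hypothetical.

```python
from typing import Dict, List, Tuple

Module = Tuple[float, int]  # (mark in per cent, credits)


def credit_weighted_average(modules: List[Module]) -> float:
    """Average mark, weighting each module by its credits."""
    total_credits = sum(credits for _, credits in modules)
    return sum(mark * credits for mark, credits in modules) / total_credits


def national_degree_mark(transcript: Dict[int, List[Module]]) -> Tuple[float, Dict[int, float]]:
    """Return (average across all years, yearly averages) with nothing discounted."""
    yearly = {year: credit_weighted_average(mods) for year, mods in transcript.items()}
    all_modules = [module for mods in transcript.values() for module in mods]
    return credit_weighted_average(all_modules), yearly


# Hypothetical transcript: two 60-credit modules in each year.
transcript = {
    1: [(62, 60), (67, 60)],
    2: [(55, 60), (59, 60)],
    3: [(70, 60), (74, 60)],
}

overall, yearly = national_degree_mark(transcript)
print(f"national degree mark: {overall:.1f}")
for year, average in yearly.items():
    print(f"year {year} average: {average:.1f}")
```

Reporting the yearly averages next to the overall mark keeps any upward or downward trajectory visible even though the headline figure treats every credit equally.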

Table 4

Importantly, universities would not have to change their current algorithms nor their academic regulations (their autonomy remains intact). That is to say, nothing is ‘being changed’, it’s simply ‘being added to’. Indeed, as an unadulterated average, this national degree mark would not require any regulations – it is what it is.

For universities, this proposal is also cheap to implement: they have the data already, and it only requires changes to the coding of existing databases to collate, record and publish this data. In all respects, this proposal is a simple bureaucratic change in the reporting of student achievement; as such, it does not really require any extended period of consultation with universities.

The data underlying this analysis is available as an excel file.

Having done similar analysis (more granular, with a smaller set of institutions) I would agree that the huge variation, and the extent to which that variation is defensible, is genuinely interesting.

However, I’m not sure that complete comparability is achievable or desirable (teaching and assessment practice, content and the pass mark/marking criteria all have a significant impact, and are not consistent), even if this does make (more of a) nonsense of the OfS’ treatment of statistics. Nor am I certain that the simple, elegant solution posed entirely solves the problem. Other matters to consider are: the extent to which modules are permitted to be resat or reassessed (what level, how many times) and whether the results of those resits are capped; whether students are currently encouraged to experiment in non-counted first years or optional modules; the approach taken to condonement or compensation (e.g. some HEIs replace module marks with a pass mark); the extent to which students are permitted to study lower level modules during the higher levels of their degree; whether assessment scores are changed in light of mitigating circumstances or not.

As long as institutions control their own curriculum, there will be variation. I’d favour retaining autonomy, and it would be hard(er) for the OfS to achieve the market diversity it seeks if it told everyone to behave the same way (though it has considerable experience in, and aptitude for, cognitive dissonance so this may not be a barrier in practice). What I think we could reasonably see is a shift away from some of the potentially sharper and less defensible practices that (appear to) only benefit the student: for example, using the ‘best of’ multiple algorithms; discounting or under-weighting only lower marks (rather than lower and higher, or marks from non-core modules).

As the article demonstrates, there is a lot of variation in the degree algorithms used across the sector and, in some cases, within an institution. There is definitely a case for increased simplicity and transparency for students. However, the analysis does not account for the differences in teaching, learning and assessment across institutions, which algorithms often look to account for. Even something as simple as module size and the assessment strategies of those modules can affect a student’s performance, with algorithms being just one part of the overall academic regulations that apply to students. This is before we start to consider learning outcomes and how institutions look to ensure students have met them within a programme.

Whilst the solution presented claims to be an add on, it will not account for nuances and as an over simplistic solution will likely have a number of unintended consequences for institutions and students.

Hello Andy – you are completely right about the wide variety in the reassessment of modules, capping of marks, condonement and compensation and much more besides (e.g. in particular the rules for ‘uplifts’). Indeed, to record and classify this variety would have taken another two months of work. Likewise, I agree that as long as institutions control their curriculum there will be variation – which is only right and proper. What I would like to stress is that my proposal is not asking for any of this to be changed or harmonised (but it can be if universities so wish). The national degree mark and grade are simply an alternative accounting – which in the scheme of things will still reflect the diversity in curriculum (which will be interesting to see). In the meantime, as a simple average, all stakeholders; students, parents, employers and the media, will easily understand this degree mark and grade.

Hello Phil – as with Andy’s comments above, I agree there is variation in teaching and assessments across institutions, which is a good thing. You also raise a good point about module sizes – my own simulations show this can be very relevant where discounting is used.

I would however quibble about whether all algorithms are configured to account for these differences – as most regulations do not offer any rationale (to students or staff) for ignoring year 1, discounting and differential weighting. Which leaves us asking why are we doing this?

In the meantime, I think we can overlook how students respond to the regulatory framework of their university (once they have understood them). For instance, discounting creates perverse incentives – if a year or module ‘doesn’t count’ (or counts less), then why bother. Indeed, it is my (long) experience that a small but significant proportion of failing students got into difficulties because they made the wrong decision based on a misinterpretation of the academic regulations – itself an unintended consequence.

In this regard I have given much thought to second-guessing any ‘consequences’ of this national degree mark and grade and will hopefully be exploring this in a later piece for WONKHE. My initial thoughts are that discounting and differential weighting are treating a symptom (e.g. inconsistent marks, or lower marks at year 2 compared to year 3 – real issues to students) without tackling the cause. Publishing yearly averages might encourage universities to look closer at their teaching provision across years 1 and 2 and allocate more resources that then help all their students to realise their full potential. For the student – look after the national degree mark and the classification will look after itself (whatever university they are in).

Thanks David, I agree with what you’ve said, and didn’t mean to imply you were implying we needed greater standardisation.

Your proposal, as you said, is about an alternative measure which would avoid the need for standardisation. My point was more that a(n admittedly smaller) number of the wider factors which are not part of, but which directly affect, a student’s final classification will also affect the way individual module marks are determined, and therefore also your simple alternative average. Permitting reassessment even if a module is passed and/or not capping modules and/or permitting credits at lower/higher levels and/or changing marks via condonement/mitigation (to pick just a few examples from those I’ve seen in a relatively small sample) makes a significant difference to module marks.

On this basis, I think there’s a risk that even a simple, comparable solution such as the one you have proposed ends up presenting an alternative measure which is still far from comparable (even if it is presented as such).

I think there’s also an unanswered question about the extent to which academic staff adapt to the situation in which they work: to what extent is institutional marking culture driven by a desire to ensure broadly comparable outcomes with broadly comparable institutions regardless of differences in algorithm (and everything that goes with the algorithm)?

Hello again Andy

If I have read your comment correctly you are making the following points: (i) that the regulations and procedures around individual module marks can have a significant impact on a student’s classification, particularly in the case of marginal degree marks which are uplifted using the preponderance principle (where there could be pressure on staff to avoid awarding marks close to a boundary e.g. 59% or 69%), or more generally whether the mark is capped at 40% etc. This being so then (ii) an overall average yearly mark will be affected in a similar manner. This then means the overall average mark (national degree mark) is not as ‘comparable’ as it might appear [yes?].

All very true – but let’s remember the difference is being generated by the regulations surrounding module marks (and these vary as much as the algorithms do) which is then exacerbated further by the variation in the algorithms. However, the national degree mark confines or corrals this diversity in regulations at module level, such that I would argue that the national degree mark has more ‘comparability’ than the current arrangements.

Picking up on Phil’s point about unintended consequences – if the national degree mark is adopted I can see a situation developing where universities start to change the regulations surrounding module marks. However, to make resit opportunities easier (or more numerous) will probably create a resit culture – which is very costly for universities to accommodate (here I speak from experience).

As to the unanswered question of markers seeking comparability (with similar institutions) in their marking, well this should come down to consistency in the use of clear and unequivocal marking criteria (which is another story).

Interesting article with some valuable analysis. However, we need to recognise the benefits of variation to appreciate the full complexity of the situation.

Three examples:

i) in a medical or other professional course, you could attain highly on average but, if you’re failing key modules that determine safety of practice, this would not be considered good performance.

ii) in arts degrees it is understood that you advance your practice year on year, building to a final “piece.” Surely it makes more sense for the weighting to be shifted to the final year in this case?

iii) having a poor performing module discounted can be a safety net for students who wish to take a risk and study a language that they never have before, without it affecting their final grade.

Variation can encourage good practice, not simply encourage gaming or laziness. It’s also evident that a one-size-fits-all approach does not accommodate the differences in subjects above.

However, I do agree that many of these algorithms need to be simplified and easier to understand for students and, where there is justification, for this to be clearly given.

Hi David – Yes, that is broadly what I’m saying. But I was actually surprised at how much effect module-level rules could have on your proposal. To give two examples which would impact at different levels (again, this is from a much smaller sample than you used for your study of algorithms themselves):

At lower levels of study: take two, large pre-92s in the same region. Both permit resits at Levels 4 and 5. At one all resits are uncapped, with the resit mark replacing the original failing mark; at the other, all resits are capped, and capped at 30 (ten marks below the pass mark). For students who fail one or more modules, this will produce wildly different module results (and therefore final averages).

At higher levels of study: in the final year of an Integrated Master’s degree, some institutions have a pass mark of 50, and make use of Postgraduate Assessment Criteria which award a mark of 50 for meeting the learning outcomes for a module (scaling thereafter). Some institutions have a pass mark of 40, and use Undergraduate Assessment Criteria which award a mark of 40 for meeting the learning outcomes for a module (scaling thereafter). Students who meet or exceed the LOs for a given module will receive marks that differ by up to 10pp (assuming marking is comparable).

Thanks Leigh

You are (I think) questioning the comparability of the national degree mark and grade, or more generally the whole notion as to whether comparability across UK degrees is desirable (which seems to be a common thread in the comments made so far).

So to be clear: I accept (unreservedly) that there is a difference between degree programmes, furthermore, I would not support any sort of ‘national curriculum’ being applied to the UK Higher Education sector (professional accreditation aside – see below).

This is the old debate about whether a degree in History from Oxford is ‘comparable’ to one from Oxford Brookes – where the clear answer is ‘no they are not’. The research interest of the staff alone probably shapes the differences in the learning outcomes between the two programmes. In much the same way a degree in Medicine is not the same as one in English Literature.

What I am arguing is that the difference should not be down to how the mark and classification are calculated. As it currently stands, a 1st from Oxford is not the same as a 1st from Oxford Brookes – because the two universities calculate the degree mark/classification differently. This proposed unadulterated average is explicit and allows comparisons – a 70% is 70%, and leaves in place the more interesting discussion of whether one university degree programme is ‘better’ or perhaps more relevant than another. That is to say, this national degree mark and grade (NDM/G) gives us the opportunity to discuss if not celebrate the diversity in UK HE provision.

Turning to the specific examples you mention

(i) Medicine and Professional courses. I am no expert on accredited programmes – but I suspect they are the closest we can get to a ‘national curriculum’. This being so, it seems counter intuitive to allow discounting and differential weighting to compromise any comparisons between future practitioners within the same discipline (here we could certainly do with the views of those who hire these potential practitioners).

(ii) Arts degrees. Again, I am no expert. However, given that all degree programmes have clearly stated learning outcomes, level by level, where each is intimately linked with some notion of progression to the overall learning outcomes, discounting and differential weighting privileges one set of learning outcomes over the other (when I would assume they are equally important). In this regard discounting can then ignore some of these learning outcomes altogether – and in an arbitrary way. I also worry that higher weightings on the final year can be particularly harsh on those students whose final year marks are a bit of a disaster (for whatever reason).

(iii) Taking Risks. This is interesting and yes we should encourage students to ‘step outside’ their chosen programme by, as you suggest, taking a language. My view here is whether the possible ‘extra-curricular’ options should be included in the programme specification (and thus counted) in the first place.

This leads us to the notion of ‘additionality’ where students are encouraged to take on extra (accredited) courses that then are listed in the Higher Education Achievement Report (HEAR) and which can build their CVs (e.g. courses in Excel etc.). It’s here universities could probably do more in (a) offering such courses, or relaxing the rules to allow students to take (non-counting) modules in other programmes and (b) facilitating the students to take up such courses (in much the same way we currently facilitate sporting activity).

To pick up on your final point about ‘gaming and laziness’, it’s my experience that when students understand discounting and differential weighting their decision to ‘take the foot off the pedal’ is a rational one based on the costs and benefits. The risk is that without clear direction the regulations (if not the calculation) can be misinterpreted. This is particularly the case with ‘discounting’ – which can be wrongly interpreted as being allowed to ‘drop a module’, i.e. the module can be failed and does not need to be retaken later – which has dire consequences for progression.

Again Andy, you are right! Currently, the variation in module regulations means that the national degree mark (and grade) will be different (at least to start with).

However, what’s missing from your two undergraduate examples is the rationale behind each regulation – allowing an uncapped mark or capping the resit mark at 30%. I expect that in the latter case the university uses discounting and knows this procedure will ignore the 30% mark. I would, however, advise this university to revisit this regulation: if 30% is a fail, then the learning outcomes of that module have not been met – which could then mean the student has (probably) not met the overall learning outcomes of the programme. Otherwise, such a fail would (normally) mean the student is not eligible for the Honours designation (which is usually awarded to students who have passed 360 credits). I would worry that students in this university might not fully understand the implications of the 30% capped mark.

The point is that, using a reasonable and easily understood average, we can then start to look at the regulations surrounding modules and ask ourselves: do we have these ‘right’, and can we justify them? (In much the same way this article has done in terms of the current approaches to calculating final degree marks and classification.)

Turning to the integrated masters, it’s my understanding that, in much the same way that universities offer interim certificates and diplomas in higher education (levels 4 and 5 respectively), those on integrated masters will be awarded an interim degree (presumably with Honours) – which the national degree mark and grade can accommodate. While I am not an expert, the variation in pass marks (etc.) on the final masters’ element needs to be looked at – in particular the rationale (or lack of one) behind any particular regulation. It’s my understanding that on most masters the pass mark is 50% – which in part distinguishes the masters programmes from undergraduate programmes.

A fascinating and thought-provoking discussion that opens up a plethora of questions. It would be very interesting to see a comparison between UK algorithms and those used in other countries.

Again I am no expert, but it looks like the rest of the world uses variations of the Grade Point Average – particularly in the USA and Asia. Europe also has a unified system – the European Credit Transfer System (ECTS) – where the general belief is that “principles of justice and fairness, deemed central to academic freedom, are best upheld by the use of a unified grading system at national and European levels” (page 1). (See: Karran, T. (2005), Pan-European Grading Scales: Lessons from National Systems and the ECTS, Higher Education in Europe, Vol. 30, No. 1, April 2005. Higher Education in Europe is available online at: http://www.informaworld.com)

Ever since I came across “Degrees of freedom: An analysis of degree classification regulations” (Curran & Volpe, 2003) I’d hoped to find five minutes (!) to repeat a similar exercise so am very pleased you’ve managed to do something like it (thank you!).

I think the Degree Outcomes Statements will prove interesting in this regard – we will be addressing your queries (i.e. I would however quibble about whether all algorithms are configured to account for these differences – as most regulations do not offer any rationale (to students or staff) for ignoring year 1, discounting and differential weighting) in our DOS.

I look forward to your analysis (but I confess I do not know what a ‘DOS’ is). I do know that of all the regulations I looked at the rationale for discounting and differential weighting was ‘thin on the ground’. Some regulations talked of ‘exit velocity’ (as a justification of a higher weighting on the final year) but none I read (or at least comprehended) explained discounting (including the omission of year one marks).

Hi again David. Interestingly, no, neither HEI discounts. I suspect the resit cap is set at 30 because the HEI compensates marks of 30 (i.e. deems them to be passes, as long as they aren’t core and other criteria are met), and runs compensation before resits – the cap of 30 thereby avoids advantaging students who receive a mark of below 30 and resit over those who receive automatic compensation.

In fact, the widespread use of compensation – which typically comes with a minimum threshold and other criteria, such as an average of 40 for the year, meaning narrow fails can be deemed passes – and condonement – where a certain proportion of modules can sometimes just be failed – means that the vast majority of universities graduate (some) students with fewer than 360 credits (before any mitigating circumstances are taken into account). These kinds of practices remain an explicit expectation within the Maths and Physics Subject Benchmark Statements (which argue that students in those disciplines should not be expected to be good at or pass all areas within those programmes). However, these failing marks frequently count towards the final degree classification (i.e. they are not discounted), meaning that this kind of practice doesn’t affect the degree class, just the module marks, student progression and the proposed overall module average.
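
To make the compensation rule concrete, here is a minimal sketch. The function name and the default values (a threshold of 30, a pass mark of 40 and a required year average of 40) are assumptions pieced together from the figures mentioned in this thread, not any particular HEI's regulations, which will differ in their detail.

```python
def is_compensated(mark, year_average, is_core,
                   threshold=30, pass_mark=40, required_average=40):
    """Return True if a narrowly failed module would be deemed a pass.

    All default values are illustrative assumptions, not real regulations.
    """
    if mark >= pass_mark:
        return False  # already a pass, so there is nothing to compensate
    # A fail is compensated only if the module is not core, the mark
    # reaches the minimum threshold, and the year average is high enough.
    return (not is_core) and mark >= threshold and year_average >= required_average


print(is_compensated(35, 55, is_core=False))  # narrow fail, good year average
print(is_compensated(25, 55, is_core=False))  # below the minimum threshold
print(is_compensated(35, 55, is_core=True))   # core modules are excluded
```

Note that in this sketch the compensated module keeps its original failing mark; only its pass/fail status changes, which is why such marks can still count towards the final classification.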

I still worry that an additional measure will not be comparable even if presented as such, and just becomes one more point of difference to explain (similarly GPA has been suggested as a solution to the issue of ‘Grade Inflation’, which comes with the same challenges, and is of course widely used in North America, which is the source of the majority of the literature looking at the problem of … grade inflation). However, I do look forward to everyone explaining their degree classification algorithm in one place as part of the work on Degree Outcomes Statements, as that will at least make it easier to undertake analysis in future.

You are correct that most institutions require a pass mark of 50 for master’s, but not all do (some have 40, and some have 50 for standalone master’s and 40 for integrated master’s). However, the pass mark is an arbitrary number assigned to the fact that students have met the learning outcomes. If LOs are comparable on master’s, then institutions with a pass mark of 50 do not have higher academic standards, they have lower academic standards (as meeting the LOs at one HEI will net you 50, whereas doing the same at another will net you 40, with a resulting negative impact on degree classification).

Hello Andy, thanks for the clarifications regarding compensation and condonement (a minefield in its own right).

I unreservedly accept that while there is variation in the regulations covering modules this suggested additional measure will not offer perfect ‘comparability’ (and can’t). However, we need to remind ourselves where the current variety in regulations manifests itself:

(i) at module level when determining marks and passes

(ii) at degree level when determining the overall classification

The proposed national degree mark and grade is designed to tackle the issues and implications that arise in (ii), which are bigger in magnitude than the issues that arise in (i).

It’s here that universities face a weights and measures problem. This I can explain by relating a short story. I was explaining my work on algorithms to a group of people in my local pub, and I showed them the example of student X and Y (as above). It was a retired fighter who coined the phrase ‘algorithm assisted’ when looking at the 1st from the 10 universities in the table. A qualified (working) mental health nurse was more forthright and described these marks as ‘Fake Firsts’. The others around the table thought it was ridiculous, unbelievable, and bonkers.

This impromptu workshop really demonstrated to me that universities are too self-absorbed and are (in my view) taking liberties with the concept of autonomy and the privileges it confers on them. While they should vigorously defend the diversity in curriculums that autonomy encourages, they need to address (as a matter of urgency) how students’ achievements across these diverse curriculums are described and measured.

In this context, I read with interest Natalie Day and Chris Husbands’ recent WonkHE article (Do universities matter in post-Brexit Britain), where they quote the Policy Exchange report “that universities are ‘out of touch’ and have ‘lost the trust of the nation’ in some critical areas. There is a gap, argue the authors, between how the sector sees itself and how others see the sector”.

As far as I can see, this describes well the views of the customers at my local pub. To this end, I believe that the adoption of a national degree mark and grade would greatly help universities to “fulfil their more civic missions” and close this gap.

I would expect most people would want a degree to show how much someone has learnt by the end of their degree, not how much they knew in the first year of the degree. Shouldn’t it be possible for someone to start out with lower skills and to catch up with their peers by the end of their degree?

This suggests to me that the final year should be weighted much higher and the first year discounted completely.

PS apologies for this late reply but I have had problems loading in replies

Hello Dave

Clearly, this is a view shared by many – after all, in the sample I looked at, only 8 universities weighted the second and third years equally, and only 7 included the first year.

However, it’s worth remembering that the proposed national degree mark and grade (NDM+G) will not replace the algorithms currently used by UK universities. Rather it complements them and I think we will see a general improvement in the students’ academic performance.

To explain: it’s my experience that for students there are two aspects to learning (at university level), where the first is actually internalising that new understanding and the second is being able to express that new understanding, where the latter is perhaps more of a skill than an aptitude. It is my firm belief that putting the first year on a par with years two and three should incentivise students to use the first year to hone their skills in expressing their learning – which will pay dividends later.

Otherwise, there is the issue of the student who has a fairly disastrous final year (for whatever reason), where their marks are perhaps lower than those of years 1 and 2. Here the heavier weighting on the final year just makes a bad situation worse. On the other hand, the NDM+G, in recognising earlier achievements (equally), compensates for these unfortunate events.
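
A small sketch may help to show the size of this effect. The year averages and the 0/30/70 weighting below are hypothetical figures chosen purely for illustration; they are not taken from any university's regulations, and the function name is my own.

```python
def weighted_degree_mark(year_averages, weights):
    """Weighted mean of the year averages (weights need not sum to 1)."""
    total_weight = sum(weights)
    return sum(m * w for m, w in zip(year_averages, weights)) / total_weight


# Hypothetical student: solid years 1 and 2, then a poor final year.
marks = [68, 66, 52]

# All three years count equally (the NDM+G approach as described above).
equal = weighted_degree_mark(marks, [1, 1, 1])

# Assumed alternative: year 1 ignored, final year weighted most heavily.
final_heavy = weighted_degree_mark(marks, [0, 30, 70])

print(round(equal, 1), round(final_heavy, 1))  # 62.0 56.2
```

With the usual boundaries (60 for an upper second, 50 for a lower second), the same three year averages give a 2:1 under equal weighting but a 2:2 once the final year dominates.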