It’s been a busy few months for UK higher education, so perhaps it’s not surprising that HEFCE’s 27 October 2017 release of the POLAR4 dataset went largely unremarked. Indeed, if you search for the term, the top three hits are from HEFCE’s own website and the next three are, at the time of writing, a producer of heart-rate monitors, an expedition from the World Arctic Fund, and (my personal favourite) a songwriting duo with a penchant for Christmas music.

POLAR 4 all

But in our ever-more-metricised environment, we should pause and consider what this new dataset means for universities. Data nerds and widening participation professionals will be familiar with POLAR.

For those who aren’t, it’s a measure, created by HEFCE, of the proportion of 18- and 19-year-olds in a particular geographic area who progress to university. First produced in 2005, based on university progression data from 1994 to 2000, POLAR was subsequently updated in 2007 and 2012 to ensure that the changing profile of geographic areas was captured.

The measure is divided into quintiles, with quintile 5 being the most ‘advantaged’ areas (with the highest progression levels), and quintile 1 being the group traditionally classified in HE metrics as ‘most disadvantaged’ (lowest progression to HE).
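To make the quintile idea concrete, here is a minimal Python sketch of how areas might be grouped. It assumes each area has a count of young people and a count of HE entrants, ranks areas by participation rate, and cuts the ranking so that each quintile holds roughly a fifth of the young population. The `Area` class, its fields and `assign_quintiles` are hypothetical names used only for illustration; HEFCE's actual POLAR method is more involved and may differ.

```python
from dataclasses import dataclass


@dataclass
class Area:
    code: str        # hypothetical area identifier
    young_pop: int   # number of 18- and 19-year-olds living in the area
    entrants: int    # how many of them progressed to higher education


def assign_quintiles(areas):
    """Map each area code to a quintile: 1 = lowest participation, 5 = highest.

    Simplified sketch: areas are ranked by participation rate, and the ranking
    is cut so that each quintile holds roughly a fifth of the young population.
    """
    ranked = sorted(areas, key=lambda a: a.entrants / a.young_pop)
    total = sum(a.young_pop for a in ranked)
    quintiles = {}
    cumulative = 0
    for area in ranked:
        # Place the area by the midpoint of its slice of the ranked population
        midpoint = cumulative + area.young_pop / 2
        quintiles[area.code] = min(5, int(midpoint / total * 5) + 1)
        cumulative += area.young_pop
    return quintiles


# Five hypothetical areas of equal size with different progression rates
areas = [
    Area("A", 100, 50),
    Area("B", 100, 10),
    Area("C", 100, 30),
    Area("D", 100, 20),
    Area("E", 100, 40),
]
print(assign_quintiles(areas))  # {'B': 1, 'D': 2, 'C': 3, 'E': 4, 'A': 5}
```

Weighting by population rather than by number of areas is what lets a single large, low-participation area fill most of quintile 1 on its own.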

POLAR can be useful. It’s a helpful way to demonstrate persistent inequalities in access to UK higher education. It’s also a readily available data point that universities can use to target their support before entry and during admissions. In theory, when combined with other pieces of information about a participant, it can be a powerful way to secure greater equity in higher education. The gap between entry rates for the most and least disadvantaged groups (according to POLAR) has narrowed by about 35% since POLAR was first introduced in 2005.

Cracks in the data

But in practice, it’s not quite so straightforward. The main – and well-rehearsed – issue with POLAR is that in densely populated areas it can be a crude tool for analysis. POLAR3 measures progression at ward level – in England and Wales a ward is, on average, around 2,200 households. But in London a ward is around 5,100 households, and it’s highly unlikely that all the 18- and 19-year-olds in those 5,100 families have had the same life experiences and opportunities. Many urban areas have tower blocks next to mansions.

HEFCE reviewed this problem in 2014 and concluded that the variation within wards is acceptable, even in London (where over 10% of genuinely disadvantaged students fall into the most ‘advantaged’ POLAR classification). But universities remain concerned that disadvantaged students may be falling through the gaps.

The second issue is that POLAR measures progression into any university. But the gap in entry rates is biggest and most persistent at high-tariff institutions. In 2017, pupils from POLAR3 quintile 5 were 5.5 times more likely to enter high-tariff providers than those from quintile 1. It’s entirely possible that London’s overall high entry rates mask areas of continuing and deep inequality when it comes to competitive universities.
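A "5.5 times more likely" figure is a ratio of two entry rates. The same gap can also be expressed as a percentage-point difference, and the two framings need not move together over time. The short Python sketch below computes both; the function name `entry_gap` and the input rates are hypothetical, chosen only so the ratio comes out at 5.5, and are not the actual 2017 figures.

```python
def entry_gap(rate_q5: float, rate_q1: float) -> tuple[float, float]:
    """Return (ratio, percentage-point difference) between two entry rates."""
    if rate_q1 <= 0:
        raise ValueError("the quintile 1 entry rate must be positive")
    ratio = rate_q5 / rate_q1
    difference_pp = (rate_q5 - rate_q1) * 100
    return ratio, difference_pp


# Hypothetical entry rates, chosen only so that the ratio comes out at 5.5
ratio, diff = entry_gap(0.22, 0.04)
print(f"{ratio:.1f} times as likely; gap of {diff:.0f} percentage points")
# 5.5 times as likely; gap of 18 percentage points
```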

Does this matter? Different universities use different baskets of measures to target their pre-entry activities and contextual admissions policies. Disadvantage is multi-dimensional, so widening participation teams and university admissions officers treat it as such. We target our support using lots of indicators – which should be able to compensate for these data issues – so why does it matter if POLAR is sometimes a bit ‘off’?

One metric to rule them all, one to bind them

Well, get your bingo cards ready. TEF. OfS. Attainment gap. LEO. Performance indicators. We live in a world of dashboards, report cards and metrics. Because it’s freely available, easily gathered, endorsed by HEFCE and generally understood within the relevant parts of universities, POLAR has become the default measure for disadvantage. Since the measure of students’ parental occupation (NS-SEC) was dropped over concerns about data quality, POLAR is the only HESA performance indicator for ‘deprivation’, and it’s used in NSS and LEO analysis (which then contribute to TEF). Although HEFCE themselves acknowledge that it should not be used in isolation, POLAR has become a pretty universal shorthand for disadvantaged students, much as free school meals (FSM) eligibility is in schools.

So POLAR does matter, and it’s important to pay attention to the latest reboot. We’ve done some preliminary analysis of the London data at LSE and it’s turned up some interesting findings. POLAR4 uses the snappily titled Middle Layer Super Output Area (MSOA) rather than the ward. MSOAs are more consistently sized than wards: around 3,200 households in both London and the country as a whole. This is good news for London, where the unit shrinks from 5,100 households and the data become more granular. But the rest of the country may be at greater risk of the hidden variation uncovered in HEFCE’s 2014 analysis, as the population assessed for progression grows by around 45%, from 2,200 to 3,200 households.
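The household figures quoted above can be checked with a few lines of arithmetic. This Python sketch simply computes the percentage change in the average number of households per geographic unit when moving from wards (POLAR3) to MSOAs (POLAR4); `percent_change` is a hypothetical helper name.

```python
def percent_change(old: float, new: float) -> float:
    """Percentage change from old to new."""
    return (new - old) / old * 100


# Average households per geographic unit, as quoted in the text
london_ward, national_ward, msoa = 5_100, 2_200, 3_200

print(f"London: {percent_change(london_ward, msoa):+.1f}%")               # London: -37.3%
print(f"England and Wales: {percent_change(national_ward, msoa):+.1f}%")  # England and Wales: +45.5%
```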

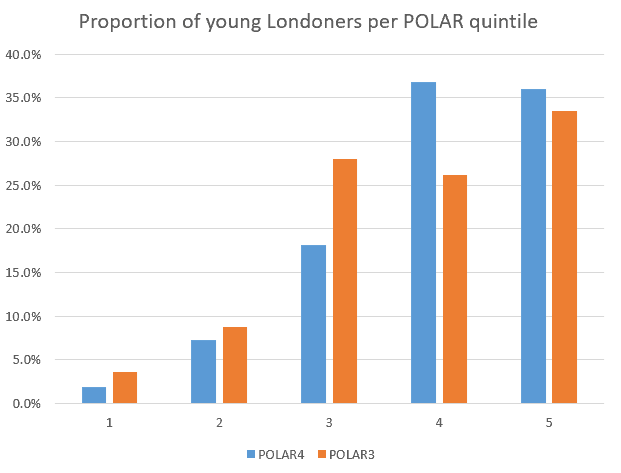

In London, a much smaller proportion of the young population is classified in the three most deprived quintiles using POLAR4, compared to POLAR3. And the proportion of geographic areas in London that are in the most deprived three quintiles has also decreased.

Although other measures also suggest that disadvantage in London has decreased over a similar timeframe, the overall picture is less rosy. Two-thirds of London is classified as more deprived than average according to the Index of Multiple Deprivation (the Government’s core measure of deprivation) – quite a different impression from that given by POLAR, which only measures one dimension of disadvantage.

Source: HEFCE data

At a practical level, this might not matter too much. For instance, here at LSE we will continue to use our basket of indicators to identify students who are most likely to benefit from our successful pre-entry programmes and to target the contextual admissions policy that has contributed to our strong performance to date.

National metro-problems

But at a policy level – it’s a worry. We know that many disadvantaged students choose a local university so that they can continue to live at home. Universities in metropolitan areas with high living costs (especially London) may see a steep decline in their proportion of ‘disadvantaged’ students when the new measure comes in, not because their intake has changed but because the way that intake is classified is different.

On the one hand, it’s right that universities should be measured on a more accurate scale. But the inevitable negative headlines when ‘numbers’ decline aren’t just a worry for universities’ PR teams. The messages – overt and covert – that disadvantaged students receive about universities are very powerful. Tell them that this is an institution where they won’t find ‘anyone like me’ and they simply won’t apply. POLAR4’s system-wide shift towards showing fewer disadvantaged students in urban areas means there could be real damage to progress made in recruiting such students to high-tariff institutions.

Better tools for the job

The sector needs a more nuanced measure of progression – most likely multiple data-sets. Working in a high-tariff, highly competitive institution, I’d like to know more about progression rates to our kind of university from different areas in London – and the country as a whole. I’d like to know more about how other geographic classification systems – CACI’s ACORN, increasingly used in universities, or the open source National Classification of Census Output Areas – can be linked to university progression, to give a more detailed view of disadvantage. And I’d certainly like some recognition from official bodies that the creeping reliance on POLAR as the only proxy for disadvantage needs to stop – that we need time to think about whether it is genuinely fair or useful in the new contexts where it’s being applied.

If POLAR continues to become the proxy measure for ‘deprivation’ in the new risk-based, outcomes-focused regulatory world, there’s a real risk that universities will be forced to play to the single dimension of disadvantage that POLAR allows us to articulate. In the end, it’ll be talented and able students who are left out in the cold.

LSE is putting on the Beveridge 2.0 festival, a series of free events running from 19-24 February, rethinking the welfare state for the 21st century and the global context, including a strand of events tackling Beveridge’s “Giant” of Ignorance.

I share these concerns about POLAR. With the removal of NS-SEC, we’ve lost the only measure of socio-economic status that we had in the sector. It has still not been replaced, despite several possible alternatives supposedly being developed in 2015, with results due to be published in 2016: http://www.hefce.ac.uk/pubs/year/2015/CL,172015/

This paper by Colin McCaig and Neil Harrison gives a good overview of the issues with POLAR and how it’s being applied: http://shura.shu.ac.uk/7805/1/McCaig_-_an_ecological_fallacy_-_post_publication_version.pdf

As just one example of POLAR falling short as a measure of ‘deprivation’, at my institution (Queen Mary University of London), half of our UK UG student cohort is eligible for a means-tested bursary (that is, they are from a low-income background) and over 40% are from lower socio-economic backgrounds according to NS-SEC. Yet less than 5% of our student cohort is from a POLAR Q1 area, because many of our students are recruited from London – and this last statistic is the only one we’re publicly measured on.

Wow Jess, that is a really stark difference between bursaries, NS-SEC and POLAR, I had no idea! I’m going to look into this at LSE as well: I know we have a lot more students on bursaries than POLAR Q1 students but I’ve not looked at crossover. It would be great to do this across institutions.
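Looking at the crossover between bursary eligibility and POLAR Q1 amounts to a simple cross-tabulation. The Python sketch below counts students by (bursary-eligible, from POLAR Q1) using the standard library's `collections.Counter`; the student records and the field names `bursary` and `polar_quintile` are hypothetical, invented purely to show the mechanics.

```python
from collections import Counter


def crosstab(students):
    """Count students by (bursary-eligible?, from a POLAR Q1 area?)."""
    return Counter((s["bursary"], s["polar_quintile"] == 1) for s in students)


students = [  # hypothetical records; field names are illustrative only
    {"bursary": True, "polar_quintile": 1},
    {"bursary": True, "polar_quintile": 3},
    {"bursary": True, "polar_quintile": 5},
    {"bursary": False, "polar_quintile": 4},
    {"bursary": False, "polar_quintile": 1},
]

table = crosstab(students)
bursary_total = table[(True, True)] + table[(True, False)]
print(f"{table[(True, True)]} of {bursary_total} bursary holders are from POLAR Q1")
# 1 of 3 bursary holders are from POLAR Q1
```

Running the same count across institutions would only need each one to supply the two fields per student.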

I understand the problems with self-declared data about parental background and bursary eligibility, but there HAS to be something better. Especially now that universities and schools are both under the DfE, it’d be nice to explore a link with the National Pupil Database (NPD) so we can get FSM or pupil premium data. Even if it’s only available retrospectively and not at admissions, it’d be a start.

A really timely and interesting blog Ellen. I have similar concerns about over-reliance on POLAR. For my institution, which is inner-city based, looking at POLAR alone you’d think our student profile is very different to what it is in reality.

I’d like to recreate your analysis for Birmingham, but I’m not sure where to start.

Could UCAS’s ‘multiple equality measure’ (MEM) help here at all? It does take a more nuanced approach to disadvantage. https://www.ucas.com/corporate/data-and-analysis/ucas-undergraduate-releases/equality-and-entry-rates-data-explorer#sankey

Really interesting article Ellen. Like your institution, we at NTU use a basket of proxies for socio-economic group / WP, all of which have their own strengths and limitations. One thing that I think is often overlooked is that POLAR was, and is, a measure of differences in participation rates in HE, and it certainly has its uses for those purposes. It is definitely not, though, a great proxy for student success once students have actually entered HE. However, HESA’s published non-continuation rates for ‘disadvantaged students’ use POLAR.

Within our institution, we’ve done a lot of statistical analysis of retention rates, failure rates, degree attainment rates and DLHE outcomes. For example, we’ve recently applied the OFFA statistical model for the evaluation of student financial support. This model highlights which factors remain influential to student success (non-continuation of new entrants, degree completion within five years, degree attainment, DLHE graduate outcomes). Using POLAR data in the model as the sole proxy for disadvantage does show a small yet significant relationship; i.e. POLAR Q1 students are less likely to succeed across the student life cycle when statistically controlling for all other known and available explanatory factors (reflecting HEFCE’s national data report on differences in student outcomes).

But... and it’s a big but, add in another WP proxy (I used a commercially available geo-demographic product which is derived from data relating to very small geographies – little acorns and all that – but even IMD would fare better if previous analysis is anything to go by) and the effect of POLAR is effectively wiped out. In other words, POLAR is a poor predictor of students’ success once they enter university.

I very much suspect that this is largely because many students from POLAR quintile 1 are actually from the more advantaged pockets of these overall ‘disadvantaged’ wards (now MSOAs); they are not from disadvantaged areas at all, as cross-tabulation with other proxies (based on smaller geographical units) would testify. And this matters because it can create perverse incentives, such as potentially using POLAR as the sole proxy for making contextual offers.
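The pattern described in this comment, where POLAR's apparent effect disappears once a second proxy is added, is a classic confounding effect. The Python sketch below is not the OFFA statistical model, which the comment describes as controlling for many explanatory factors; it is a much simpler stratified comparison on invented counts. The field names, the "low"/"high" proxy groups and all the numbers are hypothetical: POLAR Q1 students are placed mostly in the "low" group, but within each group Q1 and non-Q1 students succeed at the same rate.

```python
from collections import defaultdict


def success_rates(records, stratify_by=None):
    """Success rate for POLAR Q1 vs other students, optionally within strata.

    Keys are (stratum, is_polar_q1); the stratum is "all" if not stratifying.
    """
    totals = defaultdict(lambda: [0, 0])  # key -> [successes, students]
    for r in records:
        stratum = r[stratify_by] if stratify_by else "all"
        cell = totals[(stratum, r["polar_q1"])]
        cell[0] += int(r["succeeded"])
        cell[1] += 1
    return {key: s / n for key, (s, n) in totals.items()}


def make_records(cells):
    """Expand {(polar_q1, proxy_group): (students, successes)} into records."""
    records = []
    for (polar_q1, group), (n, successes) in cells.items():
        for i in range(n):
            records.append(
                {"polar_q1": polar_q1, "proxy": group, "succeeded": i < successes}
            )
    return records


# Hypothetical counts: POLAR Q1 students are concentrated in the "low" proxy
# group, but within each proxy group Q1 and non-Q1 students succeed equally.
records = make_records({
    (True, "low"): (10, 6),
    (True, "high"): (5, 4),
    (False, "low"): (5, 3),
    (False, "high"): (20, 16),
})

print(success_rates(records))           # overall: Q1 looks worse
print(success_rates(records, "proxy"))  # within proxy groups: no Q1 gap
```

A regression with both proxies as explanatory variables would be the more usual way to do this; stratifying just makes the mechanism visible by hand.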