Why did commentators and pundits in the political world make such a hash of prediction in 2016? Here’s a clue: Google ‘credit crunch’ 2009 and you will find hundreds of expert articles explaining in detail why 2008 happened and how it was all inevitable.

Tellingly this cleverness is retrospective. Try looking for accurate predictions written in 2007 or for that matter 1986 or 2000 about the tumultuous year that followed. You won’t find many. One forecast that can be made with some degree of certainty is that the crystal-ball gazers will continue on average to get it wrong most of the time. It’s just that in 2016 there was more to be wrong about. As Victor Borge famously said, “forecasting is always difficult, especially with regard to the future”.

But this class of ignorance is not confined to journalists, politicians and policy wonks. Human beings in general suffer from a deep inability to foresee unexpected events with any degree of clarity, and even when we do catch a glimpse, we often fail to appreciate the severity of what is to come. I’m not talking about Brexit or Trump, as both outcomes were entirely predictable months before they happened. The surprised response says more about the degree of groupthink permeating our universities (and liberal elites generally) than any failure of statistical models to predict what was in effect a simple binary choice.

No, the things to which I refer are more generally associated with the natural world: disasters that have a huge impact on timescales of minutes to months, have global reverberations and are genuinely unpredictable because, like most events that take us truly by surprise, we have little or no understanding of them before they occur.

The equivalents of earthquakes, volcanoes and tsunamis do sometimes take on human form in the guise of stock market crashes, terrorist attacks or pandemics. The language of seismic shocks, tectonic shifts and faultlines permeates popular discourse easily enough, but most geoscientists long ago gave up on making strong predictions about the occurrence of natural disasters.

It’s true that research is slowly chipping away at the underlying physics of earth systems to improve our understanding, but their fundamentally random nature ensures the task of accurate prediction is always beyond our grasp.

So, why is quantifying a future outcome with any level of certainty a mug’s game? From a geo-hazards perspective, a major difference between the study of things that are genuinely non-random and those that are random is that the former tend to follow a Gaussian (bell curve) distribution around an average value (think foot size in a class of school children clustering around the mean).

A normal distribution of this kind is quite common and allows predictions based on probabilities to be made, such that 68% of children will have a foot size within one standard deviation of the mean and 99.7% within three standard deviations. Surely the odds of anything significant happening in the remaining 0.3% are too small to worry about?
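For readers who want to check the bell-curve percentages quoted above, here is a minimal sketch using only the Python standard library. The function name `coverage_within` is made up for this illustration; the calculation itself is the standard result for a normal distribution.

```python
import math

def coverage_within(k: float) -> float:
    """Probability that a normally distributed value lies within
    k standard deviations of its mean."""
    return math.erf(k / math.sqrt(2.0))

for k in (1, 2, 3):
    inside = coverage_within(k)
    print(f"within {k} sd: {inside:.2%}   outside: {1 - inside:.2%}")
```

The share left outside three standard deviations is the "remaining 0.3%" in question, and it is only that small if the bell curve really is the right model.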

Unfortunately, the natural world does not work like this. This is because destructive events in nature (say the number of volcanic eruptions over time) do not obey Gaussian distributions. More generally, natural hazards tend to show fractal distributions where the idea of an average value has no meaning.
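One way to get a feel for why an average can lose its meaning is to compare a bell-curve sample with a heavy-tailed one. The sketch below is an illustration under stated assumptions, not an analysis of real hazard data: the foot-length figures (mean 24 cm, standard deviation 1.5 cm) are invented, and a Pareto distribution with shape 1.0 stands in for the kind of power-law behaviour described above. For that shape the theoretical mean is infinite.

```python
import random

def largest_share(values):
    """Fraction of the total contributed by the single largest value."""
    return max(values) / sum(values)

rng = random.Random(2016)  # fixed seed so the sketch is repeatable
n = 100_000

# Thin-tailed: hypothetical foot lengths in cm (mean 24, sd 1.5).
thin = [rng.gauss(24.0, 1.5) for _ in range(n)]

# Heavy-tailed: Pareto draws with shape alpha = 1.0 (assumed stand-in
# for a power-law hazard distribution; its theoretical mean is infinite).
heavy = [rng.paretovariate(1.0) for _ in range(n)]

print(f"thin-tailed : largest value is {largest_share(thin):.6%} of the total")
print(f"heavy-tailed: largest value is {largest_share(heavy):.6%} of the total")
```

In the thin-tailed sample no single child moves the total. In the heavy-tailed sample one extreme draw can account for a visible share of everything observed, so the running average keeps jumping whenever a new outlier arrives.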

So what? Well, it turns out that what Nassim Nicholas Taleb in his book The Black Swan calls the tyranny of the bell curve is responsible in large degree for our failure to make meaningful predictions about, well, almost anything, really. The bogeymen in our story are the assumption of symmetry, which fails because the past does not provide a faithful reflection of the future (outside the purely narrative), and outliers, extreme far-scale events that occur at random, just when you think it safe to enter the water.

How many international students in 2020?

To see how it doesn’t work, let’s look at an example close to the heart of many a vice chancellor. Based on historical trends, how many international students will be studying at UK universities in 2020? First, some semantics. Predicting and forecasting are sometimes used erroneously to mean the same thing. They are not. A forecast is a judgement made on probability over a period of time. A prediction is a definitive and specific statement about an event occurring. This difference explains why you’ll never hear a weather prediction at the end of the news.

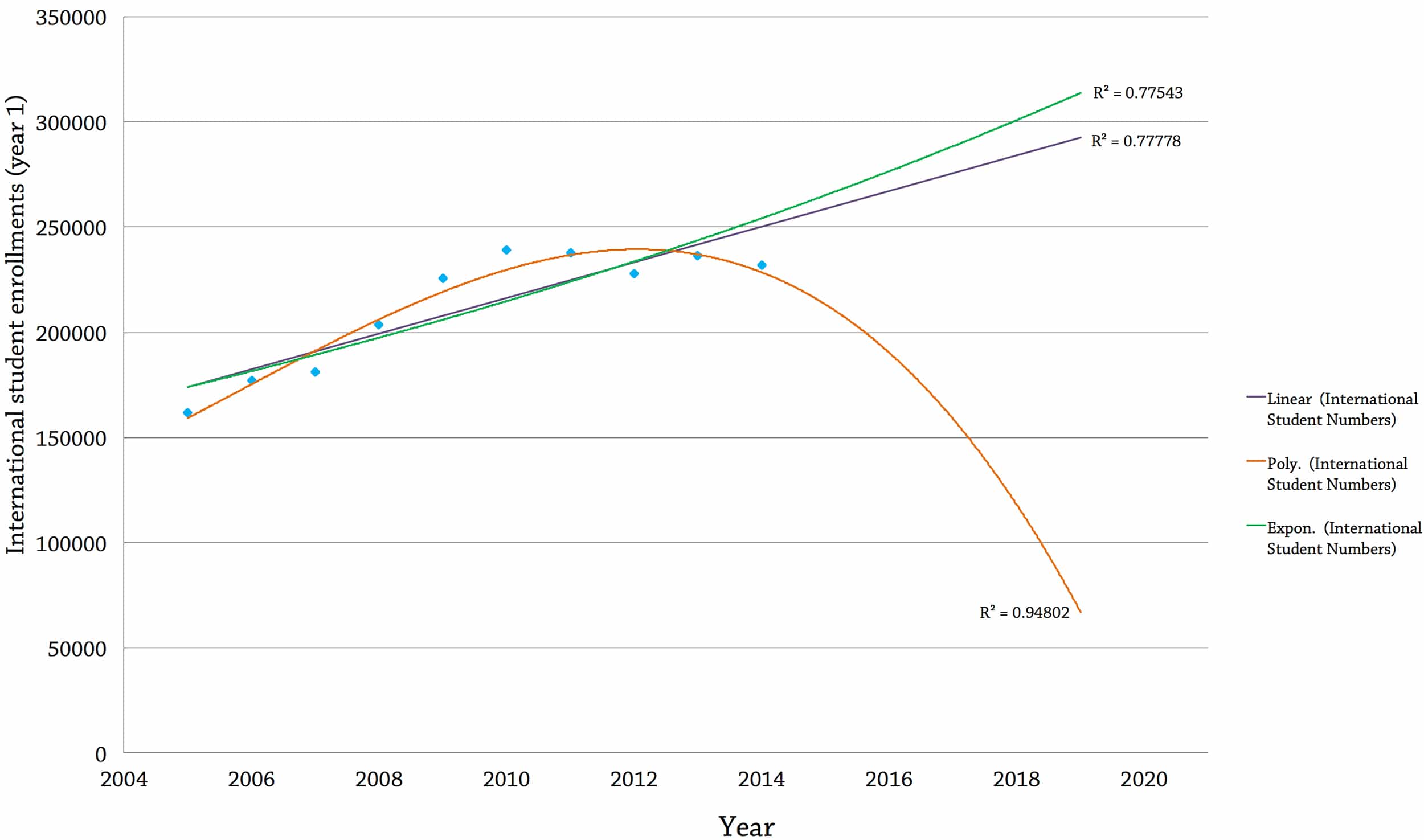

Figure 1 shows the number of international students (non-UK domicile) enrolled onto first-year courses in UK universities between 2005 and 2014. The overall trend is upwards at a rate of 4.3% per year. The question is, will this healthy trend continue? To answer, we can make predictions based on forward modelling of the historical data. This is where the fun begins. The graph shows three curves (predictions) using different statistical techniques, along with their R² values that measure goodness of fit between the regression line and the actual data points (the higher the R² value, the better the fit). The first is a simple linear regression.

The prediction here is that in 2020 there will be about 290,000 enrolled international students, an increase of 25% on 2014 numbers, worth c. £4.4bn to the sector in annual fees assuming a modest average of £15,000 per student. Convinced? Don’t be. Look at the downwards prediction labelled Poly. This curve is a second-order polynomial, matched to the same historical data but with a higher R² value, meaning it fits the data better.

This model predicts that in 2020 there will be fewer than 70,000 first-year international students enrolled at UK universities, a 56% reduction on 2005 numbers. But it gets worse. The best-fit curve to the data, a sixth-order polynomial with an R² of 99.8% (not shown), predicts just 9,000 enrolled first-year international students in 2020. So the same data, projected forwards to the same point in time, give a delta (factor difference between maximum and minimum predictions) of 4.56.

The problem is that no unique answer exists to the simple question: how many non-UK domiciled students will be studying their first year at a UK university in 2020?
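The effect described above is easy to reproduce. The sketch below fits first-, second- and sixth-order polynomials to the same ten data points and projects each to 2020. Note the assumptions: the enrolment figures are synthetic numbers invented for this illustration (they are not the HESA data behind Figure 1), and NumPy's `polyfit` is used as a stand-in for whatever fitting tool produced the original curves.

```python
import numpy as np

# SYNTHETIC enrolment figures for illustration only; NOT the HESA data.
years = np.arange(2005, 2015)
enrolled = np.array(
    [155, 160, 172, 185, 200, 215, 228, 230, 226, 232], dtype=float
) * 1_000

# Centre the years so the higher-order fits stay well conditioned.
t = years - years.mean()
t_2020 = 2020 - years.mean()

def fit_and_project(degree):
    """Least-squares polynomial fit of the given degree.
    Returns (R^2 on the historical points, projected value for 2020)."""
    coeffs = np.polyfit(t, enrolled, degree)
    fitted = np.polyval(coeffs, t)
    ss_res = np.sum((enrolled - fitted) ** 2)
    ss_tot = np.sum((enrolled - enrolled.mean()) ** 2)
    return 1.0 - ss_res / ss_tot, float(np.polyval(coeffs, t_2020))

results = {degree: fit_and_project(degree) for degree in (1, 2, 6)}
for degree, (r2, projection) in results.items():
    print(f"degree {degree}: R^2 = {r2:.4f}, projected 2020 = {projection:,.0f}")
```

Because each higher-order polynomial contains the lower-order ones as special cases, its R² on the historical points can never be worse. That is exactly why a better fit to the past says so little about which projection to trust.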

As always, business-as-usual overestimation is a major risk, and universities are certainly not immune. HEFCE allude to this in their analysis of 2015-16 to 2018-19 sector forecasts predicting continued growth. So what can be done? From a natural hazards perspective, the best course of action is to resist the temptation to spend huge effort on improving a given prediction.

Effort instead should focus on optimising ways to mitigate the risks associated with the range of possible outcomes. For universities, this would mean planning for at least a four-fold variation in international fee income by 2020. At Northampton, we thought it too risky to include any projected international student income in our £350 million Waterside financial plan.

What could cause a sudden, steep drop off in numbers in the worst case (polynomial) scenario? A dull response would invoke student visa restrictions which, while undesirable, would be announced in advance, making it a) not unexpected and b) manageable within certain limits. No, this calls for a Black Swan. Taking a natural disaster perspective, a repeat of the 1918-1920 flu pandemic, which killed an estimated three percent of the world’s population and especially targeted healthy young adults, would do it. Sounds fanciful? It’s happened already, and that was before higher education became globalised. Next time there may not be much in the way of antibiotics to help either.

Figure 1: Graph showing historical and projected enrolled first-year international student numbers to 2020 at UK universities (data from HESA).

Note how each model extrapolation (linear, exponential, polynomial) fits the historical data with a good degree of accuracy. Unfortunately, the predicted range in outcomes (the thing we actually want to know) is highly divergent.

Volatility

To cheer you up I end with volatility. How many times (probably during the same day) have you heard university types use the word ‘volatile’ to describe the sector? Lots, I would wager. True, we live in a VUCA (Volatile, Uncertain, Complex, Ambiguous) world, of which universities are a subset. But what exactly do people mean when they refer to ‘volatility’ and universities in the same breath? Perhaps it’s the sentiment expressed by the US academic Burton Clark in his book Creating Entrepreneurial Universities: Organizational Pathways to Transformation: “The universities of the world have entered a time of disquieting turmoil that has no end in sight”. Hard to argue with, except for the fact these words were written before the start of the new Millennium. Maybe some can predict the future after all.

No-one would disagree it’s a busy time, with externally-imposed changes shaking things up. But I would urge caution in the use of the word volatile (a corollary of which would be random in the natural world), at least when it comes to the single most important thing concerning the financial stability of universities – student number applications. To those universities managing swings in applicant numbers over the past few years (mine included) this may seem a provocative statement.

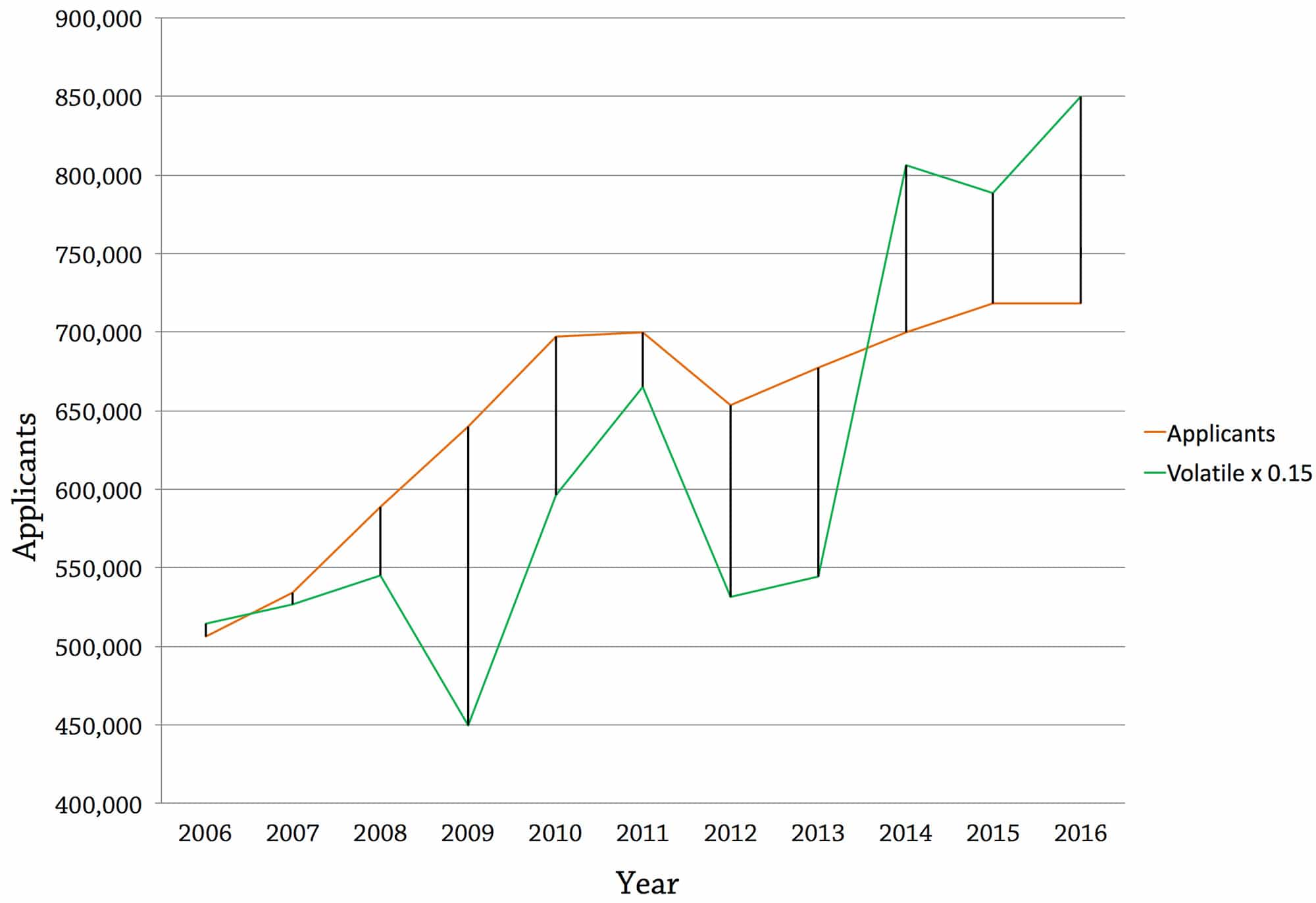

The caveat here is that the ‘law’ of large numbers smooths out local variations, meaning that from a sector perspective, student applications over the last decade have been remarkably stable, despite all the changes to funding. Unconvinced? Figure 2 shows two curves. The one in orange is the total number of student applications to UK universities over the ten-year period from 2006 to 2016 (the most recent data available from UCAS). Before reading on, stop and ask yourself if you think this trend is volatile.

Figure 2: Actual applicants to UK universities (source UCAS) and modelled applicant trends assuming 15% volatility (If you think this is bad you should see what 40% volatility does).

If you answer yes, you need to get out more. Applications are driven by a time trend worth +5.5% per year but with a one-off downward shift in 2012 of -16.6%. The residual standard error is just 4.7%. By contrast, the green line shows what applications would look like using the volatility of real sterling returns from DM equities (15%) fitted retrospectively to the same UCAS data.
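To see the difference between 4.7% and 15% for yourself, the comparison can be sketched roughly as follows. This is a toy model under loud assumptions, not the calculation behind Figure 2: the starting level of 500,000 applicants is invented, the noise is drawn independently each year around the trend, and the function name `modelled_applicants` is hypothetical. Only the +5.5% trend, the -16.6% shift in 2012 and the two noise levels come from the text.

```python
import math
import random

def modelled_applicants(noise_sd, shocks, start=500_000.0,
                        growth=0.055, step_2012=-0.166):
    """Trend with a one-off 2012 shift, multiplied by log-normal noise.
    `start` is an invented level; `shocks` holds one standard-normal
    draw per year from 2006 to 2016."""
    path = []
    for i, year in enumerate(range(2006, 2017)):
        level = start * (1.0 + growth) ** i
        if year >= 2012:
            level *= 1.0 + step_2012  # one-off downward shift
        path.append(level * math.exp(noise_sd * shocks[i]))
    return path

rng = random.Random(7)  # fixed seed so the sketch is repeatable
shocks = [rng.gauss(0.0, 1.0) for _ in range(11)]  # reused for both paths

calm = modelled_applicants(0.047, shocks)  # residual error quoted for UCAS data
wild = modelled_applicants(0.15, shocks)   # equity-style volatility
for year, c, w in zip(range(2006, 2017), calm, wild):
    print(f"{year}: 4.7% noise {c:>10,.0f}   15% noise {w:>10,.0f}")
```

Reusing the same random shocks for both paths means the only thing that differs is the size of the swings, which makes the two columns directly comparable.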

Simple visual inspection is probably enough to send the most risk tolerant finance director into a flat spin. As far as university applications are concerned, there is currently no significant volatility at the national level. To see what volatility in industry looks like, take a glance at freight companies, oil prices or currency exchange rates. To anyone who remains sceptical, do the calculation yourself then pray that such a fate never befalls us collectively.

I make no special claim to knowing more about the future than anyone else, but I would be surprised if applicant volatility reached anything like the levels seen in other sectors. But then the future might hold something different, a natural or man-made disaster with a real sting in the tail. Unfortunately, there is no way to tell.

This is very interesting because effective risk management can help plan for a number of different scenarios in relation to student numbers. By analysing the range of alternative descriptions of the future, a university can see what could be driving the change and plan for the uncertain outcomes.

Quite interesting but not as interesting as the image, which is certain of course.

Adrian

Sitting here four years after this article was published, there’s a pandemic on and I think about this article quite a lot.