Students in London pay £9.10 for a pint. Or do they?

Jim is an Associate Editor (SUs) at Wonkhe


But you’ll forgive me for being suspicious when a firm I’ve never heard of secures media coverage for provider-level stats.

According to this piece in the Falmouth Packet, Falmouth University has been voted as one of the worst universities in the country for mental health support. Sounds bad!

It says that 97 per cent of Falmouth students surveyed said that mental health support at the university was either “poor” or “inadequate”. Meanwhile poor old Solent University gets 100 per cent poor or inadequate, and Bedfordshire 98 per cent.

Maria Ovdii from Ivory Research is on hand to wag the finger at the sector:

“University is a challenging time for all students, but the pandemic has seen students isolated from their peers, tutors and the wider learning community so it comes as no surprise that mental health issues are on the rise.”

“Now more than ever, it is so important that universities are doing everything they can to support their students, both academically and emotionally.”

Naturally, there is no trace of this press release on the website of “Ivory Research”, nor is there any information on sample sizes.

What’s that you say? Who is Ivory Research with their “now more than ever” moralising?

It’s an essay mill, and Maria’s the boss.

That would all be bad enough, but after publication the Packet contacted the company to ask about its methodology and survey sample sizes – which you’d assume would need to be very large to support provider-level data. Not so much:

Survey data now provided to the Packet shows that in fact just one person listed themselves in the survey as being from Falmouth University and that only 1,000 students from across the UK were surveyed in total, spread across 159 universities.

A spokesperson for the company that shared the report said that after re-examining the data it felt the sample size of students per university was too small to depict an accurate picture of specific universities in terms of a list of “worst”, so was no longer promoting it in this way.
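To put those numbers in perspective, here is a back-of-the-envelope sketch. It uses only the two figures the Packet reported (1,000 respondents, 159 universities) and assumes, purely for illustration, that respondents were spread evenly, which the survey data suggests they were not. The helper name `possible_percentages` is hypothetical.

```python
def possible_percentages(n: int) -> list[float]:
    """Every percentage a yes/no question can produce with n respondents."""
    return [round(100 * k / n, 1) for k in range(n + 1)]


# Figures as reported by the Packet
TOTAL_RESPONDENTS = 1000
UNIVERSITIES = 159

# Assumes an even spread, purely for illustration
mean_per_uni = TOTAL_RESPONDENTS / UNIVERSITIES
print(f"{mean_per_uni:.1f} respondents per university on average")

# With a single respondent (as at Falmouth), a share can only be 0% or 100%
print(possible_percentages(1))

# Even at the average of roughly six, results jump in steps of about 17 points
print(possible_percentages(6))
```

Even on the generous even-spread assumption, each university averages around six respondents, so any "per cent of students at X" figure moves in jumps of roughly 17 percentage points per person.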

In some ways I can sort of rationalise a dodgy survey purporting to show actionable provider-level results emerging from an essay mill. But when another survey purporting to provide actionable insights at the provider and city level emerges from a high-street bank, that’s another level.

In my social feeds yesterday, I first saw a bit of research-cum-PR piffle from money.co.uk, informing me that:

“The average cost of a pint in London is £5 – but many Londoners would probably consider that to be a conservative estimate based on their own drinking experiences in the capital. The average cost of a pint in San Francisco is £5.73, the most expensive on the list according to the study. In Melbourne, Australia, the average price for a pint is £5.21 and in Boston, USA, it’s £5.01, making London the fourth most expensive place to buy a pint in the world.”

Fine. But underneath it, a tweet about the press release accompanying the annual NatWest “Student Living Index” told a different story. Under the headline “student cost of living skyrockets”, I was reliably informed that:

“London also topped the most expensive place for a pint, with students paying £9.10 per drink, in comparison to Sheffield where students can expect to pay just £3.20.”

Now I know that the price of a pint in London can often shock my friends in the North – but £9.10? What were they drinking?

The rest of the release has some similarly odd or unhelpful findings (“Over nine in ten students used some kind of mechanism to cope with stress”), but what really caught my eye were the provider-level findings. Apparently, Exeter students are the most committed to saving, Cambridge students gave their university top marks for the overall support they were given during the crisis, and Durham received the lowest ranking in terms of value for money – interesting conclusions from a survey that had only 2,337 respondents.

Naturally, further digging ensued, which led to a 92-page PDF of findings for local newspapers to clip and collect. And this is where the fun really starts.

Here we’re told that Glasgow students are most likely to find value in their online education provided by their uni by far (3× more likely than the average), that Oxford ranks the highest for overall student wellbeing, and less than 1 in 5 UK students find managing their money stressful – “especially in Durham, Edinburgh and Birmingham”.

Hmm. Underneath the majority of the graphs, most of which appear to be describing student cities rather than individual providers, we get the following legend:

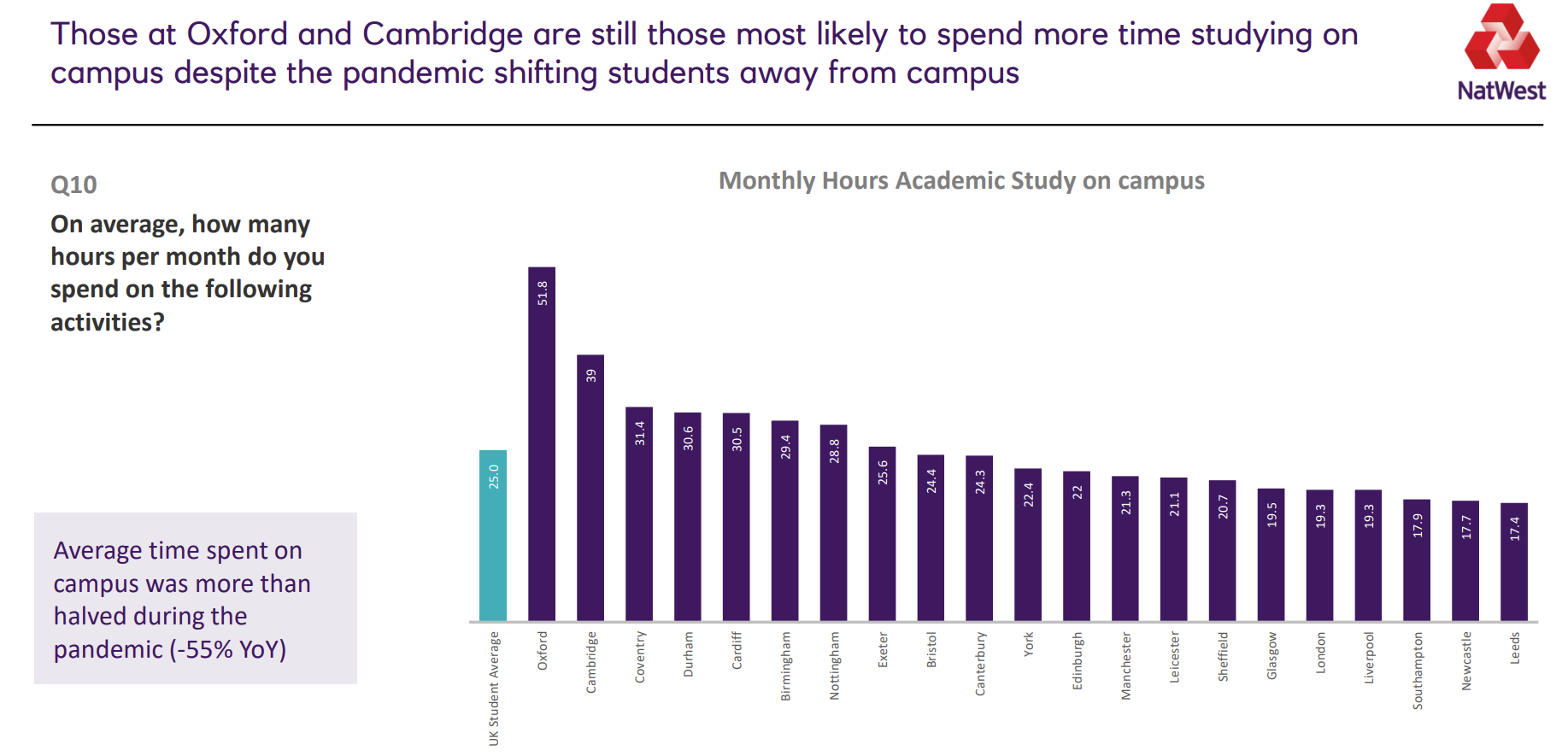

Base: N =2319 respondents; Birmingham (134), Bristol (104), Cambridge (86), Canterbury (66), Cardiff (59), Coventry (120), Durham (51), Edinburgh (93), Exeter (53), Glasgow (84), Leeds (95), Leicester (88), Liverpool (84), London (147), Manchester (105), Newcastle (96), Nottingham (126), Oxford (62), Sheffield (99), Southampton (57), York (62).
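One rough way to see why those bases are thin is the textbook 95% margin of error for a proportion, 1.96 × √(p(1 − p)/n), taken at the worst case p = 0.5. The sketch below applies it to the city bases printed in the legend. It assumes simple random sampling within each city, which a panel survey like this one is unlikely to satisfy, so treat the results as a floor on the uncertainty rather than a precise figure. The function name `margin_of_error` is hypothetical.

```python
import math

# City bases as printed in the legend of the NatWest report
CITY_BASES = {
    "Birmingham": 134, "Bristol": 104, "Cambridge": 86, "Canterbury": 66,
    "Cardiff": 59, "Coventry": 120, "Durham": 51, "Edinburgh": 93,
    "Exeter": 53, "Glasgow": 84, "Leeds": 95, "Leicester": 88,
    "Liverpool": 84, "London": 147, "Manchester": 105, "Newcastle": 96,
    "Nottingham": 126, "Oxford": 62, "Sheffield": 99, "Southampton": 57,
    "York": 62,
}


def margin_of_error(n: int, p: float = 0.5, z: float = 1.96) -> float:
    """Normal-approximation margin of error for a proportion.

    Assumes simple random sampling; p = 0.5 gives the widest interval.
    """
    return z * math.sqrt(p * (1 - p) / n)


# Print cities from smallest to largest base, with margins in percentage points
for city, n in sorted(CITY_BASES.items(), key=lambda kv: kv[1]):
    print(f"{city:<12} n={n:>3}  ±{100 * margin_of_error(n):.1f} points")
```

On those assumptions, Durham’s 51 respondents give a margin of roughly ±13.7 percentage points and London’s 147 roughly ±8.1, which is wide enough to swallow most of the city-versus-city differences the release headlines.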

OK, you’re thinking, these are all about place rather than provider. They must be – even if YouthSight had only asked “Uni of” students in Nottingham or Cardiff, there’s no “University of Canterbury”. So we must be talking about place – the city the provider is in.

But loads of the findings are about university-specific issues, and loads are about expenditure in a year when large swathes of students have been somewhere else. And when you look back at the press release, you find providers and places mentioned interchangeably. For example, one minute you get:

“Students at historic universities, namely Oxford, Cambridge, Durham and Edinburgh, rely the most on parents and family income. Combined, these students receive over £350 in additional support from parents or family.”

And another minute you get:

“Manchester has reclaimed its spot over Birmingham and London as the most fashion-forward city, spending an average of £54.70 a month on clothes, shoes and accessories – 45% above the national average of £34.50.”

There are pages and pages and pages of this, with baffling graphs like this one:

If this is multi-provider city level stuff, is NatWest really trying to claim that hours spent on campus on multiple courses in multiple providers across a city are consistent enough for sample sizes to be this small?

And if not, what use is a survey on things like mental health provision whose results only identify a single provider in each city for students weighing up going to Nottingham Trent instead of “uni of”? And even then, what does “London” mean, when there are almost as many providers in London on the OfS register as there are respondents in the survey?